AUTOMATION WORKFLOW

Step 1: Search News


Scraped Articles

| Title | URL | Images | Scraped At | Status | Action |
|---|---|---|---|---|---|

| Humanoid robots join human models in rampwalk at Seoul fashion … | https://interestingengineering.com/ai-r… | 10 | Jun 05, 2026 00:00 | active | |

Humanoid robots join human models in rampwalk at Seoul fashion show

URL: https://interestingengineering.com/ai-robotics/humanoid-robot-fashion-show

Description: Humanoid robots wearing designer clothing walked alongside human models at a futuristic fashion show in Seoul.

Content:

The Seoul fashion show explored how humans and robots may coexist in everyday life. Humanoid robots wearing designer outfits walked alongside human models at a fashion show in Seoul this week, offering a glimpse into how South Korea’s technology industry imagines a future where robots are not just tools, but participants in everyday cultural life. The event, called the “Mach33: Physical AI Fashion Show,” was hosted by South Korean entertainment technology company Galaxy Corporation and featured robots and humans walking the runway together in coordinated outfits. Videos and images from the show depicted humanoid robots strutting down the catwalk, posing beside models, and performing synchronized choreography. According to Reuters, the event was designed around the idea of humans and robots coexisting in daily life, with matching outfits intended to imagine how future interactions between people and physical AI systems might look. The fashion show took place at Galaxy Robot Park in Seoul, a recently opened robot-themed entertainment complex that combines robotics, artificial intelligence, K-pop culture, and interactive attractions. Humanoid robots have traditionally been demonstrated in controlled industrial settings, research laboratories, or technology exhibitions focused on engineering capabilities.
The Seoul event reflected a growing shift toward presenting robots in social, cultural, and entertainment environments rather than purely technical ones. According to Reuters and local South Korean media reports, the runway presentation featured robots dressed in designer clothing and paired with human models, with organizers describing the concept as a “physical AI” showcase. Galaxy Corporation has increasingly positioned itself as an “enter-tech” company that combines entertainment and advanced technology. The firm is also known for managing major Korean entertainment figures, including K-pop star G-Dragon. The fashion show was part of a broader effort by the company to expand robot-centered entertainment experiences. Reports indicate Galaxy also plans robot concerts, interactive performances, and additional AI-focused cultural events. The runway event arrives as South Korea continues to strengthen its position as one of the world’s most robot-intensive economies. South Korea has one of the highest robot densities globally, with more than 1,000 industrial robots for every 10,000 workers. The country has invested heavily in automation, advanced manufacturing, artificial intelligence, and humanoid robotics. In recent years, South Korea has also launched major initiatives to accelerate domestic humanoid robot development, including collaborations among technology firms, universities, and government-backed research programs. At the same time, robotics companies worldwide are increasingly attempting to move humanoid machines beyond factories and warehouses into environments where they interact more directly with people. That transition remains difficult. While modern humanoid robots have become significantly better at walking, balancing, dancing, and performing choreographed movements, researchers continue to face challenges involving dexterity, autonomy, perception, and natural human-robot interaction. 
Still, events like the Seoul runway show demonstrate how robotics is increasingly being presented not just as an industrial technology, but as part of broader discussions about culture, design, entertainment, and daily life. Whether robot fashion shows become a lasting trend or remain a technological novelty, the sight of humanoid machines sharing the catwalk with human models shows how quickly robotics is moving into spaces once considered uniquely human. Kaif Shaikh is a journalist and writer passionate about turning complex information into clear, impactful stories. His writing covers technology, sustainability, geopolitics, and occasionally fiction. A graduate in Journalism and Mass Communication, his work has appeared in the Times of India and beyond. After a near-fatal experience, Kaif began seeing both stories and silences differently. Outside work, he juggles far too many projects and passions, but always makes time to read, reflect, and hold onto the thread of wonder.

Images (10):


| Humanoid Robots Remain Years Away From Replacing Human Workers | https://cointelegraph.com/news/ai-human… | 10 | Jun 05, 2026 00:00 | active | |

Humanoid Robots Remain Years Away From Replacing Human Workers

URL: https://cointelegraph.com/news/ai-humanoid-robots-years-away-from-replacing-human-workers

Description: AI-powered humanoid robots are still years away from replacing human workers due to challenges with adaptability, reliability, safety and real-world performance, researchers say.

Content:

AI robotics company Figure posted several videos on X throughout May showcasing its robots performing basic tasks, including cleaning a room and sorting packages. Modern artificial intelligence-powered robots are impressive in their capabilities, but are still years away from replacing humans as they can’t yet adapt to changing conditions, researchers say. Last month, AI robotics company Figure showcased its humanoid robots performing basic tasks, such as cleaning a room, but a series of robots working for nine days straight sorting packages sparked conversation about how soon robots could replace jobs. Oliver Obst, an associate professor of robotics at the Australia-based University of New South Wales, told Cointelegraph that repetitive jobs such as physical work in structured environments are currently most at risk of being replaced by robots, while administrative and document-processing tasks could be replaced by AI. There has been growing concern that AI and robots will replace people in jobs as technology advances. A report in May from workforce consulting firm Challenger, Gray and Christmas found that US companies have laid off an estimated 49,135 people in 2026 due to AI. However, Obst said that humanoid robots are unlikely to see a mass rollout soon because they don’t appear to be more efficient or less error-prone than current robotic manufacturing methods. “Even in relatively structured settings, they still face problems with reliability, speed, safety, cost, and recovery from unexpected situations,” he said. “The harder the environment is to control, the harder the robotics problem becomes. Most human jobs involve more variation and more judgment than the package-sorting demonstration.” In another video in May, a human worker managed to sort more packages than a team of Figure’s robots, which swapped out when needing a recharge.
Figure CEO Brett Adcock said it would be the last time “a human will ever win.” Markus Levin, co-founder of decentralized data network XYO, said AI models and automation software can perform repetitive tasks with far greater consistency and endurance than humans; however, robots still require charging, maintenance and supervision. A report in September from the International Federation of Robotics found that global demand for factory robots has doubled over the last decade, with warehouses and logistics among the fastest-growing areas of adoption. “I believe broad human replacement is still likely years away,” Levin added. “Reliability, safety, regulation, infrastructure costs, and trust remain major barriers to full-scale deployment across society. The challenge is no longer simply making machines capable of acting but ensuring they can operate safely and reliably as they take on greater autonomy.” Dr Francisco Cruz Naranjo, a senior lecturer at the University of New South Wales with a PhD in robotics, said the efficiency of robots compared to people depends heavily on the activity and the environment. “Robots are much better at repetitive tasks without the need for constant pauses, as showcased in the Figure livestream. However, in highly dynamic environments, robots still struggle to quickly adapt to changing conditions,” he said. Naranjo said repetitive jobs performed in a less static setting are at risk of being replaced by robots, but it will depend on how quickly research advances and how quickly society adapts in areas like making spaces robot-friendly, which is likely years away. Naranjo and Obst said that a mass rollout of robots in the workforce could be of some benefit, such as improving work-life balance, increasing the workforce in areas with shortages, and addressing dangerous environments that are too risky for humans.
“The social question is harder. If robots make dangerous work cheaper in human terms, that can be good. But it can also have unintended consequences. For example, keeping humans out of harm’s way in military operations may save lives, but it could also lower the perceived cost of conflict,” Obst said.

Images (10):


| Humanoid Robots Remain Years Away From Replacing Human Workers … | https://www.zerohedge.com/ai/humanoid-r… | 10 | Jun 05, 2026 00:00 | active | |

Humanoid Robots Remain Years Away From Replacing Human Workers | ZeroHedge

URL: https://www.zerohedge.com/ai/humanoid-robots-remain-years-away-replacing-human-workers

Description: ZeroHedge - On a long enough timeline, the survival rate for everyone drops to zero

Content:

Authored by Stephen Katte via Cointelegraph. AI robotics company Figure posted several videos on X throughout May showcasing its robots performing basic tasks, including cleaning a room and sorting packages. Modern artificial intelligence-powered robots are impressive in their capabilities, but are still years away from replacing humans as they can't yet adapt to changing conditions, researchers say. Last month, AI robotics company Figure showcased its humanoid robots performing basic tasks, such as cleaning a room, but a series of robots working for nine days straight sorting packages sparked conversation about how soon robots could replace jobs. Welcome to Day 9 of our humanoid livestream: 191 consecutive hours and 238,000 packages. Oliver Obst, an associate professor of robotics at the Australia-based University of New South Wales, told Cointelegraph that repetitive jobs such as physical work in structured environments are currently most at risk of being replaced by robots, while administrative and document-processing tasks could be replaced by AI. There has been growing concern that AI and robots will replace people in jobs as technology advances. A report in May from workforce consulting firm Challenger, Gray and Christmas found that US companies have laid off an estimated 49,135 people in 2026 due to AI. However, Obst said that humanoid robots are unlikely to see a mass rollout soon because they don't appear to be more efficient or less error-prone than current robotic manufacturing methods. "Even in relatively structured settings, they still face problems with reliability, speed, safety, cost, and recovery from unexpected situations," he said. "The harder the environment is to control, the harder the robotics problem becomes. Most human jobs involve more variation and more judgment than the package-sorting demonstration." "I would not say we are at the point of mass replacement by humanoid robots. We are much closer to the selective automation of some tasks.
AI software is moving faster and is already affecting some forms of information work, but physical robots still have a much harder problem to solve." In another video in May, a human worker managed to sort more packages than a team of Figure's robots, which swapped out when needing a recharge. Figure CEO Brett Adcock said it would be the last time "a human will ever win." Congrats to Aime!! He said his left forearm is basically broken. Final scores: F.03: 12,732 packages (2.83 seconds/package) - Aime: 12,924 packages (2.79 seconds/package). This is the last time a human will ever win. Markus Levin, co-founder of decentralized data network XYO, said AI models and automation software can perform repetitive tasks with far greater consistency and endurance than humans; however, robots still require charging, maintenance and supervision. A report in September from the International Federation of Robotics found that global demand for factory robots has doubled over the last decade, with warehouses and logistics among the fastest-growing areas of adoption. "I believe broad human replacement is still likely years away," Levin added. "Reliability, safety, regulation, infrastructure costs, and trust remain major barriers to full-scale deployment across society. The challenge is no longer simply making machines capable of acting but ensuring they can operate safely and reliably as they take on greater autonomy." Dr Francisco Cruz Naranjo, a senior lecturer at the University of New South Wales with a PhD in robotics, said the efficiency of robots compared to people depends heavily on the activity and the environment. "Robots are much better at repetitive tasks without the need for constant pauses, as showcased in the Figure livestream. However, in highly dynamic environments, robots still struggle to quickly adapt to changing conditions," he said. "Humans, in this case, are much better.
This is precisely why robots at the moment are highly efficient in controlled environments, such as factories, but they have not yet succeeded widely in home settings." Naranjo said repetitive jobs performed in a less static setting are at risk of being replaced by robots, but it will depend on how quickly research advances and how quickly society adapts in areas like making spaces robot-friendly, which is likely years away. Naranjo and Obst said that a mass rollout of robots in the workforce could be of some benefit, such as improving work-life balance, increasing the workforce in areas with shortages, and addressing dangerous environments that are too risky for humans. "The social question is harder. If robots make dangerous work cheaper in human terms, that can be good. But it can also have unintended consequences. For example, keeping humans out of harm's way in military operations may save lives, but it could also lower the perceived cost of conflict," Obst said. "Hypothetically, if we became very successful at automating almost all work, then society would need to rethink economies that are currently built around individual wages and employment."
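
The package counts and paces quoted in the posts above can be cross-checked with simple arithmetic. The following is a minimal sketch and not part of the original article: it uses only the numbers quoted in the text (12,732 and 12,924 packages, 2.83 and 2.79 seconds per package, 191 hours, 238,000 packages), and the function names are hypothetical helpers introduced for illustration.

```python
def implied_hours(packages: int, seconds_per_package: float) -> float:
    """Total working time implied by a package count and an average pace."""
    return packages * seconds_per_package / 3600


def implied_pace(packages: int, hours: float) -> float:
    """Average seconds per package implied by a count over a number of hours."""
    return hours * 3600 / packages


# Figures exactly as quoted in the posts; rounding in the source applies.
robot_hours = implied_hours(12_732, 2.83)
human_hours = implied_hours(12_924, 2.79)
livestream_pace = implied_pace(238_000, 191)

print(f"F.03 contest time: {robot_hours:.2f} h")
print(f"Aime contest time: {human_hours:.2f} h")
print(f"Livestream pace: {livestream_pace:.2f} s/package")
```

Under these assumptions, both contest totals work out to roughly ten hours of sorting, and the livestream figures imply a pace of about 2.89 seconds per package, close to the contest pace. The quoted paces are rounded, so the results are approximate.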

Images (10):


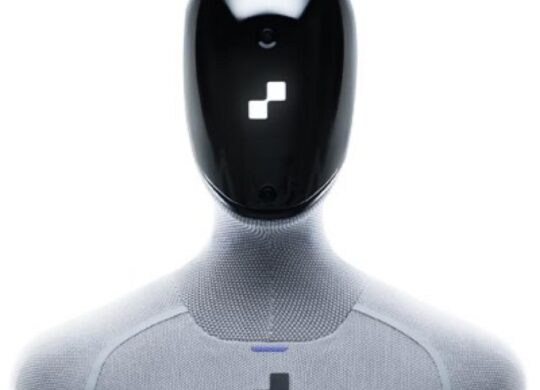

| LCQ4: Development of embodied intelligence technologies | https://www.info.gov.hk/gia/general/202… | 5 | Jun 04, 2026 00:01 | active | |

LCQ4: Development of embodied intelligence technologies

URL: https://www.info.gov.hk/gia/general/202606/03/P2026060300470.htm

Description: Following is a question by Professor the Hon William Wong and a reply by the Secretary for Innovation, Technology and Industry, Professor Sun Dong, in the Legislative Council today...

Content:

Images (5):


| Why Google Gave Up on Boston Dynamics - Geeky Gadgets | https://www.geeky-gadgets.com/why-googl… | 10 | Jun 03, 2026 00:00 | active | |

Why Google Gave Up on Boston Dynamics - Geeky Gadgets

URL: https://www.geeky-gadgets.com/why-google-sold-boston-dynamics/

Description: Discover why Google sold Boston Dynamics in 2017 and how the robotics company finally achieved commercial success under Hyundai.

Content:

7:00 am May 30, 2026 By Julian Horsey

Google’s decision to sell Boston Dynamics in 2017 underscored the tension between research-driven robotics and the demands of commercial viability. As Chromeborne explains, the sale stemmed from a mismatch between Boston Dynamics’ emphasis on experimental advancements, such as the humanoid robot Atlas, and Google’s focus on creating products with clear and immediate market applications. This case illustrates the broader challenge of balancing long-term technological exploration with the pressures of short-term business goals. Explore how Boston Dynamics shifted its priorities under new ownership, including its move toward warehouse automation and logistics. Gain insight into the roles played by SoftBank and Hyundai in shaping the company’s trajectory. Understand the broader implications of integrating advanced robotics into industries that demand both innovation and profitability. Boston Dynamics was established in 1992 by Marc Raibert, a visionary in the field of robotics. His ambition was to create machines capable of mimicking the agility, balance and movement of animals. From its inception, the company concentrated on dynamic locomotion, pushing the boundaries of what robots could achieve. Early projects were heavily research-driven, often funded by organizations like the Defense Advanced Research Projects Agency (DARPA). These initiatives prioritized technological breakthroughs over immediate commercial applications, solidifying Boston Dynamics’ reputation as a leader in innovation. The company’s early work laid the groundwork for its future success. By focusing on solving complex problems in robotic movement, Boston Dynamics developed technologies that would later influence the broader robotics industry. However, this emphasis on research over practicality also posed challenges, particularly when it came to finding real-world applications for their innovations.
Boston Dynamics achieved several key breakthroughs that demonstrated the potential of advanced robotics. These milestones not only showcased the company’s technical expertise but also highlighted the challenges of translating innovation into practical use: While these innovations were new, their lack of immediate, practical applications hindered their commercial potential. This gap between technological achievement and market readiness became a recurring challenge for Boston Dynamics. In 2013, Google acquired Boston Dynamics as part of its broader exploration into robotics and automation. At the time, Google was investing heavily in emerging technologies, aiming to position itself as a leader in the field. However, the partnership quickly revealed a fundamental misalignment of priorities. Google sought to develop robots that could address immediate industrial needs, focusing on market-ready solutions. In contrast, Boston Dynamics remained committed to long-term innovation and experimental prototypes. This divergence in goals created tension between the two companies. Boston Dynamics’ research-driven approach did not align with Google’s commercial ambitions, leading to friction and ultimately the decision to sell the company in 2017. The sale highlighted the challenges of integrating a research-focused organization into a commercially driven enterprise. When SoftBank acquired Boston Dynamics in 2017, the company began to pivot toward practical, real-world applications. This shift was evident in the development of quieter, electric-powered robots like Spot, which was designed to perform tasks in various industries. Spot’s capabilities included: Spot’s versatility and ability to navigate complex environments made it a valuable tool for industries such as construction, energy and manufacturing.
Additionally, Boston Dynamics’ acquisition of Kinema Systems, a company specializing in robotic vision, enhanced its robots’ autonomy and adaptability, further aligning the company with market demands. In 2021, Hyundai acquired Boston Dynamics, marking another significant turning point in the company’s evolution. With Hyundai’s backing, Boston Dynamics intensified its focus on aligning its innovations with industrial needs. This partnership emphasized the development of robots for factory and warehouse automation, areas where robotics could deliver immediate value. Under Hyundai’s ownership, Boston Dynamics expanded the applications of its robots. Spot became a reliable tool for inspection tasks, while Atlas demonstrated potential for performing repetitive labor in controlled environments. This shift toward practical, real-world uses allowed Boston Dynamics to transition from a research-focused organization to a commercially viable enterprise. Hyundai’s support also provided the resources needed to scale production and refine the company’s technologies for broader adoption. Boston Dynamics faced numerous challenges throughout its journey, including: The company addressed these challenges by focusing on autonomy, environmental awareness and electric-powered designs. By adapting its technologies to meet market demands, Boston Dynamics successfully bridged the gap between innovation and practicality. This approach not only ensured the company’s survival but also solidified its position as a leader in the robotics industry. Today, Boston Dynamics is recognized as a pioneer in robotics, producing machines with clear, defined purposes. Spot has become a staple in industrial inspections, while Atlas continues to evolve as a platform for repetitive tasks in controlled environments. The company’s transformation from a research-driven organization to a commercially focused business underscores its ability to adapt and thrive in a competitive and rapidly evolving industry. 
As robotics technology continues to advance, Boston Dynamics remains at the forefront, shaping the future of automation and dynamic locomotion. Its journey serves as a powerful example of how innovation, when balanced with practicality, can drive progress and redefine what is possible in the field of robotics. Media Credit: Chromeborne

Images (10):


| Remember the Figure 03 robot? It now works … | https://www.lebigdata.fr/vous-vous-souv… | 10 | Jun 02, 2026 16:00 | active | |

Remember the Figure 03 robot? It now works 40 hours straight

Description: Figure AI's Figure 03 robot has just completed more than 40 hours of autonomous package sorting with no breaks or human assistance.

Content:

Tinah F. Published May 15, 2026. Updated May 19, 2026.

Figure AI's Figure 03 robot has just pulled off a demonstration that has the robotics world talking. It completed more than 40 hours of autonomous package sorting with no breaks or human assistance. What is impressive here is not just that a humanoid robot can move packages without dropping everything after three minutes. The real story is autonomy. The Figure 03 robot reportedly worked more than 40 hours straight without interruption, without human assistance, and without an impromptu break at a nonexistent coffee machine. Figure AI thus wants to prove the endurance of its machines. Behind this demonstration is, above all, Helix-02, the new neural network developed by Figure AI. It is what drives the Figure 03 robots' capabilities during these long work sessions. The point the company highlights is not only the precision of the movements. Figure mainly stresses continuity of service. The robots can detect certain errors and automatically resume an interrupted task. They are also reportedly able to handle battery replacement by having several units work in relay. ⚡️ INSIGHT: Figure says its robot crossed 30 hours of continuous autonomous work with no downtime. In other words, the goal is no longer simply to build a robot that can move a box. The real challenge now is to keep an autonomous system running for dozens of hours in a real industrial environment. And that is precisely where things get interesting. Between a viral video on X and a supply chain that runs day and night, there is a technical and financial gulf. Figure AI also says it has shipped 350 robots from its BotQ factory in Sunnyvale, at a rate of about one robot produced per hour.
These figures mainly show the company's industrial ambition. Figure AI is starting to carve out a place for itself in robotics. Founded only in 2022 by Brett Adcock, the company has quickly established itself in a race dominated by giants such as Tesla and Boston Dynamics. Figure AI's main weapon is, of course, the Figure 03 robot. This third-generation humanoid represents a huge step forward from the brand's earlier prototypes. Many humanoid robots are still limited to tightly controlled demonstrations. Figure 03, for its part, mainly seeks to prove that it can operate in real environments. And the company keeps staging demonstrations to show it. In recent months, Figure AI has notably released a video showing the robot tidying a bedroom, moving objects, and organizing the space with surprisingly fluid movements.

Images (10):


| Two humanoids, a duvet and no central control: the new … | https://www.dday.it/redazione/57354/due… | 4 | Jun 02, 2026 16:00 | active | |

Two humanoids, a duvet and no central control: the new demo of Figure AI's robots | DDay.it

Description: The robots tidy the room, moving objects and, above all, remaking the bed without central control: they watch each other's movements and decide what to do

Content:

Figure has released a new video starring a hidden "actor": Helix 02, the control system based on a Vision-Language-Action policy that drives its humanoid robots. In the footage, two F.03 robots tidy a bedroom in under two minutes. The scene that drew the most attention is the bed: the two humanoids stand on opposite sides, lift and spread the duvet, smooth out the creases, and work on the same deformable object. According to Figure, the two robots use a single learned network capable of translating images and instructions into movements. There is reportedly no shared planner, no message exchange, and no central control. Each humanoid observes the room through its own cameras and infers the other's intentions from its movements. The sequence also includes other actions. Through the limbs of the two robots, Helix 02 opens doors, hangs up a garment, puts headphones away on a stand, closes a book, throws away a piece of trash using the bin's pedal, and pushes a chair under the desk. This requires walking, balance, hands, and continuous reading of the environment. The collaboration around the bed remains the most interesting part. A duvet has no fixed pose; it folds, slips, and changes shape after every pull. When one robot changes the tension of the fabric, the other's task changes at the same instant. As always in these cases, however, the demo shows one specific room and does not clarify how many attempts were needed, or how much the outcome changes with objects arranged differently or with furnishings not present in the training data. In essence, it does not show whether F.03 robots with Helix 02 could cope in any other environment.

Images (4):


| Figure AI's Video Announces Accelerated Production of the … | https://www.mooseek.com/v/il-video-di-f… | 10 | Jun 02, 2026 16:00 | active | |

Figure AI's Video Announces Accelerated Production of the F.03 Humanoid Robot at BotQ | Mooseek

Description: Figure AI publishes a crucial update on the production ramp of its third-generation humanoid robot, the F.03, at the BotQ plant. The video,

Content:

Figure AI has published a crucial update on the production ramp of its third-generation humanoid robot, the F.03, at the BotQ plant. The video, uploaded on April 29, 2026, runs about 2 minutes and 53 seconds and shows the impressive industrial milestones the company has reached. In just 120 days, Figure scaled production 24-fold, from 1 robot per day to 1 robot per hour. This exponential growth demonstrates the maturity of the manufacturing process, which has moved from hand-built prototypes to an automated, scalable production line. Figure AI, founded in 2022 by Brett Adcock and based in Sunnyvale, California, positions itself as a leader in AI robotics, aiming to create commercially viable autonomous humanoids. BotQ is Figure's dedicated manufacturing facility, designed for high volumes without depending on external suppliers, ensuring control over quality and rapid iteration. This week, the company will produce 55 F.03 robots, confirming the goal of 12,000 units per year on the first line, with a target of 100,000 robots in four years. The transition to processes such as die casting, injection molding, and stamping has drastically reduced unit costs, making the F.03 ready for global scale. The F.03 was redesigned from scratch for Helix, Figure's vision-language-action model, integrating perception, reasoning, and control into a single generalist brain. With a softer, textured design, dexterous hands with 20 degrees of freedom, and a battery integrated into the torso with energy density increased by 94% over previous generations, the robot is optimized for household tasks such as washing dishes or cleaning. Figure aims to bring these humanoids into every home, expanding human capabilities through advanced AI and mass production. Using high-speed data offload, fleets of F.03 robots can upload terabytes for continuous learning, revolutionizing factories and homes.
The video is not just an announcement but a showcase of Figure's progress toward series production, highlighting its commitment to in-house supply chains and hardware innovation. Released at a moment of manufacturing acceleration, it strengthens Figure's position in the competitive robotics landscape, with a focus on safety, cost-effectiveness, and real autonomy. This milestone at BotQ signals that humanoid robots are no longer lab prototypes but products ready for mass deployment. Figure AI is transforming the robotics industry, aiming for versatile humanoids for homes and factories within the next few years.

Images (10):

|

|||||

| OneRobotics: the Chinese giant of AI home robots that … | https://www.smartdomotica.it/news/onero… | 2 | Jun 02, 2026 16:00 | active | |

OneRobotics: the Chinese giant of AI home robots that challenges Figure AI Description: OneRobotics, the Chinese company behind SwitchBot, has become the world's first publicly listed company focused on AI home robots, with a strategy built on real-world data and a single intelligence for robots of different forms. Content:

When Figure AI published the Helix-02 Bedroom Tidy video, showing two Figure 03 humanoid robots making the bed and tidying clothes in a bedroom, the robotics industry took notice. But while everyone was looking west, on the other side of the world a Chinese player was quietly emerging, one capable of doing much more than shooting demo videos: OneRobotics. Founded in 2015 in Shenzhen by two graduates of the Harbin Institute of Technology, the company is already known to millions of people for its SwitchBot line of home-automation devices, including smart curtain openers and connected locks. In recent years, however, it has made a significant leap, expanding into AI-based home robotics with a proprietary architecture called "One Brain, Multiple Embodiments", at the heart of which is the OneModel AI model, which can share capabilities and keep evolving across different types of robots. The numbers point to solid growth: core revenue rose from 275 million yuan in 2022 to 610 million yuan in 2024, with Japan accounting for 68% of revenue in the first half of 2025. It is no coincidence that Japan's public broadcaster NHK devoted a special interview to the company shortly after the Figure AI video was published, focusing on the live demonstration of the onero H1 robot, which, in a real home environment, carried out the full sequence of recognizing garments, picking them up, and loading them into the washing machine drum, with no staged set. On December 30, 2025, OneRobotics listed on the Main Board of the Hong Kong Stock Exchange under the code 06600.HK, becoming the world's first publicly listed company focused on home robots with embodied artificial intelligence.
The IPO raised about 1.64 billion Hong Kong dollars (about 188 million euros), and by early January 2026 the market capitalization had already exceeded HKD 23 billion, or about 2.7 billion euros. The "one mind, many forms" approach translates into three product lines covering three core household scenarios. The companion robot Kata Friends targets interaction and intelligent companionship, Acemate addresses sports and wellness, and onero H1 handles household services proper. All share the same artificial brain, with the advantage that every piece of data collected in one context enriches the whole platform. Acemate has already become a case of its own: billed as the world's first AI tennis robot, it combines computer vision, highly dynamic interaction, and real-time decision-making to move autonomously and return shots. This innovation earned it a place on Time's list of the best inventions of 2025, the same recognition that also put Figure 03 in the spotlight. It is a coincidence that says a lot about where the industry is heading. On the data-infrastructure front, in early 2026 OneRobotics won a public tender in Shenzhen to build an "Embodied Intelligence Data Full-Chain Service Center", a contract worth 44.95 million yuan (about 5.6 million euros). The project involves deploying mobile dual-arm onero H1 units, UMI data-acquisition terminals, and wearable teleoperation systems, with application scenarios ranging from elderly care to retail and scientific research.
This step marks the company's evolution from a maker of commercial robots into a provider of infrastructure for data collection and training, closing the loop between physical bodies, real-world scenarios, and continuous model improvement. With products distributed in more than 90 countries and regions and more than 3.6 million households already served worldwide, OneRobotics is not starting from scratch in terms of commercial reach. And this may be its hardest competitive advantage to replicate: not the ability to shoot spectacular videos in controlled environments, but the ability to accumulate real data from real homes, where the variables are endless and the complexity cannot be simulated in a lab.

Images (2):

|

|||||

| Figure AI unveils Helix 02: the humanoid robots that collaborate … | https://www.mrw.it/news/figure-ai-prese… | 10 | Jun 02, 2026 16:00 | active | |

Figure AI unveils Helix 02: the humanoid robots that collaborate without central direction - MRW.it Description: Figure AI has revealed Helix 02, an innovative system that guides humanoid robots in autonomous collaboration. Content:

Figure AI recently unveiled an advanced control system called Helix 02, designed to run its humanoid robots. The system can guide the robots without a centralized planner, enabling smooth, autonomous collaboration, as shown in a video that caught public attention. In the video, two F.03 robots are filmed tidying a bedroom in under two minutes. The most striking scene is the one in which the robots work together to arrange a duvet. They position themselves on opposite sides of the bed, then lift and spread the duvet, smoothing out creases and adapting to each other's movements. The sequence highlights not only their ability to work together but also how effectively Helix 02 translates visual information into coordinated movement. A distinctive feature of Helix 02 is the absence of central direction or any exchange of messages between the robots. Each uses its own cameras to observe the environment and infer the other's intentions from its movements. This decentralized approach lets the robots adapt quickly to changes in the environment, making them more versatile and responsive. Helix 02 includes a "muscle memory" that lets the robots learn and improve their skills over time. In the video, the robots not only arrange the duvet but also perform a series of other actions, such as opening doors, hanging up clothes, and throwing away trash. The demo nevertheless raises questions about how versatile these robots are. It is not clear how many attempts were needed to achieve these results, or how the robots would behave in environments with furniture or objects not present during training. The technology developed by Figure AI represents a significant step toward the future of robotics.
The ability to work autonomously and collaboratively could have applications in various sectors, from home assistance to logistics. However, it is essential to keep exploring the possibilities and limits of these systems in order to fully understand their potential and the challenges ahead.

Images (10):

|

|||||

| Figure AI now produces one humanoid robot per hour at … | https://kulturegeek.fr/news-351646/figu… | 10 | Jun 02, 2026 16:00 | active | |

Figure AI now produces one humanoid robot per hour at its BotQ factory - KultureGeek URL: https://kulturegeek.fr/news-351646/figure-ai-produit-desormais-robot-humanoide-heure-usine-botq Description: Clearly, the era of mass production has arrived for humanoid robots. The American firm Figure AI reaches an industrial milestone Content:

Clearly, the era of mass production has arrived for humanoid robots. The American firm Figure AI has reached a major industrial milestone, announcing that it has increased its production rate 24-fold in less than four months, going from one robot per day to one robot per hour for its Figure 03 model. This ramp-up relies on BotQ, the factory Figure AI designed to manufacture its humanoids at larger scale. The company says it has already produced more than 350 Figure 03 robots and demonstrated the cycle time its production targets require: "We have successfully demonstrated the cycle time of one robot per hour needed for our Figure 03 production goals." To achieve this, Figure AI set up dedicated lines for the robot's critical modules, driven by in-house production management software. More than 150 connected workstations are reportedly now in use at the facility. Beyond mass production, Figure AI also has a robot training unit at its headquarters, where robots can be seen wandering the corridors or rehearsing precise movements, giving the impression of stepping into a real-life version of the series Westworld. Producing hundreds of robots lets Figure AI accumulate more field data, and faster, to improve Helix, its artificial intelligence system for controlling everyday physical tasks. The company also says it has produced more than 9,000 actuators, with more than ten component variants. Figure AI hopes this will cut costs, make the machines more reliable, and prepare their deployment in businesses before it considers sales to consumers.

Images (10):

|

|||||

| Robot vs. human in the warehouse: who won the challenge … | https://www.libero.it/tecnologia/robot-… | 10 | Jun 02, 2026 16:00 | active | |

Robot vs. human in the warehouse: who won Figure AI's challenge? URL: https://www.libero.it/tecnologia/robot-figure-ai-vs-uomo-magazzino-sfida-117081 Description: Figure AI's F.03 humanoid robot took on a human worker in a warehouse for 10 consecutive hours. The final result is surprising: find out what happened. Content:

Figure AI pitted a humanoid robot against a real worker in a 10-hour challenge. The final result shows how much the gap has narrowed. Published: May 18, 2026. By Biagio Petronaci, Tech Editor. For years, the story of industrial automation came with the same assumption: robots would quickly overtake humans in repetitive work. The trial organized by Figure AI paints a more complex picture. After ten consecutive hours of warehouse work, the F.03 humanoid robot failed to beat a human employee in a package-sorting contest. The final margin was small, but enough to establish one concrete fact: at least for now, humans keep an edge in real physical tasks. The challenge was live-streamed and drew a lot of attention online, partly because it is one of the clearest public tests of where humanoid robotics stands in logistics. The task was simple to describe but hard to sustain for hours without slowing down. The robot and the human contestant had to locate a package, recognize the barcode, pick the package up, and place it correctly on a conveyor belt with the barcode facing down. On one side was Figure AI's F.03 robot, on the other Aime, a company intern. Both worked for ten consecutive hours following the same operating routine. At the end of the trial, the human worker had processed 12,924 packages against the 12,732 completed by the robot. The average difference per operation was also minimal: 2.79 seconds per package versus 2.83. This is the most interesting figure. The robot is no longer a machine far from human performance, but a system close enough to make the comparison credible even in a real operating context.
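The per-package times quoted above can be cross-checked against the package counts. The sketch below is only a sanity check, not Figure AI's methodology: it assumes both averages were computed over the full ten-hour window (breaks included), which the article does not state, and the function and variable names are illustrative.

```python
# Sanity check of the per-package times quoted in the article.
# Assumption (not stated in the source): both averages are taken over
# the full 10-hour wall-clock window, including the human's breaks.

WINDOW_SECONDS = 10 * 60 * 60  # ten hours expressed in seconds


def seconds_per_package(packages: int, window_seconds: int = WINDOW_SECONDS) -> float:
    """Average wall-clock seconds per package over the whole window."""
    return window_seconds / packages


human_packages = 12_924  # count reported for the intern
robot_packages = 12_732  # count reported for the F.03 robot

human_pace = seconds_per_package(human_packages)
robot_pace = seconds_per_package(robot_packages)
gap = human_packages - robot_packages
gap_percent = 100 * gap / human_packages

print(f"human: {human_pace:.2f} s/package")
print(f"robot: {robot_pace:.2f} s/package")
print(f"gap: {gap} packages ({gap_percent:.1f}% of the human total)")
```

Under that assumption the computed averages round to the 2.79 and 2.83 seconds reported, and the gap comes to 192 packages. If the window really does include the human's breaks, the human's pace while actually working would have been somewhat faster than the average shown.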
During the competition, Aime took the lunch break and rest periods required by labor regulations. The robot, by contrast, kept operating without interruption. According to CEO Brett Adcock, the employee ended the day with severe physical fatigue and forearm problems after hours of repetitive movements. This changes the meaning of the final result. Over a single day the human advantage held, but endurance over the long run remains one of the main strengths of machines. Figure AI claims that its systems can work much longer shifts without evident physical decline. In tests published by the company, the robots reportedly kept sorting packages beyond the duration of the public challenge. Logistics remains one of the main targets for humanoid robotics precisely because warehouse work is repetitive. Figure AI aims to show that its robots can work alongside humans, but according to some experts the technology is not yet ready for truly broad and stable industrial adoption. The challenge highlights an increasingly evident paradox. While many companies maintain that artificial intelligence will rapidly automate office work, humanoid robots still cannot outperform humans in real physical tasks. Despite advances in robotics, the human advantage in concrete operating contexts remains tangible. At the same time, the F.03's result is significant precisely because of how narrow the gap with a human worker has become.
FAQ Who won the challenge between the F.03 and the human? The intern Aime sorted 12,924 packages against the F.03 robot's 12,732, winning the day. What was the task? Locate the package, read the barcode, pick the package up, and place it with the barcode facing down. What was the average difference per package? The average difference per operation was minimal: 2.79 s for the human versus 2.83 s for the robot. Did the robot take breaks during the trial? No, the robot operated without interruption, while the human took the breaks required by regulations. Why are warehouses suited to humanoid robots? Logistics involves repetitive tasks and long shifts, where the endurance and continuity of machines are an advantage.

Images (10):

|

|||||

| Figure AI has started producing 1 humanoid robot per hour | … | https://www.donanimhaber.com/figure-ai-… | 10 | Jun 02, 2026 16:00 | active | |

Figure AI has started producing 1 humanoid robot per hour | DonanımHaber URL: https://www.donanimhaber.com/figure-ai-saat-basi-1-insansi-robot-uretmeye-basladi--205143 Description: Figure increased humanoid robot production 24-fold in 4 months, reaching 1 unit per hour. While yield exceeded 80 percent, the new Helix AI model further improved the robots' visual perception. Content:

US-based robotics company Figure AI has raised the production rate of its humanoid robot model Figure 03 at the BotQ manufacturing facility from one unit per day to one unit per hour, a 24-fold increase in just four months. This development makes clear that the company has moved from the prototype stage to series production. The increase was made possible by the company's custom software infrastructure and a production line of more than 150 interconnected workstations. Figure said that with this scaling process it has delivered more than 350 robots to date. The company's goal is to reach an annual production capacity of 12,000 robots and to raise that capacity further. The company also said it will produce 55 humanoid robots this week alone. To improve production yield, Figure tightened quality standards in its supply chain and added more than 50 intermediate checkpoints to the production process. As a result, the first-pass success rate on the final production line rose above 80 percent. Results were notable for critical components in particular: yield in battery production reached 99.3 percent, and more than 9,000 actuators were produced in total. The company has also completed shipment of more than 500 robots. Each robot produced goes through extensive post-production testing. More than 80 functional tests are applied to prevent early failures, including physical endurance scenarios such as squatting and running. As the company's robot fleet grows, more data is collected at scale for Helix, the AI model used in the robots, which directly helps improve the robots' autonomous capabilities.
Figure has also developed over-the-air (OTA) update, service, and fleet management systems to manage its robot fleet. With these systems, robots in the field can be continuously monitored and updated, and the feedback gathered can be fed directly into development. Alongside the manufacturing progress, the company announced a significant update to its AI model. The new version of the model called Helix System 0 (S0) gives robots the ability to move by perceiving their surroundings. The previous version relied only on proprioceptive data, which senses the robot's own joint movements and position. That limited mobility in complex environments such as stairs or uneven ground. With the update, robots can now build a three-dimensional map of their surroundings using visual data from stereo cameras, so the robot can both "feel" and "see" its environment. The system is said to have been trained end to end with reinforcement learning in simulation, across varied and randomized terrain conditions. One of the most striking points is that these learned behaviors could be transferred directly to the real world without additional calibration. As a result, Figure 03 robots can now climb stairs, move steadily on different surfaces, and perform stably under changing lighting conditions.

Images (10):

|

|||||

| Journal of Medical Internet Research - Human and Robot Assistance … | https://www.jmir.org/2026/1/e94738 | 10 | Jun 02, 2026 00:00 | active | |

Journal of Medical Internet Research - Human and Robot Assistance for Cognitive Load in Younger and Older Adults: Multimodal Within-Subject Experimental StudyURL: https://www.jmir.org/2026/1/e94738 Description: Background: Maintaining cognitive efficiency and independence is a central goal of healthy aging. Socially assistive robots (SARs) are increasingly proposed as scalable digital health solutions to support daily activities in older adults and to facilitate aging-in-place. However, concerns remain regarding whether robot-mediated assistance reduces or inadvertently increases cognitive load, potentially undermining usability, user acceptance, and long-term real-world adoption, particularly in aging populations. Objective: This study aimed to examine how robot-assisted (human-robot interaction [HRI]) and human-assisted (human-human interaction [HHI]) support influences cognitive load during task performance in younger and older adults. A multimodal assessment framework integrating behavioral, subjective, and physiological measures was used to identify age-related differences in cognitive effort and stress associated with different forms of assistance. Methods: A total of 60 healthy adults (30 younger adults: mean age 34.8, SD 10.1 years; and 30 older adults: mean age 72.3, SD 5.5 years) completed a modified Trail Making Test under 7 within-subject conditions: independent performance (baseline), 3 robot-assisted conditions, and 3 human-assisted conditions, each corresponding to low, medium, and high cognitive load levels. Performance accuracy and completion time were recorded as behavioral indicators. Perceived cognitive load was assessed using the National Aeronautics and Space Administration Task Load Index, and physiological stress was evaluated via pre- and postcondition salivary cortisol concentrations. Linear mixed-effects models were applied to examine main effects and interactions of age group, assistance type, cognitive load level, and time. 
Results: Significant interactions between age group and assistance type were observed for accuracy (=6.50; =.01) and perceived cognitive load (=4.58; =.03). Older adults demonstrated lower accuracy and higher perceived cognitive load during robot-assisted conditions compared with human-assisted conditions, whereas no such differences were observed in younger adults. Across age groups, human assistance improved performance at low and medium cognitive load levels. Physiological analysis revealed a significant age × assistance × time interaction (=5.16; =.02), with older adults showing increased posttask cortisol concentrations during robot-assisted interaction, indicating higher physiological stress. Conclusions: While both human and robotic assistance enhanced task performance relative to independent completion, the type of support critically shaped cognitive load responses in older adults. Robot-assisted interaction was associated with increased behavioral errors, higher perceived workload, and elevated physiological stress, suggesting that current SAR implementations may impose additional extraneous cognitive load in older users. These findings highlight the importance of designing adaptive, age-sensitive digital assistive systems that minimize cognitive burden through simplified interaction, responsive pacing, and multimodal support. Multimodal cognitive load assessment provides a valuable framework for optimizing the usability and effectiveness of assistive digital health technologies for aging populations. Content: