AUTOMATION HISTORY

1191

Total Articles Scraped

2288

Total Images Extracted

Scraped Articles

New Automation| Action | Title | URL | Images | Scraped At | Status |

|---|---|---|---|---|---|

| How the Voice of Service Robots Can Convey Social Support | https://idw-online.de/de/news870795 | 10 | May 18, 2026 15:58 | active | |

How the Voice of Service Robots Can Convey Social SupportURL: https://idw-online.de/de/news870795 Content:

Nachrichten, Termine, Experten d Instanz: Teilen Teilen: 13.05.2026 09:36 It’s not just what is said, but how it is said: A study from the University of Augsburg examines how the voice of service robots influences customer perceptions after ser-vice failures When service robots make mistakes, it is not only important whether customers receive compensation. The robot’s voice can also shape how the situation is perceived. A human-like voice can make customers feel more supported after a service failure. This is the finding of a study by the Chair of Value Based Marketing at the University of Augsburg, published in the Journal of Business Research. The findings are relevant for companies that use service robots and similar AI-based systems. Service robots are increasingly used in service settings, such as hotels, restaurants, and airports. They can provide information, serve food, or perform other basic service tasks. In doing so, they can help address challenges related to labor shortages in many industries. However, robots also make mistakes: they may misunderstand customers, bring the wrong order, or fail to respond appropriately to a problem. This raises the question of how service robots should be designed and how they should respond in such situations. Voice as Social Support It is hardly surprising that financial compensation, such as discounts, can be helpful after service failures. However, the Augsburg study shows that the voice of a service robot can also play an important role. “When a service robot makes a mistake, customers are not only concerned with the factual correction of the error,” explains Maximilian Bruder, one of the authors of the study. “They also perceive the extent to which they feel supported and taken seriously in the situation.” The study focuses on the concept of social support. This concept has long been established in psychology, but has received considerably less attention in marketing research. The study shows that a human-like voice can increase perceived social support. Customers then experience the robot’s response as more helpful, caring, and supportive. This, in turn, has a positive effect on satisfaction with the service robot and on attitudes toward the company. This effect is particularly relevant when no financial compensation is offered. When customers receive a discount, this material form of recovery tends to dominate their evaluation of the situation. However, when no such compensation is provided, the way the robot communicates becomes more important. In such cases, a human-like voice can help customers perceive the interaction as more supportive. Not Every Human-Like Feature Has the Same Effect Another finding of the study is that not every form of human-likeness has the same effect. While a human-like voice produced positive effects, a more human-like appearance of the robot did not lead to comparable outcomes. “A voice not only conveys information, but also social cues,” explains Michael Paul, Chair of Value Based Marketing at the University of Augsburg. “It can influence whether a response is perceived as mechanical and distant or as supportive and caring.” For companies, this means that the design of service robots should not be reduced to their appearance or technical functionality. Especially in difficult service situations, voice can be an important design element. When financial compensation cannot or should not be offered, a human-like voice can help customers feel more supported and thereby improve their overall perception of the service interaction. Five Studies Show: Voice Makes a Difference The published article reports a total of five experimental studies. The studies examined service failures in a restaurant context in which a service robot brings the wrong order. The authors varied, among other things, whether financial compensation was offered and whether the robot spoke with a human-like or artificial-sounding voice. Additional studies examined whether comparable effects also emerge from a more human-like robot appearance. The results show that voice can convey social support, whereas a more human-like appearance did not produce comparable effects. https://www.uni-augsburg.de/en/fakultaet/wiwi/prof/bwl/paul/ https://www.sciencedirect.com/science/article/pii/S014829632600295X Merkmale dieser Pressemitteilung: Journalisten Psychologie, Wirtschaft überregional Forschungsergebnisse Englisch Suche in Pressemitteilungen Suche in Terminen Anfangsdatum Enddatum Sie können Suchbegriffe mit und, oder und / oder nicht verknüpfen, z. B. Philo nicht logie. Verknüpfungen können Sie mit Klammern voneinander trennen, z. B. (Philo nicht logie) oder (Psycho und logie). Zusammenhängende Worte werden als Wortgruppe gesucht, wenn Sie sie in Anführungsstriche setzen, z. B. „Bundesrepublik Deutschland“. Die Erweiterte Suche können Sie auch nutzen, ohne Suchbegriffe einzugeben. Sie orientiert sich dann an den Kriterien, die Sie ausgewählt haben (z. B. nach dem Land oder dem Sachgebiet). Haben Sie in einer Kategorie kein Kriterium ausgewählt, wird die gesamte Kategorie durchsucht (z.B. alle Sachgebiete oder alle Länder).

Images (10):

|

|||||

| Fanuc partners with Google to bring Gemini AI and Intrinsic … | https://thenextweb.com/news/fanuc-googl… | 10 | May 18, 2026 15:57 | active | |

Fanuc partners with Google to bring Gemini AI and Intrinsic platform to 1.1 million industrial robotsURL: https://thenextweb.com/news/fanuc-google-physical-ai-factory-robots Description: Fanuc shares hit a record high after partnering with Google to integrate Gemini Enterprise and the Intrinsic robotics platform into its industrial robot systems worldwide. Content:

TL;DRFanuc, the world’s largest industrial robot manufacturer with 1.1 million robots installed globally, is integrating Google Cloud’s Gemini Enterprise and Google’s Intrinsic robotics platform into its systems, replicating Google’s Android strategy for factory robots and sending Fanuc shares to a record high. Fanuc, the world’s largest industrial robot manufacturer with 1.1 million robots installed globally, is integrating Google Cloud’s Gemini Enterprise and Google’s Intrinsic robotics platform into its systems, replicating Google’s Android strategy for factory robots and sending Fanuc shares to a record high. Fanuc makes more industrial robots than anyone on the planet. Google makes more software platforms than anyone on the planet. On Wednesday, the two companies announced a partnership that merges those positions: Fanuc will integrate Google Cloud’s Gemini Enterprise and Google’s Intrinsic robotics platform into its industrial robot systems, giving the 1.1 million Fanuc robots already installed in factories worldwide the ability to understand human instructions, recognise objects, and coordinate autonomously. Fanuc shares surged 16 per cent to an intraday record of 8,880 yen. The market understood what this means before the press release finished loading. It means Google is doing to factory robots what Android did to phones. The 💜 of EU tech The latest rumblings from the EU tech scene, a story from our wise ol' founder Boris, and some questionable AI art. It's free, every week, in your inbox. Sign up now! In February 2026, Google folded Intrinsic, its robotics software subsidiary, out of the experimental Other Bets division and into the core business. The move was not administrative. It was strategic. Intrinsic had spent years building Flowstate, a web-based platform that lets manufacturers build robotic applications without writing thousands of lines of code. The platform handles motion planning, machine learning integration, and task orchestration across different manufacturers’ hardware. A factory could swap robot arms from Fanuc, Universal Robots, and KUKA while keeping the same Intrinsic-powered software running operations. The analogy is precise. Android does not build phones. It provides the operating system that runs across Samsung, Xiaomi, Motorola, and every other manufacturer’s hardware, giving Google access to billions of users without manufacturing a single handset. Intrinsic does not build robots. It provides the intelligence layer that runs across Fanuc, Universal Robots, and KUKA’s hardware, giving Google access to millions of industrial machines without bending a single piece of metal. The Fanuc partnership is the Samsung moment. Samsung was not the first Android partner, but it was the one with the manufacturing scale and market share to prove that Android could dominate. Fanuc commands roughly 16 to 18 per cent of global robot shipments, holds an estimated 50 to 60 per cent of the global CNC market, and has surpassed 1.1 million robots installed in factories from automotive plants to pharmaceutical packaging lines. When the world’s largest robot manufacturer adopts your software platform, the rest of the industry recalculates. The technical details matter. Fanuc will use Google Cloud’s Gemini Enterprise, the same generative AI platform that powers eight million paid enterprise seats across 2,800 companies, to build industrial robot systems that can process natural language instructions, identify and classify objects in unstructured environments, and autonomously control multiple robots working together. Fanuc will also achieve full compatibility with Intrinsic’s Flowstate development environment, meaning developers can program Fanuc robots through a visual, web-based interface rather than proprietary Fanuc code. Fanuc has already shipped more than 1,000 robots equipped with physical AI capabilities since demonstrating the technology at the International Robot Exhibition in Tokyo last December, and reported that demand was accelerating. The company plans to demonstrate an AI agent system for industrial robots later this month, in which collaborative and non-collaborative robots operate together using natural language instructions. The Google partnership provides the foundation model layer that Fanuc’s existing physical AI stack lacked. Accenture has invested in General Robotics, whose GRID platform deploys AI skills across more than 40 robots from different manufacturers including Fanuc, illustrating that the consultancies are already building businesses around multi-vendor physical AI orchestration. Google’s entry changes the economics. Intrinsic is not a startup seeking Series B funding. It is backed by a company with 4.6 trillion dollars in market capitalisation, 70 billion dollars in annual cloud revenue, and the most widely deployed generative AI models in enterprise computing. Fanuc was born from a division of Fujitsu in 1956 and spun off as an independent company in 1972. It is headquartered in Oshino, a village at the base of Mount Fuji in Yamanashi Prefecture, where the corporate campus is surrounded by forest and painted entirely in the company’s signature yellow. By 1982, Fanuc had captured half of the world CNC market, a position it has never relinquished. The company makes three things: CNC systems that control machine tools, industrial robots that operate in factories, and robomachines that combine both capabilities. It makes them better and in greater volume than any competitor. Fanuc posted record sales of 857 billion yen in fiscal 2025, roughly 5.7 billion dollars, with an operating margin of 21.4 per cent. Robot sales declined 16 per cent during the period due to weaker demand in China, Europe, and the Americas, particularly in automobile-related industries. But the physical AI announcement, and the Google partnership that followed, signal that Fanuc sees the next growth cycle coming not from selling more robot arms but from making the arms it has already sold significantly more capable. Nvidia’s GTC 2026 opened with 30,000 attendees and announcements that could reshape the next two years of AI infrastructure, and physical AI was a dominant theme. Fanuc was already a Nvidia partner, having announced in March 2026 that it would integrate Nvidia’s Isaac simulation frameworks and Omniverse libraries into its physical AI pipeline. The Google partnership adds the foundation model layer on top of Nvidia’s simulation and training infrastructure, giving Fanuc a two-platform AI stack that no competitor can match. The physical AI market is projected to grow from 1.5 billion dollars in 2026 to 15.2 billion dollars by 2032, a compound annual growth rate of 47 per cent. The adjacent industrial robotics intelligence software market is forecast to add 49 billion dollars by 2031 as factories shift from programmed motion to adaptive automation. McKinsey projects the broader market for general purpose robots could reach 370 billion dollars by 2040. The numbers are large and speculative. What is not speculative is that every major robotics company in the world is partnering with at least one foundation model provider, and the companies that partner with the strongest providers will capture the most value. Fanuc’s competitors are moving in the same direction. ABB has partnered with Nvidia for simulation-based physical AI. KUKA and Universal Robots are Intrinsic partners. Yaskawa, whose shares also rose on the Fanuc-Google news, has its own AI integrations. But none of them have Fanuc’s installed base. The 1.1 million robots in factories worldwide represent an upgrade opportunity that no amount of new robot sales can match. If even a fraction of those machines receive AI software upgrades through the Intrinsic platform, Google’s physical AI revenue could scale faster than any hardware competitor’s. Alphabet closed in on Nvidia as the world’s most valuable company after Q1 2026 earnings beat estimates across every division, with Google Cloud growing 63 per cent year on year to cross 20 billion dollars in quarterly revenue. The robotics partnership extends Google Cloud’s value proposition beyond chatbots and enterprise agents into the physical operations that account for the majority of global economic output. Manufacturing, logistics, agriculture, and construction are collectively worth tens of trillions of dollars. The companies that provide the intelligence layer for those industries will capture a proportional share. The partnership has a geopolitical dimension. Fanuc announced in March 2026 a 90 million dollar investment to build an 840,000 square foot robot manufacturing facility in Pontiac, Michigan, creating 225 jobs and expanding its US-based production capacity for physical AI-enabled robots. Since 2019, Fanuc America has invested nearly 300 million dollars in US facilities, expanded its footprint to three million square feet, and created more than 700 jobs. The company is opening the largest robotics and automation skills development centre in the United States at its Auburn Hills, Michigan campus later this year. Japan accounts for roughly 38 per cent of global industrial robot production by value and houses five of the ten largest robot manufacturers. SoftBank, NEC, Sony, and Honda have formed a 2.3 billion dollar physical AI consortium with backing from Japan’s national research agency. The country that dominated industrial robotics for four decades is now positioning itself to dominate the AI layer that will make those robots intelligent. Fanuc’s Google partnership is the commercial expression of that national strategy. Sundar Pichai opened Cloud Next 2026 with a 240 billion dollar backlog, 750 million Gemini users, and a plan to turn every Google product into an agent manager. The Fanuc deal shows that the agent strategy extends beyond screens. The same Gemini models that summarise emails and generate code are now being adapted to control robotic arms that weld car frames and assemble electronics. The intelligence is the same. The output modality is different. Instead of text, it produces physical motion. Nvidia’s Jensen Huang said at GTC 2026 that every industrial company will become a robotics company. Google is betting that every robotics company will become a Google customer. The Fanuc partnership is the most significant evidence yet that the bet is working. A 54-year-old Japanese manufacturer that has spent half a century building the most reliable industrial robots in the world just decided that Google’s software is the intelligence layer its machines need. Kia has confirmed plans to deploy Boston Dynamics Atlas robots in its Georgia factories starting in 2028, and every major automaker is evaluating similar programmes. The demand is real. The question was always which software platform would run the robots. Fanuc’s answer, delivered to a market that added 16 per cent to its share price in a single morning, is that the platform will be built by the same company that runs Android, Search, YouTube, and the fastest-growing cloud business in enterprise computing. The factory floor just became a Google product. Alina Maria Stan builds connections that people actually feel. As co-founder and COO of Tekpon, she turns product intuition into real moment (show all) Alina Maria Stan builds connections that people actually feel. As co-founder and COO of Tekpon, she turns product intuition into real moments of discovery, shaping how teams find and adopt SaaS every day. Since 2020, she has led Tekpon’s brand voice, media strategy, and growth plays with a clear focus on human outcomes behind every metric. Before Tekpon, Alina followed curiosity across industries and countries. She was CEO of King Casino Bonus and led affiliate and brand strategy at Extremoo Media and Fable Media in Denmark, where she learned how to build partnerships that last. Early on, she sharpened her CRM and pricing instincts at K.H. ApS, always asking why customers choose what they choose. Her approach is rooted in more than a decade of international experience and two master’s degrees, one in Sustainable Consumption from the Technical University of Munich and one in Consumer Affairs Management from Aarhus University. Get the most important tech news in your inbox each week. The heart of tech A Tekpon Company Copyright © 2006—2026, Cogneve, INC. Made with <3 in Amsterdam.

Images (10):

|

|||||

| Florida Just Deployed 40 Robot Bunnies to Trick the Worst … | https://www.yahoo.com/news/articles/flo… | 6 | May 18, 2026 15:57 | active | |

Florida Just Deployed 40 Robot Bunnies to Trick the Worst Predator in the EvergladesURL: https://www.yahoo.com/news/articles/florida-just-deployed-40-robot-204617781.html Description: Researchers are now experimenting with animatronic, heat-generating rabbits that (they hope) can lure invasive pythons right to them Content:

Florida’s Burmese python problem isn’t going away anytime soon. The researchers, snake trackers, and other conservationists working to remove the giant snakes will be the first to tell you that eradicating this invasive species isn’t a realistic goal. That hasn’t kept them from trying to manage the problem, though, and scientists are now working on a new and futuristic approach to finding and removing pythons: robotic bunny rabbits. Researchers at the University of Florida are hoping these robo-bunnies can be another tool in the python toolbox, similar to the highly successful scout-snake method that has been honed by wildlife biologists at the Conservancy of Southwest Florida. Only instead of using male pythons (that have been surgically implanted with GPS) to lead them to the females, trackers would use the robots to bring the invasive snakes to them. These are stuffed toys that have been retro-fitted with electrical components so they can be remotely controlled. The robots also have tiny cameras that sense movement and notify researchers, who can then check the video feed to see if a python has been lured in. The University’s experiments with robotic rabbits are ongoing, according to the Palm Beach Post, and the research is being funded by the South Florida Water Management District — the same government agency that pays bounties to licensed snake removal experts and hosts the Florida Python Challenge every year. “Our partners have allowed us to trial these things that may sound a little crazy,” wildlife ecologist and UF project leader Robert McCleery told the Post. “Working in the Everglades for ten years, you get tired of documenting the problem. You want to address it.” McCleery said that in early July, his team launched a pilot study with 40 robotic rabbits spread out across a large area. These high-tech decoys will be monitored as the team continues to learn and build on the experiment. (As one example, McCleery explained that incorporating rabbit scents into the robots could be worth consideration in the future.) Read Next: These Snake Trackers Have Removed More than 20 Tons of Invasive Pythons from Florida… and They’re Just Getting Started The idea of using bunnies as decoys made sense for the team at UF, since rabbits, and specifically marsh rabbits, are some of the favorite prey items for Burmese pythons. Recent studies (including one authored by McCleery) have shown the massive declines in the Everglades’ marsh rabbit populations that can be directly attributed to pythons. “Years ago we were hearing all these claims about the decimation of mesomammals in the Everglades. Well, this researcher thought that sounded far-fetched, so he decided to study it,” says Ian Bartoszek, a wildlife biologist and python tracker based in Naples. “So, he got a bunch of marsh rabbits, put [GPS] collars on them, and then he let them go in the core Everglades area … Within six months, 77 percent of those rabbits were found inside the bellies of pythons. And he was a believer after that.”

Images (6):

|

|||||

| Affordable humanoid robot kit brings advanced robotics in reach | https://interestingengineering.com/ai-r… | 10 | May 18, 2026 15:56 | active | |

Affordable humanoid robot kit brings advanced robotics in reachURL: https://interestingengineering.com/ai-robotics/bipedal-humanoid-robot-kit-asimov Description: Menlo Research launches a DIY humanoid kit for $15K, bringing open-source bipedal robots to independent builders. Content:

From daily news and career tips to monthly insights on AI, sustainability, software, and more—pick what matters and get it in your inbox. Access expert insights, exclusive content, and a deeper dive into engineering and innovation all with fewer ads or a completely ad-free experience. All Rights Reserved, IE Media, Inc. Follow Us On Access expert insights, exclusive content, and a deeper dive into engineering and innovation all with fewer ads or a completely ad-free experience. All Rights Reserved, IE Media, Inc. Modular design links legs, arms, torso, head via universal mounts, enabling easy upgrades and faster robotics testing. The race to build humanoid robots is moving beyond secretive corporate labs and into the hands of independent developers. Singapore-based Menlo Research has unveiled a DIY version of its open-source humanoid robot, Asimov, aimed at hobbyists, researchers, and robotics enthusiasts. Priced at around $15,000—close to the project’s estimated bill-of-materials cost—the kit reflects a broader push to make bipedal robotics more accessible. Recently, a hobbyist created a life-size sci-fi droid replica using 3D printing and AI voice technology, showcasing affordable tools for interactive home robotics and automation. Menlo Research’s open-source humanoid robot kit has a strong focus on modular engineering and simulation-driven robotics development. The 3.93 feet (1.20 meter) tall humanoid weighs around 77 pounds (35 kilograms and features more than 25 degrees of freedom, offering builders a fully customizable research platform rather than a consumer-ready robot. Delivered completely unassembled, the system includes detailed manuals and instructional build videos aimed at developers and advanced hobbyists. A major technical highlight is the robot’s modular architecture. Independent leg, arm, torso, and head sections connect through universal motor mounting fixtures, allowing users to swap or upgrade components without redesigning the entire platform. The approach reduces maintenance complexity while enabling rapid experimentation with new actuators and control systems, reports Humanoids Daily (HD). The humanoid also incorporates a parallel Revolute-Spherical-Universal (RSU) ankle mechanism that provides two degrees of freedom for roll and pitch movement. The design improves torque distribution across the ankle joint and allows the robot to respond more naturally to uneven terrain and ground reaction forces during walking. To simplify locomotion control, Asimov uses passive articulated toes rather than powered toe actuators. These non-actuated joints assist with the transition from stance to push-off, improving traction and balance while reducing computational overhead and mechanical complexity. Most structural components are optimized for Multi Jet Fusion (MJF) 3D printing, enabling the production of strong, lightweight parts without relying on expensive CNC machining processes. This lowers manufacturing costs while making replacement and customization easier for developers, according to reports. Asimov’s software stack is built around a “Processor-in-the-Loop” (PIL) simulation approach that deliberately moves away from idealized robotics models. Instead of assuming clean, perfectly timed sensor data and deterministic physics, the training environment injects realistic operational imperfections to better mirror real-world conditions. This includes simulated CANBus communication delays of up to 9 milliseconds, producing stale or out-of-sync control signals, as well as artificially generated sensor noise through an I2C emulation layer. These disturbances are designed to replicate the unpredictability and latency inherent in physical robot systems. At the learning core, the system uses an Asymmetric Actor-Critic reinforcement learning framework. The “critic” network is granted access to privileged ground-truth simulation data, enabling accurate evaluation of state and reward signals. In contrast, the “actor” operates under constrained conditions, receiving only noisy, delayed sensor inputs similar to what onboard hardware would experience. By training under this mismatch, the policy learns to tolerate uncertainty and partial observability. The result is zero-shot sim-to-real transfer, allowing the robot to walk forwards, backwards, and recover from external pushes directly on hardware without additional tuning or calibration, reports HD. The kit isn’t inexpensive, with a target price of around $15,000. However, Asimov publishes a full bill of materials on its GitHub repository, allowing builders to source components independently and potentially reduce costs. According to Hackaday, while still a significant investment, it is considered far more accessible than earlier humanoid robotics systems that required millions in development funding. Jijo is an automotive and business journalist based in India. Armed with a BA in History (Honors) from St. Stephen's College, Delhi University, and a PG diploma in Journalism from the Indian Institute of Mass Communication, Delhi, he has worked for news agencies, national newspapers, and automotive magazines. In his spare time, he likes to go off-roading, engage in political discourse, travel, and teach languages. Premium Follow

Images (10):

|

|||||

| WIRobotics Raises $68M Series B To Expand Robotics Platform | https://ventureburn.com/wirorobotics-ra… | 10 | May 18, 2026 15:56 | active | |

WIRobotics Raises $68M Series B To Expand Robotics PlatformURL: https://ventureburn.com/wirorobotics-raises-68m-series-b-robotics/ Description: WIRobotics raises $68M Series B to scale wearable robots and humanoid ALLEX platform for global Physical AI expansion and deployment. Content:

By Clinton Key Takeaways WIRobotics raises $68M Series B led by JB Investment for robotics expansion. Funding supports wearable robot WIM and humanoid platform ALLEX development. Company accelerates global rollout and Physical AI partnerships. WIRobotics just raised $68 million in Series B funding, giving a major boost to its robotics development and global growth plans. JB Investment led the round, with backing from InterVest, Hana Ventures, Smilegate Investment, SBVA, NH Investment & Securities, Company K Partners, GU Investment, and FuturePlay. This comes right on the heels of their Series A from March 2024. The new funds are going to help the company grow its operations and ramp up product development, with a focus on wearable robotics and humanoid systems. Everything they build relies on real human movement data—that’s the heart of their entire strategy. WIRobotics only started in 2021, but they’ve already launched WIM, their wearable walking-assist robot. It’s picked up solid traction internationally. People are using WIM in Europe, China, Türkiye, and Japan. Now, WIRobotics is stretching both their hardware and software to keep up with this broader reach. This Series B gives them plenty of room to shift from just developing new ideas to actually rolling them out at scale. The big goal? To lock in their spot as a leader in both assistive robotics and Physical AI systems. WIRobotics has built its foundation through its wearable robotics system WIM. The device supports walking assistance using human movement data. It is designed to enhance mobility and improve physical capability. The system has now surpassed 3,000 cumulative units sold globally. The company has also expanded its subscription-based software services. Its WIM Premium platform adds a recurring revenue layer to its hardware business. This combination supports long-term commercial stability. It also strengthens real-world data collection for future robotics development. WIM has shown strong international growth across key markets. The system is now deployed in healthcare and mobility environments worldwide. Revenue has grown consistently over recent years. It rose from KRW 560 million in 2023 to KRW 2.79 billion in 2025. The company’s momentum reflects increasing demand for assistive robotics. WIM has also received CES Innovation Awards for three consecutive years. This highlights continued recognition of its technical capabilities and product design. WIRobotics is now scaling its commercial strategy further. It is expanding retail partnerships and experience centres in South Korea. It is also building a North American presence in California. These moves support wider global distribution and adoption. The company is now focusing heavily on humanoid robotics through its ALLEX platform. ALLEX is designed to replicate and extend human movement intelligence. It is built on years of wearable robotics data and real-world usage insights. At CES, ALLEX attracted interest from major global technology firms. Companies including NVIDIA, Meta, and Amazon engaged in discussions around potential collaboration. These interactions signal early commercial momentum for the platform. They also highlight growing industry interest in Physical AI systems. WIRobotics has been selected for NVIDIA’s Physical AI Fellowship. This programme supports advanced robotics and AI development. The selection strengthens the company’s position in the global robotics ecosystem. It also supports technical validation of its humanoid systems. The company is also working with AWS and NVIDIA on joint research initiatives. These collaborations focus on Physical AI and humanoid robotics validation. WIRobotics is conducting proof-of-concept projects with global institutions and automotive partners. These efforts support real-world deployment testing. The humanoid ALLEX platform is now entering a more advanced development stage. WIRobotics plans to launch a research-focused version later this year. It will be supplied to research institutions and industry partners. This will help accelerate data collection and applied robotics testing. WIRobotics is advancing global Physical AI commercialisation by combining wearable robotics and humanoid systems, creating a continuous human motion data loop that enhances adaptability, supports industrial deployment, and drives scalable robotics intelligence across global markets. Source: Created by Ventureburn. WIRobotics is positioning itself as a leader in Physical AI robotics. The company combines wearable systems with humanoid development. This creates a continuous data loop between human movement and machine learning systems. The approach is designed to improve long-term robotics intelligence. The company is also strengthening its international commercial strategy. It is expanding distribution networks across global markets. Partnerships with healthcare and industrial groups are supporting adoption. These efforts aim to increase both hardware deployment and software integration. A key focus is the evolution of ALLEX into a full Human Motion Robotics platform. The system aims to support natural human interaction. It is designed for both industrial and service applications. The long-term goal is scalable humanoid deployment. WIRobotics believes its real-world movement data provides a competitive advantage. This dataset supports improved robotic control systems. It also enhances adaptability across different environments. The company sees this as core to future humanoid robotics development. The company will continue building partnerships with global institutions. It is also working on mass production readiness for future humanoid systems. These steps are expected to support broader commercial deployment in the coming years. More News: Exponent Raises $40M To Build Financial Platform For Franchise Operators WIRobotics enters its next growth phase with strong investor backing. The Series B round reflects confidence in its dual-platform strategy. It also highlights growing global interest in humanoid robotics. The company is now positioned across both assistive and humanoid segments. The robotics sector continues to evolve toward Physical AI systems. Companies are increasingly focusing on real-world interaction capabilities. WIRobotics is building within this trend using movement-based datasets. This approach supports long-term scalability. The company plans to accelerate both research and commercial deployment. It will continue expanding WIM while advancing ALLEX development. It also aims to strengthen its global partnerships and supply chain. These efforts support its ambition to lead next-generation robotics innovation. To stay updated on crypto venture capital funding and market trends, visit our venture capital news section for more insights. Clinton Clinton Nwachukwu is a crypto and finance writer with an MBA in Artificial Intelligence and 6+ years of experience creating content for leading global brands. He turns complex topics into clear, actionable insights for readers worldwide. Disclaimer VentureBurn is a media platform covering the latest in cryptocurrency, artificial intelligence, venture capital, and the startup ecosystem. Opinions expressed on VentureBurn are for informational purposes only and do not constitute investment advice. Before making any high-risk investments in digital assets or emerging technologies, readers should conduct their own due diligence. All transactions and financial decisions are made at your own risk, and any losses incurred are solely your responsibility. VentureBurn does not endorse or recommend the buying or selling of any digital assets and is not a licensed investment advisor. Please note that VentureBurn may participate in affiliate marketing programs. Editor's Choice 10 Best Decentralized Crypto Exchanges (DEXs) in 2026 12 Crypto Exchanges with the Lowest Fees Comparison 13 Best Crypto Card Options in 2026: Debit & Credit Ranked Cowboy Space Corporation Raises $275M in Funding Round 10 Easiest Crypto to Mine: Best Profitable Coins In May 2026 Table of Contents Related articles Fractile Raises $220M To Advance AI Inference Chip Development Outmarket AI Raises $17M To Scale Insurance Workflow Automation Mind Robotics Raises $400M To Expand AI Industrial Robotics Platform Exaforce Raises $125M Series B To Scale AI Native Security Operations Platform Embat Raises €30M To Scale AI Treasury Management Platform Latest crypto news Join the Binance EDGE Trading Competition Now To Share in $100K Rewards. Contest Guide Join the Binance Wallet On-Chain Trade & Win Season 3 Win Up To 15 BNB or 88 SOL How Ethereum Casinos Are Driving Payment Innovation Exponent Raises $40M To Build Financial Platform For Franchise Operators Crew Carbon Raises $25M To Scale Wastewater Decarbonisation Technology GovWell Secures $25M Series A From Insight Partners Novella Raises $21M to Scale AI Insurance Brokerage Cash App’s Strategy Shifts And Its Help In Company Growth GridCARE Raises $64M to Launch AI Energy Category Synthetic Raises $10M Seed Funding For AI Bookkeeping Platform VentureBurn is a global news and insights platform covering the latest trends and developments across the technology landscape – including cryptocurrency, artificial intelligence, venture capital, and startups. Our mission is to empower entrepreneurs, investors, and tech enthusiasts with high-quality journalism, practical guides, and expert analysis to navigate and thrive in the rapidly evolving innovation economy. Category About Subcribe to our newletter Top tech news & guides in crypto, AI, VC, and startups — weekly in your inbox.

Images (10): |

|||||

| Unitree unveils optionally manned transformer robot GD01 | https://interestingengineering.com/ai-r… | 10 | May 18, 2026 15:28 | active | |

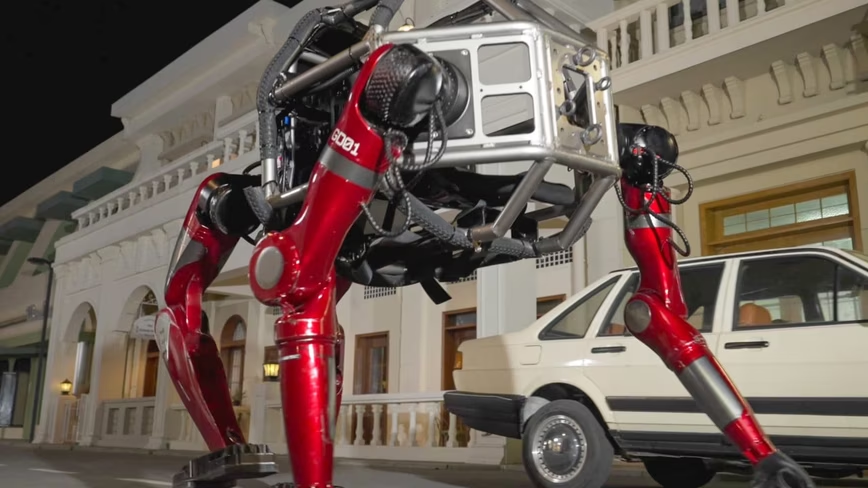

Unitree unveils optionally manned transformer robot GD01Description: Unitree unveils a manned transformable mecha robot capable of switching between bipedal and quadruped modes. Content:

From daily news and career tips to monthly insights on AI, sustainability, software, and more—pick what matters and get it in your inbox. Access expert insights, exclusive content, and a deeper dive into engineering and innovation all with fewer ads or a completely ad-free experience. All Rights Reserved, IE Media, Inc. Follow Us On Access expert insights, exclusive content, and a deeper dive into engineering and innovation all with fewer ads or a completely ad-free experience. All Rights Reserved, IE Media, Inc. GD01 features stable bipedal walking, strong force to topple walls, and quick switch to quadruped mode for rough terrain movement. China’s robotics giant Unitree has unveiled the GD01, a mecha-style machine that can switch between two-legged and four-legged configurations. It resembles a real-life Autobot from the Transformers franchise and is built from high-strength alloy for civilian transport applications. According to the Hangzhou-based firm, it weighs 1102 pounds (500 kilograms) with a pilot on board and has a starting price of 3.9 million yuan (US$573,674). Recently, Unitree launched a low-cost upper-body humanoid robot starting at 26,900 yuan ($ 4290), featuring modular bases and up to 31 degrees of freedom. Unitree’s GD01 demonstration video shows the mecha carrying a pilot in a torso-mounted cockpit as it walks in a humanoid stance, strikes a stack of bricks, and then reconfigures its chassis into a four-legged configuration. The system is presented as a transformable civilian vehicle. The company states the vehicle weighs about 1102 pounds (500 kilograms) with a passenger on board. Founder Wang Xingxing is shown seated inside the cockpit during the demonstration, highlighting a sharp size contrast between operator and machine. In upright mode, the robot reaches roughly 1.6 times the height of an average adult, reports 36Kr. It demonstrates stable bipedal walking, high force output capable of toppling a brick wall, and a rigid structure that remains steady under impact. The system can fold its legs, adjust its center of gravity, and transition into a quadruped form within seconds, continuing movement without external assistance across uneven terrain. The one-minute video was shared on social media platforms, with the company releasing limited technical specifications. Unitree also issued a safety notice urging users not to attempt hazardous modifications or extreme tests, noting that humanoid robotics remains in an early experimental stage with functional limitations for personal users. The company shared no additional specifications publicly yet. The GD01 adds to the Unitree portfolio amid rapid growth in China’s humanoid robotics industry. In April, the company released an upper-body bipedal humanoid robot starting at 26,900 yuan, designed with modular deployment options including a fixed base and mobile chassis for use in research, light industry, and service applications. According to research firm Omdia, Chinese companies accounted for nearly 90 percent of global humanoid robot sales in 2025. Unitree reportedly shipped more than 5,500 humanoid robots in the previous year, while US companies such as Tesla, Figure AI, and Agility Robotics each shipped around 150 units during the same period, reports the South China Morning Post (SCMP). Chinese humanoid robots are also priced lower than many Western alternatives. Unitree’s entry-level humanoid R1 costs about US$6,000, while rival AgiBot offers a model priced around US$14,000. Tesla CEO Elon Musk has estimated that the future cost of the Optimus humanoid robot could fall between US$20,000 and US$30,000. Unitree sells its R1, G1 humanoids, and Go2 robot dog internationally via Alibaba’s AliExpress platform, targeting markets in North America, Europe, and Japan. Chinese humanoid robots have also begun appearing in airports and logistics operations, including trials by Japan Airlines using systems from Unitree and UBTech Robotics at Tokyo’s Haneda Airport, reports SCMP. In March, Unitree filed for an IPO on Shanghai’s STAR Market, planning to allocate about 85 percent of its 4.2 billion yuan ($61 million) fundraising target to research and development, including over 2 billion yuan ($29 million) for robotics model development. Jijo is an automotive and business journalist based in India. Armed with a BA in History (Honors) from St. Stephen's College, Delhi University, and a PG diploma in Journalism from the Indian Institute of Mass Communication, Delhi, he has worked for news agencies, national newspapers, and automotive magazines. In his spare time, he likes to go off-roading, engage in political discourse, travel, and teach languages. Premium Follow

Images (10):

|

|||||

| Unitree GD01 mecha unveiled as company files for $7 billion … | https://thenextweb.com/news/unitree-gd0… | 9 | May 18, 2026 15:27 | active | |

Unitree GD01 mecha unveiled as company files for $7 billion IPO after outselling Tesla on humanoid robotsURL: https://thenextweb.com/news/unitree-gd01-mecha-humanoid-robot-ipo Description: Unitree Robotics unveiled a $650,000 transformable mecha and is filing for a $7 billion IPO. The Chinese company shipped more humanoid robots than Tesla in 2025. Content:

TL;DRUnitree Robotics unveiled the GD01, a 2.8-metre transformable mecha priced from $650,000, but the real story is the company behind it: Unitree shipped more humanoid robots than Tesla in 2025, holds 70% of the quadruped market, grew revenue 335% to $235 million, has been profitable since 2020, and is filing for a $7 billion IPO on the Shanghai Stock Exchange. Unitree Robotics unveiled the GD01, a 2.8-metre transformable mecha priced from $650,000, but the real story is the company behind it: Unitree shipped more humanoid robots than Tesla in 2025, holds 70% of the quadruped market, grew revenue 335% to $235 million, has been profitable since 2020, and is filing for a $7 billion IPO on the Shanghai Stock Exchange. Unitree Robotics has unveiled a 2.8-metre transformable mecha that a human pilot climbs inside and operates from an open cockpit in the torso. The GD01 walks on two legs, folds into a quadruped configuration in seconds, weighs roughly 500 kilograms with a passenger, and is priced from 3.9 million yuan, approximately 650,000 dollars. It can also operate unmanned. Unitree calls it the world’s first production-ready manned mecha. It is a civilian vehicle, the company says, built for transport across rough terrain, exploration, and rescue operations where a tall vantage point helps. The GD01 is a spectacle. It is also a brand statement from a company that has earned the right to make one. TNW City Coworking space - Where your best work happens A workspace designed for growth, collaboration, and endless networking opportunities in the heart of tech. Unitree was founded in 2016 by Wang Xingxing, who built his first quadruped robot as a master’s thesis project at Shanghai University and left a job at DJI to start the company in a 50-square-metre office in Hangzhou. A decade later, Unitree holds roughly 70 per cent of the global quadruped robot market, having shipped more than 23,700 units in 2024 across its Go, A, and B series. In 2025, it shipped more than 5,500 humanoid robots, more units than any other manufacturer including Tesla. Revenue reached 1.71 billion yuan, approximately 235 million dollars, in 2025, representing 335 per cent year-on-year growth. The company has been profitable every year since 2020. Humanoid robots overtook quadrupeds as the primary revenue driver in 2025, contributing roughly 52 per cent of total revenue in the first three quarters. Unitree filed for an initial public offering on the Shanghai Stock Exchange in March 2026, seeking to raise 4.2 billion yuan, approximately 610 million dollars, at a target valuation of seven billion dollars. The investor list reads like a directory of Chinese technology capital: Alibaba, Tencent, China Mobile, Geely Capital, Ant Group, Jinqiu Capital (ByteDance’s investment arm), and HongShan Capital, formerly Sequoia China. Every major Chinese technology conglomerate has money in the company that dominates the market for robots that walk. Unitree’s commercial significance has nothing to do with the GD01. It rests on a product line that spans the market from consumer to industrial at prices that undercut every Western competitor by an order of magnitude. The Go2 consumer quadruped starts at 1,600 dollars. The G1 humanoid, a research and light industrial platform, sells for 13,500 to 27,000 dollars. The H2, a full-size industrial-grade humanoid, is priced at 29,900 dollars. The B2-W, a wheeled quadruped variant, handles inspection, patrol, and fire rescue. Unitree Robotics unveiled the GD01, source: Unitree For context, Figure AI’s Figure 02 industrial humanoid is being piloted at BMW at costs that have not been publicly disclosed but are estimated to be multiples of Unitree’s pricing. Boston Dynamics has begun commercial production of its electric Atlas, with every 2026 unit already committed to Hyundai. Tesla’s Optimus remains in research and development with no productive factory deployments as of the first quarter of 2026. Unitree is the only company simultaneously shipping consumer, research, and industrial humanoid robots at scale. China’s humanoid robot boom faces a commercialisation reality check, with more than 150 companies chasing a market where only 23 per cent of buyers report satisfaction with the robots they have purchased. Unitree’s answer to the satisfaction problem is iteration speed and price. If the first robot disappoints, the replacement costs less than a competitor’s pilot programme. The company treats humanoid robots the way Chinese smartphone manufacturers treated handsets a decade ago: ship fast, price aggressively, iterate on customer feedback, and let volume drive down cost. The GD01 transforms between bipedal and quadrupedal modes by folding its legs and shifting its centre of gravity, a process that takes a few seconds. In bipedal mode, it walks upright at nearly three metres tall. In quadruped mode, it lowers its profile for stability on rough terrain. The machine uses LiDAR, depth cameras, an inertial measurement unit, and pressure sensors for stability and navigation. It runs on Unitree’s self-developed high-torque motors. Demonstration videos show it walking through urban environments, smashing through brick walls, and carrying a pilot across uneven ground. Unitree has been explicit about safety. The company has asked users to refrain from dangerous modifications and noted that humanoid robotics remains in an early experimental stage with functional limitations. The 650,000 dollar price is described as a preliminary reference; the final production version may be adjusted depending on performance optimisation. The machine is not a toy. It is also, unmistakably, not yet a product with a clear commercial market beyond high-net-worth buyers and demonstration events. What it is, precisely, is a capability demonstration. The GD01 proves that Unitree can build large-scale bipedal systems with transformation mechanics, high-torque actuation, and manned operation. Those capabilities feed back into the company’s commercial product line. The motors, sensors, control algorithms, and structural engineering developed for a 500-kilogram mecha are directly applicable to the next generation of industrial humanoids that will carry loads, navigate construction sites, and operate in environments too dangerous for human workers. Tesla has explored using its Shanghai Gigafactory for Optimus humanoid robot mass production, a decision that would place the world’s most valuable car company’s robotics programme inside China’s manufacturing ecosystem. The irony is instructive. Tesla, the American company, would build its humanoid robot in China because Chinese manufacturing infrastructure, supply chains, and cost structures are the most efficient in the world for producing complex electromechanical systems at scale. Unitree already has that infrastructure. The components that go into a humanoid robot, precision motors, sensors, thermal management systems, lightweight structural materials, and battery cells, are the same components that Chinese factories produce at scale for smartphones, electric vehicles, and drones. Morgan Stanley forecasts China’s humanoid robot sales will reach 28,000 units in 2026, a 133 per cent year-on-year increase, with material costs falling 16 per cent as supply chain efficiencies from consumer electronics manufacturing carry over into robotics production. Chinese electric vehicles are flooding American social media despite 100 per cent tariffs, driven by consumer demand that trade barriers cannot fully suppress. The pattern for robotics is likely to follow the same trajectory. Unitree’s quadrupeds already sell globally. Its humanoids are priced at a fraction of Western alternatives. The GD01, whatever its practical utility, ensures that the Unitree brand is visible in every robotics conversation on the planet. Unitree’s Shanghai Stock Exchange filing is the first major humanoid robotics IPO. Boston Dynamics, owned by Hyundai, has been valued between 21 and 28 billion dollars by Korean securities firms, with bullish IPO projections reaching 100 billion dollars. Figure AI raised one billion dollars at a 39 billion dollar valuation in September 2025. But neither has gone public. Unitree, the company from the 50-square-metre Hangzhou office, would be the first pure-play robotics company to list. Cerebras, the AI chipmaker, is targeting a 40 billion dollar IPO valuation in what would be the first major AI hardware listing of 2026. Unitree’s seven billion dollar target is more modest, but the company has something Cerebras does not: profitability. Unitree has been profitable every year since 2020. In a market where AI and robotics companies routinely burn capital at extraordinary rates, a profitable robotics manufacturer filing for a public listing is an anomaly. UBTech, one of Unitree’s Chinese competitors, has offered 18 million dollars to hire a chief AI scientist, a figure that illustrates the talent arms race in Chinese robotics. Unitree’s advantage is not a single hire. It is a decade of iteration from Wang Xingxing’s thesis project to a product line that covers the market from 1,600-dollar consumer quadrupeds to 650,000-dollar pilotable mechs, all manufactured in China at costs that Western competitors cannot match. The GD01 will not sell in volume. A 650,000 dollar mecha does not have a mass market. What it has is attention. Every technology publication in the world covered the announcement. The videos went viral. The brand registered. And behind the spectacle, Unitree’s actual business, the one generating 335 per cent revenue growth and filing for a seven billion dollar IPO, continued shipping robots that walk, run, and work at prices that make every competitor recalculate their cost structure. Wang Xingxing built his first walking robot in a university lab in 2013. Thirteen years later, the company he founded from that project sells more humanoid robots than Tesla, holds 70 per cent of the quadruped market, counts every major Chinese technology company as an investor, and just unveiled a vehicle that transforms from a walking machine into a crawling one with a human inside. The GD01 is not the product. The product is the company that could build it. Alina Maria Stan builds connections that people actually feel. As co-founder and COO of Tekpon, she turns product intuition into real moment (show all) Alina Maria Stan builds connections that people actually feel. As co-founder and COO of Tekpon, she turns product intuition into real moments of discovery, shaping how teams find and adopt SaaS every day. Since 2020, she has led Tekpon’s brand voice, media strategy, and growth plays with a clear focus on human outcomes behind every metric. Before Tekpon, Alina followed curiosity across industries and countries. She was CEO of King Casino Bonus and led affiliate and brand strategy at Extremoo Media and Fable Media in Denmark, where she learned how to build partnerships that last. Early on, she sharpened her CRM and pricing instincts at K.H. ApS, always asking why customers choose what they choose. Her approach is rooted in more than a decade of international experience and two master’s degrees, one in Sustainable Consumption from the Technical University of Munich and one in Consumer Affairs Management from Aarhus University. Get the most important tech news in your inbox each week. The heart of tech A Tekpon Company Copyright © 2006—2026, Cogneve, INC. Made with <3 in Amsterdam.

Images (9):

|

|||||

| Unitree unveils $4,290 humanoid robot with upper-body-only design | https://interestingengineering.com/ai-r… | 10 | May 18, 2026 15:27 | active | |

Unitree unveils $4,290 humanoid robot with upper-body-only designURL: https://interestingengineering.com/ai-robotics/china-unitree-humanoid-robot Description: Unitree launches a low-cost upper-body humanoid robot with modular design and precision control for research and industry. Content:

From daily news and career tips to monthly insights on AI, sustainability, software, and more—pick what matters and get it in your inbox. Access expert insights, exclusive content, and a deeper dive into engineering and innovation all with fewer ads or a completely ad-free experience. All Rights Reserved, IE Media, Inc. Follow Us On Access expert insights, exclusive content, and a deeper dive into engineering and innovation all with fewer ads or a completely ad-free experience. All Rights Reserved, IE Media, Inc. Combines binocular vision, a 4-array mic, and voice interaction to enable real-time visual perception and speech-based control in one system. Chinese robotics firm Unitree has introduced a low-cost bipedal humanoid robot with an upper-body-only design. According to the Hangzhou-based firm, with prices starting at 26,900 yuan ($ 4290), it significantly lowers entry barriers in the sector. The robot replaces the traditional full-body structure with modular deployment options, including a fixed base or mobile chassis. It offers flexible configurations with 5 or 7 degrees of freedom per arm, for a total of up to 31 degrees of freedom. Last week, Unitree showcased its G1 humanoid gliding on skates, performing spins, turns, and flips using coordinated wheel-leg balance control. The bipedal robot replaces a full-body design with modular deployment options, offering either a fixed base or mobile chassis for varied applications. Each arm is available in 5-DOF or 7-DOF configurations, with total system DOF ranging from 15 to 31. The waist rotates ±150°, while the head supports ±115° yaw and ±36° pitch. Its gripper achieves ±0.1 mm repeatability and supports interchangeable dexterous hands, with each arm handling payloads up to 4.4 pounds (2 kilograms), reports EU.36KR. The base system integrates binocular vision, a 4-array microphone, and voice interaction, enabling combined visual perception and speech-based control. It is powered by dual 8-core high-performance CPUs, while the head vision module provides up to 10 TOPS of AI compute, supporting real-time perception tasks. The standard model includes 5-degree-of-freedom robotic arms, with an optional 7-DOF upgrade for enhanced manipulation. Mechanically, the platform is modular, offered in both fixed-base and wheeled configurations, allowing deployment across lab, industrial, and service environments. The system supports interchangeable components and exposes low-level interfaces for secondary development, enabling researchers to customize control, perception, and task execution. Weighing between 24 pounds (11 kilograms) and 70 pounds (32 kilograms), the robot supports both external and vehicle-mounted power supplies, balancing endurance with deployment flexibility. Its architecture is designed for rapid iteration, making it suitable for applications ranging from assembly and training to mobile service tasks such as guided interaction and warehouse assistance. Unitree is applying its proven playbook from quadruped robots to dual-arm humanoid systems. Experts point out that its earlier Go series succeeded by delivering capable legged robots at prices far below competing platforms, attracting a broad developer base and fostering an ecosystem around its SDK and control stack. A similar momentum could emerge in manipulation robotics if this approach translates effectively, reports Startup Fortune (SF). Last year, Unitree also unveiled the R1, a humanoid robot with 26 joints, at just 39,999 yuan (about US $5,900). However, the competitive landscape is more crowded. Companies like Boston Dynamics bring strong engineering depth, established enterprise ties, and brand trust, factors that matter in industrial deployments. Unitree’s lower-cost strategy may face limits in markets where reliability, support, and reputation outweigh price advantages. Experts say affordability is key for researchers and developers. Access to capable hardware lowers barriers, speeds iteration, and enables real-world testing beyond simulations, accelerating progress in embodied AI, especially in manipulation and applied robotics. The real signal will emerge from how developers use these systems. Open-source projects, academic work, and early-stage startups building on accessible platforms will shape the next phase of innovation more than high-end demonstrations, reports SF. Jijo is an automotive and business journalist based in India. Armed with a BA in History (Honors) from St. Stephen's College, Delhi University, and a PG diploma in Journalism from the Indian Institute of Mass Communication, Delhi, he has worked for news agencies, national newspapers, and automotive magazines. In his spare time, he likes to go off-roading, engage in political discourse, travel, and teach languages. Premium Follow

Images (10):

|

|||||

| Unitree Robotics G1 robot skates on ice and Rollerblades with … | https://www.foxnews.com/tech/unitree-g1… | 10 | May 18, 2026 15:27 | active | |

Unitree Robotics G1 robot skates on ice and Rollerblades with ease | Fox NewsURL: https://www.foxnews.com/tech/unitree-g1-humanoid-robot-ice-skates-rollerblades Description: Unitree Robotics' G1 humanoid robot glides on ice skates and Rollerblades, performing spins and flips while maintaining balance through wheel and leg control. Content:

This material may not be published, broadcast, rewritten, or redistributed. ©2026 FOX News Network, LLC. All rights reserved. Quotes displayed in real-time or delayed by at least 15 minutes. Market data provided by Factset. Powered and implemented by FactSet Digital Solutions. Legal Statement. Mutual Fund and ETF data provided by LSEG. Fox News Flash top headlines are here. Check out what's clicking on FoxNews.com. We've seen robots walk, run, climb stairs and even recently finish a half-marathon. What we haven't seen until now is a robot gliding across the ice like an Olympic skater or spinning on one leg on Rollerblades without losing balance. That is exactly what Unitree Robotics just showed with its G1 humanoid robot. In newly released footage, the robot moves on Rollerblades and ice skates while keeping its posture steady through coordinated wheel and leg control. It's pretty amazing to watch. Sign up for my FREE CyberGuy Report ELON MUSK TEASES A FUTURE RUN BY ROBOTS Unitree’s G1 humanoid robot glides on wheels, Rollerblades and ice skates, showing off sharp balance, spins and even a flip. (Unitree Robotics) When you actually watch the video, a few moments really stand out. It starts with the robot leaning into the motion, almost stepping as it propels itself forward on two wheels, shifting its weight from side to side as if one wheel is leading the next. Its arms move up and down to stay balanced, giving it a rhythm that feels closer to walking than rolling, like it's constantly adjusting in real time. Then it pulls off a series of spins and an impressive flip, landing clean on two wheels and continuing without missing a beat. No hesitation. Next, it switches to Rollerblades and moves with the same level of control. It glides, does some fancy footwork, changes direction and even lifts one leg while spinning and staying balanced like it's second nature. That alone would be impressive. But the real wow moment comes at the end. On ice, the robot starts doing smooth twirls, almost like it’s figure skating, while holding its posture without slipping. That’s when you start to see how far these humanoid robots have come. Most humanoid robots face the same problem. Staying upright while doing anything dynamic pushes the limits of control systems. The G1 changes that equation by blending two approaches. It combines wheeled efficiency with legged adaptability. That means it can roll when speed matters and step when terrain gets tricky. In the demo, the robot transitions smoothly between these modes. It executes continuous motion instead of stopping to rebalance. You see 360-degree turns, controlled spins and even front flips, all without a visible pause. That level of fluidity points to improvements in real-time control, balance correction and motion planning. These are areas that have held humanoid robots back for years, until now. ROBOTS LEARN 1,000 TASKS IN ONE DAY FROM A SINGLE DEMO The Unitree G1 performs spins, turns and a clean flip while riding on wheels and Rollerblades. The demo offers a striking look at how far humanoid robots have come. (Unitree Robotics) The hardware behind the G1 explains why it can pull this off. Unitree designed the system as a full-stack platform for AI training and deployment. That means the robot collects its own data, learns from simulation and applies those lessons in the real world. The robot comes in two main versions. The Standard model focuses on stationary tasks. The Flagship version adds a wheeled base that can reach about 3.3 feet per second. Both variations share a humanoid structure with up to 19 degrees of freedom. Each arm has seven degrees of freedom and can handle about 6.6 pounds. A flexible waist allows wide motion ranges, which helps with balance during dynamic movement. Vision comes from a binocular camera in the head, along with wrist cameras for close-up work. The system can use different grippers, including dexterous hands for more precise tasks. At the core, the Flagship model runs on an NVIDIA Jetson Orin NX module with up to 100 TOPS of compute. That level of onboard processing supports real-time decision-making during complex movement. Battery life can stretch up to six hours, depending on how hard the robot is working. For years, robotics has leaned in two directions. Wheeled machines move efficiently but struggle with obstacles. Legged robots handle complex environments but use more energy and move more slowly. Unitree's approach tries to merge both. By adding wheels to a humanoid frame, the G1 can move quickly across flat surfaces and still adapt when conditions change. That hybrid design also reduces wear on joints and improves energy efficiency over long distances. It also opens the door to new types of tasks. A robot like this could move through a warehouse, switch to precise manipulation at a workstation and then roll to the next job without slowing down. Take my quiz: How safe is your online security? Think your devices and data are truly protected? Take this quick quiz to see where your digital habits stand. From passwords to Wi-Fi settings, you’ll get a personalized breakdown of what you’re doing right and what needs improvement. Take my Quiz here: CyberGuy.com. NEW MOBILE ROBOT HELPS SENIORS WALK SAFELY AND PREVENT FALLS The Unitree R1 humanoid robot runs on a flat surface. The model is noted for its affordability at $5,900. (Unitree Robotics) The skating is what grabs you first. It is fun to watch and hard to ignore. What stands out after a few seconds is how steady the robot stays the whole time. It keeps moving, keeps adjusting and never looks close to losing control. That is a big change from the stop-and-go motion we are used to seeing. If this keeps improving, and I know it will, you are going to see robots that can move through real environments without slowing down or needing constant input. CLICK HERE TO DOWNLOAD THE FOX NEWS APP So here is the question. If robots can move this fluidly today, how long before they start working alongside you without missing a step and are you OK with that? Let us know by writing to us at CyberGuy.com. Sign up for my FREE CyberGuy Report Copyright 2026 CyberGuy.com. All rights reserved. Kurt "CyberGuy" Knutsson is an award-winning tech journalist who has a deep love of technology, gear and gadgets that make life better with his contributions for Fox News & FOX Business beginning mornings on "FOX & Friends." Got a tech question? Get Kurt’s free CyberGuy Newsletter, share your voice, a story idea or comment at CyberGuy.com. Get a daily look at what’s developing in science and technology throughout the world. Subscribed You've successfully subscribed to this newsletter! This material may not be published, broadcast, rewritten, or redistributed. ©2026 FOX News Network, LLC. All rights reserved. Quotes displayed in real-time or delayed by at least 15 minutes. Market data provided by Factset. Powered and implemented by FactSet Digital Solutions. Legal Statement. Mutual Fund and ETF data provided by LSEG.

Images (10):

|

|||||

| Sergey Levine: General robotic foundation models may outperform narrow solutions, … | https://cryptobriefing.com/sergey-levin… | 10 | May 14, 2026 16:00 | active | |

Sergey Levine: General robotic foundation models may outperform narrow solutions, the future of medicine involves autonomous robots, and the importance of understanding physical interactions | Invest Like the BestDescription: General robotic models could revolutionize robotics by enhancing adaptability and efficiency across diverse applications. Content:

Searching... General robotic models could revolutionize robotics by enhancing adaptability and efficiency across diverse applications. Share Sergey Levine is an Associate Professor in the Department of Electrical Engineering and Computer Sciences at UC Berkeley and co-founder of Physical Intelligence. He earned his PhD in Computer Science from Stanford University in 2014 and joined UC Berkeley faculty in 2016. His research pioneered deep reinforcement learning algorithms for robotics, enabling end-to-end training of neural network policies that combine perception and control. General robotic models could revolutionize robotics by enhancing adaptability and efficiency across diverse applications. Share Sergey Levine is an Associate Professor in the Department of Electrical Engineering and Computer Sciences at UC Berkeley and co-founder of Physical Intelligence. He earned his PhD in Computer Science from Stanford University in 2014 and joined UC Berkeley faculty in 2016. His research pioneered deep reinforcement learning algorithms for robotics, enabling end-to-end training of neural network policies that combine perception and control. All content is for informational purposes only and does not constitute investment advice. CryptoBriefing does not provide recommendations to buy, sell, or hold any asset or contract. See our Disclaimer & Risk Disclosure. © Decentral Media and Crypto Briefing® 2026. Sign in to your account Create your account Already have an account? Sign In Forgot your password? Sign In Daily news, analysis & market insights delivered free.

Images (10):

|

|||||

| Researchers Build Light-Powered AI Chips That Let Robots Learn Autonomously | https://www.techjuice.pk/researchers-bu… | 10 | May 14, 2026 16:00 | active | |

Researchers Build Light-Powered AI Chips That Let Robots Learn AutonomouslyDescription: Researchers develop light-powered photonic chips that enable faster and more energy efficient AI learning. Content:

Researchers at Xidian University in China have demonstrated a light-powered computing chip capable of running reinforcement learning tasks entirely within the optical domain, a breakthrough that eliminates a fundamental limitation that has held back light-based AI hardware for over a decade. The findings, published in the journal Optica, point toward a future where autonomous vehicles and robots learn directly from their environments using chips that are dramatically faster and more energy-efficient than today’s electronic processors. Photonic spiking neural systems have long been considered a promising path toward AI hardware that outpaces conventional electronics. These systems mimic how biological neurons communicate by using rapid pulses of light rather than electrical signals, which travel faster and consume far less energy. The problem has always been in the learning step. “Photonic spiking neural systems use brief optical pulses, or spikes, to emulate neural signaling, but they can typically only process the linear parts of computation using light,” said research team leader Shuiying Xiang from Xidian University. Every previous attempt to build such systems ran into the same wall: the nonlinear operations that actually make learning and decision-making possible required converting signals back into electrical form for processing. “Previously, the nonlinear steps that make learning and decision making possible required the signal to be converted back into electronic signals. This adds delay and undercuts the speed and energy advantages of photonics,” Xiang explained. The new design removes that conversion step entirely, keeping all computation, both linear and nonlinear, inside the optical domain. The researchers designed a programmable photonic neuromorphic platform built from two chips working in tandem. The first is a 16-channel photonic neuromorphic processor containing 272 trainable parameters, capable of handling multiple optical signals simultaneously. The second features a distributed feedback laser array with a saturable absorber component that enables low-threshold nonlinear optical spiking, which is the key element that allows learning to happen without electronics. To test the system’s practical capability, the team used reinforcement learning, the same AI training approach that underlies many modern robotic and autonomous systems, where a machine learns through repeated trial and error rather than labelled training data. “We used this system to demonstrate reinforcement learning, supported by a hardware and software collaborative framework that trains and runs the neural network. The system was able to learn quickly through trial and error, showing potential as a fast, low-latency solution that could be used for applications such as autonomous driving and embodied intelligence,” Xiang said. The results were tested against two standard control benchmarks. One required balancing a pole on a moving cart, the classic CartPole task used widely to evaluate reinforcement learning systems. The other required stabilising an inverted pendulum. Hardware decisions closely matched the software model in both tests, with accuracy dropping only 1.5% on CartPole and 2% on the pendulum challenge. On raw computing metrics, the photonic linear processing reached 1.39 tera operations per second per watt. Nonlinear computation achieved nearly 988 giga operations per second per watt. On-chip computing latency measured just 320 picoseconds, a speed advantage that conventional electronic processors cannot approach. The current prototype operates with 16 optical channels. The team has announced plans to scale the architecture to a 128-channel photonic spiking neural chip, which would support more complex reinforcement learning tasks closer to real-world deployment conditions. The researchers also aim to build compact hybrid photonic systems suitable for edge computing, where low power consumption and fast local processing are both critical requirements. If the architecture scales successfully, photonic AI hardware could offer a credible alternative to electronic GPU clusters for the class of applications, including robotics, autonomous vehicles, and real-time environmental adaptation, where latency and energy consumption are the binding constraints on what machines can do. You can read the research here. Abdul Wasay explores emerging trends across AI, cybersecurity, startups and social media platforms in a way anyone can easily follow. Google and SpaceX negotiate to launch orbital data centers revolutionizing AI computing infrastructure. Google’s Project Suncatcher aims for 2027 prototype satellites with TPUs. SpaceX pitches. Grok app downloads fell nearly 60% to 8.3 million in April from January peak of 20 million according to AppMagic data reported by Wall Street. OpenAI launched Daybreak cybersecurity initiative on May 11 using GPT-5.5 models and Codex Security to find vulnerabilities before exploitation. The platform competes with Anthropic’s Claude. The provincial government of Punjab has approved a government GPU cloud project, establishing the first local artificial intelligence platform for official and academic use. As. Premier Pakistan technology news website with special focus on startups, entrepreneurship and consumer products. © 2026 TechJuice.PK – All rights reserved.

Images (10):

|

|||||

| Disney trains robots to fall, roll, and land safely without … | https://interestingengineering.com/ai-r… | 10 | May 14, 2026 16:00 | active | |

Disney trains robots to fall, roll, and land safely without damageURL: https://interestingengineering.com/ai-robotics/disney-builds-smart-robot-system Description: Disney researchers have developed a new system that lets robots turn uncontrolled falls into safe and controlled landings. Content: