AUTOMATION HISTORY

1191

Total Articles Scraped

2288

Total Images Extracted

Scraped Articles

| Action | Title | URL | Images | Scraped At | Status |

|---|---|---|---|---|---|

| Hyundai Motor : Completes Acquisition of Boston Dynamics from SoftBank | https://www.marketscreener.com/quote/st… | 0 | Dec 30, 2025 00:03 | active | |

Hyundai Motor : Completes Acquisition of Boston Dynamics from SoftBank
Description: Hyundai Motor Group acquires a controlling interest in Boston Dynamics from SoftBank, following regulatory approvals and other conditions ... Content: |

|||||

| Boston Dynamics: Spot learns the backflip, for good reason … | https://www.notebookcheck.com/Boston-Dy… | 1 | Dec 30, 2025 00:03 | active | |

Boston Dynamics: Spot learns the backflip, for good reason - Notebookcheck.com News
Description: The robot dog Spot has learned to do multiple backflips. Thanks to reinforcement learning, Spot should be better able to survive borderline situations, according to Boston Dynamics. That protects the hardware Spot carries. Content:

Boston Dynamics is making Spot do flips. The four-legged robot has learned something that never occurred to its inventors at the time, and that even the team that adapted the software initially considered impossible. What sounds useless at first glance (and even in Boston Dynamics' eyes, doing a flip is nothing the company's customers need) has an interesting side effect: with the control needed to perform a flip, Spot has also learned to catch itself better in critical situations. When the robot falls, slips, or stumbles, Spot can protect itself from major damage. The payload, often expensive sensors on its back, can also be better protected from fall damage.

Boston Dynamics calls this reinforcement learning and shows in the video how well it now works. Even a backflip is possible, and Spot manages several in a row. Even with wheels fitted, Spot can balance on its front legs. It was a long road to get there, however, as Arun Kumar, robotics engineer at Boston Dynamics, explains in the video. He first simulated the scenarios (successfully) on a computer. But when the software was loaded onto the real robot, something went wrong almost every time, Kumar explains. In the video, Boston Dynamics also shows the first clumsy attempts, initially on gym mats to minimize damage. Later, Boston Dynamics took greater risks.

As an interesting side effect, Spot can also walk much more naturally, like other four-legged animals. In production use, Spot still walks the way one would expect of a robot. That does not look particularly elegant, but it is gentler on the hardware. The new experiments push Spot and its motors to their technical limits.

The new capabilities also show that Spot's development is not yet finished, even if Boston Dynamics is currently focusing more on Atlas, at least in its videos.

Images (1):

|

|||||

| Boston Dynamics Atlas: company abandons humanoid robot / NV | https://techno.nv.ua/popscience/boston-… | 0 | Dec 30, 2025 00:03 | active | |

Boston Dynamics Atlas: company abandons humanoid robot / NV
URL: https://techno.nv.ua/popscience/boston-dynamics-atlas-50410766.html
Description: After almost 11 years of development, Boston Dynamics has announced that it is ending work on the humanoid ro... Content: |

|||||

| Boston Dynamics' Spot danced in a sequined outfit | https://techno.nv.ua/popscience/boston-… | 0 | Dec 30, 2025 00:03 | active | |

Boston Dynamics' Spot danced in a sequined outfit
URL: https://techno.nv.ua/popscience/boston-dynamics-spot-sparkles-50414509.html
Description: Boston Dynamics, known for its amazing robots, has published a new video in which its ro... Content: |

|||||

| Housework entrusted to the Boston Dynamics robot! - ShiftDelete.Net | https://shiftdelete.net/ev-isleri-bosto… | 1 | Dec 30, 2025 00:03 | active | |

Housework entrusted to the Boston Dynamics robot! - ShiftDelete.Net
URL: https://shiftdelete.net/ev-isleri-boston-dynamics-robotuna-emanet
Description: Boston Dynamics continues its innovations in robot technology at full speed. The Atlas robot will be your helper with housework. Content:

Massachusetts-based robotics giant Boston Dynamics, through its collaboration with the Toyota Research Institute (TRI), has carried its humanoid robot Atlas into what amounts to a new era. Atlas is now controlled by a complex artificial intelligence system called a "Large Behavior Model" (LBM), trained on massive datasets of human actions. This new brain teaches the robot not only what to do but also how to cope with unexpected situations.

The most striking part of the published video was the moments in which Atlas was put through a test of patience. The robot was performing the task of placing certain objects into a box. An engineer, acting like a mischievous coworker, kept closing the box's lid and pushing the box to a different spot. Earlier robotic systems might have frozen or made errors in the face of this kind of interference. Atlas, however, analyzed the situation. It calmly readjusted its position, found the box, and opened the lid. It then resumed its task where it had left off. This scene showed that the robot has gained the ability not only to follow programmed commands but also to adapt dynamically to changing conditions.

In its statement, Boston Dynamics said that the biggest revolution of LBMs is that new skills can be added to the robot without writing a single line of code. This means that the AI's learning capacity will replace programming work that previously took months. Scott Kuindersma, Vice President of Robotics Research at Boston Dynamics, said, "This work opens a window onto our vision of general-purpose robots that will transform how we live and work." Kuindersma emphasized that this approach will make it easier for highly capable robots like Atlas to collect data for tasks requiring strength and precision. He added that this would accelerate development.

Rapidly advancing robot technology shows that more agile, intelligent, and adaptable humanoid robots, able to work in areas ranging from folding laundry to factory assembly, are no longer a distant dream.

Images (1):

|

|||||

| Lidl uses unloading robots from Boston Dynamics | https://www.elektronikpraxis.de/lidl-ve… | 1 | Dec 30, 2025 00:03 | active | |

Lidl uses unloading robots from Boston Dynamics
Description: The Stretch unloading robot from Boston Dynamics is now in regular operation at Lidl. By mid-2026, 22 systems are to unload containers automatically in import warehouses in several European countries, reducing physically demanding work. Content:

Lidl is pushing ahead with the automation of its logistics and will rely more heavily on robotics for unloading sea containers. After a pilot phase that began last September, the retail group has now confirmed that the Stretch robot from Boston Dynamics is firmly planned for several import warehouses. A total of 22 systems are to be working in the Netherlands, Belgium, Austria, and Spain by mid-2026. The rollout marks the largest known deployment in Europe to date of the robot, which was developed specifically for containers.

Stretch is a mobile unloading robot that grips cartons directly in the container and sets them down. It uses a vacuum-based gripper head with multiple suction cups, combined with cameras and image recognition. The system orients itself autonomously inside the container, identifies cartons, and picks them up individually or in groups. Boston Dynamics cites typical performance figures of several hundred cartons per hour and an operating time of up to 16 hours per battery charge.

Lidl justifies the rollout with a successful pilot phase. According to the company, the decision followed a test in an import warehouse whose results were rated internally as very positive. When asked, Lidl emphasized that the physical relief for employees and the stable working speed in the container were the decisive factors. Stretch is meant to handle containers with mixed carton sizes, varying packaging quality, and partially uneven stacks, which is considered a critical everyday reality in European import warehouses. Companies such as the Otto Group and DHL already use Stretch in other logistics scenarios. There, depending on the setup, the system unloads between roughly 400 and about 700 packages per hour. Such figures cannot be transferred directly to Lidl, but they give an idea of where Stretch lands in typical deployments.

Technically, the view into the container remains the most demanding scenario. Space inside is tight, the lighting fluctuates, and the arrangement of cartons changes from container to container. Stretch combines depth cameras, 2D image processing, and an internal carton model. Its robot arm rides on a mobile base that moves along the container edge. The vacuum gripper works with adaptive pressure so as not to put further stress on damaged cartons. The system communicates via a local edge controller and passes status data to the warehouse IT.

Boston Dynamics has developed the robot further in recent years. Newer variants support multipick functions, in which several cartons are moved at once. That speeds up the unloading of light packaging. Boston Dynamics is also continuously improving the image processing so that the robot needs fewer manual interventions, even in tight areas and with uneven stacks.

Lidl has been investing in automation for years. This includes automated repalletizing systems such as Genesys One from Premium Robotics as well as extensive intralogistics projects in cooperation with Europa Systems. The deployment of Stretch fits into this line but specifically targets the ergonomically difficult process of container unloading. The current robot rollout concerns only import warehouses that handle large volumes of cartons from overseas. (mc)

Images (1):

|

|||||

| Boston Dynamics cancels production of humanoid robot Atlas: Tech: Science and Technology: … | https://lenta.ru/news/2024/04/18/atlas-… | 1 | Dec 30, 2025 00:03 | active | |

Boston Dynamics cancels production of humanoid robot Atlas: Tech: Science and Technology: Lenta.ru
URL: https://lenta.ru/news/2024/04/18/atlas-bye/
Description: The American company Boston Dynamics has announced that it is ending development of the Atlas robot, TechCrunch reports. Content:

Photo: Josh Reynolds / AP

The American company Boston Dynamics has announced that it is ending development of the Atlas robot, TechCrunch reports. The firm, which is owned by the Korean corporation Hyundai, spoke about the cancellation of the Atlas project. Boston Dynamics released a farewell video with footage of the humanoid robot, in which it said it was "time for Atlas to retire."

The company did not give a reason for canceling the project, but its representatives said they would use what they had learned when building other robots. According to the journalists, Boston Dynamics did not sell Atlas to commercial companies and therefore probably could not find a way to make money from the robot. It is also possible that Atlas had become outdated compared with newer machines.

Atlas was first unveiled almost 11 years ago. The company released a humanoid robot designed specifically to replace humans in heavy or hazardous industrial work. In the view of TechCrunch's journalists, Boston Dynamics invested millions of dollars in developing the humanoid.

In mid-March it was reported that the German automaker Mercedes-Benz had signed an agreement to use robots. Humanoids from the company Apptronik will work in test mode at one of Mercedes' plants in Hungary.

Images (1):

|

|||||

| Boston Dynamics AI Institute opens site in Zurich - 20 … | https://www.20min.ch/story/roboterfirma… | 1 | Dec 30, 2025 00:03 | active | |

Boston Dynamics AI Institute opens site in Zurich - 20 Minuten
URL: https://www.20min.ch/story/roboterfirma-boston-dynamics-eroeffnet-standort-in-zuerich-850469497868
Description: The renowned US robotics firm is expanding its presence and establishing its first research hub outside the USA in Zurich. Content:

The Boston Dynamics AI Institute is coming to Zurich and opening its first research hub outside the USA. Can the city achieve a success similar to the one it once had with Google?

Zurich's Director of Economic Affairs has expressed her enthusiasm that the Boston Dynamics AI Institute is expanding its presence in her region. Carmen Walker Späh writes: "One of the world's leading players in robotics will establish a development team in our canton." The Greater Zurich Area location marketing association brought the project to Switzerland and supported the move.

The actual announcement was made last week at the Swiss Robotics Days in Zurich. Alongside leading Swiss figures in the robotics industry such as ETH professor Roland Siegwart, Al Rizzi also appeared and gave a keynote. Rizzi is the Chief Technology Officer of the Boston Dynamics AI Institute. In a press release, the institute states "that the team in Zurich will work from early 2024 on developing intelligent, agile, and dexterous robotic systems to be deployed in the most demanding environments." The Zurich site will help the company build connections with talented people, universities, and research organizations in the vibrant European environment. The institute's culture is geared toward combining the best of academic and private research, as Tippinpoint.ch writes.

The Boston Dynamics AI Institute was founded in August 2022 by Marc Raibert. It says it employs 150 full-time staff and 10 visiting professors, who use more than 30,000 square meters of lab and office space at Kendall Square in Boston. The AI Institute is now opening its first research site outside the USA. Zurich's Director of Economic Affairs, Walker Späh, calls this a significant opportunity for Zurich as a center of innovation.

The decision by a firm as renowned as the Boston Dynamics AI Institute in favor of Zurich is, first of all, a considerable boost to the city's image. It remains to be seen, however, to what extent the company will further enliven the already lively robotics scene.

Images (1):

|

|||||

| How to Buy Boston Dynamics Stock - Best Wallet Hacks | https://wallethacks.com/how-to-buy-bost… | 1 | Dec 30, 2025 00:03 | active | |

How to Buy Boston Dynamics Stock - Best Wallet Hacks
URL: https://wallethacks.com/how-to-buy-boston-dynamics-stock/
Description: Investors are racing to buy stock in AI companies creating new and exciting products. Can you buy stock in Boston Dynamics? Learn more. Content:

Best Wallet Hacks, by Josh Patoka. Updated February 22, 2024.

Artificial intelligence (AI) has become a popular theme among investors as companies use technology to turn what was once the subject of sci-fi movies into reality. One such company is Boston Dynamics, whose legged robots can dance, perform athletic tasks, and navigate uneven terrain such as hills and stairs. As more organizations look to use robots with ever-developing capabilities, Boston Dynamics can expect strong revenues after years of research and development. This growth potential has many investors wanting to know how to buy Boston Dynamics stock.

Originally an initiative of the Massachusetts Institute of Technology (MIT), Boston Dynamics began as a robotics and engineering startup in 1992. Today, this robotics pioneer focuses primarily on developing mobile robots for commercial and industrial uses.
Other companies have also provided Boston Dynamics funding and gained an ownership stake. For example, Google is a previous stakeholder, and now Hyundai Motor Group and SoftBank are co-owners. The company eventually began making advanced robots with funding from various U.S. military agencies, including the Defense Advanced Research Projects Agency (DARPA). Those early-stage research and development contracts have helped advance robotics technology to its present state.

Police and fire departments are starting to use the Spot robot dog to inspect suspicious packages and assess high-risk environments. Factories can also use Spot, equipped with cameras and sensors, to inspect the plant for safety issues at regular intervals and within defined parameters. In addition, it was recently announced that new robots from Boston Dynamics would have OpenAI’s ChatGPT capability. So far, these robots are not being sold to the general public, but the company is pursuing consumer applications for when the capabilities improve and the machine is safe to be near children, pets, and the public.

Unfortunately, you cannot buy Boston Dynamics stock, as it’s a privately owned company. However, you can gain exposure indirectly by investing in Hyundai Motor Group (HYMTF) and SoftBank (SFTBF), which own Boston Dynamics and are both publicly traded companies. Let’s take a closer look at these two companies.

Hyundai Motor Group owns an 80% controlling interest in Boston Dynamics, making it the majority owner. It finalized the acquisition from SoftBank in June 2021. In a press release, the Group stated they will “create a robotics value chain, from robot component manufacturing to smart logistics solutions.” This holding company is best known for its Hyundai and Kia personal automobiles. As a result, most of the stock’s performance relies heavily on gas-powered car sales and potentially its success in autonomous vehicles, electric vehicles, and electric batteries.
The South Korea-based company trades as an over-the-counter (OTC) stock in the U.S. stock market under the stock symbol HYMTF. This type of stock doesn’t have as much liquidity as common shares, and brokerages are more likely to charge trading commissions. In addition, not every investing app trades the HYMTF stock ticker. So, for example, you can’t buy Hyundai stock through Robinhood or M1 Finance, which are both easy-to-use platforms for beginner investors. Instead, you will need to use a discount broker like Fidelity, Schwab, or E*TRADE, which offer OTC stocks. Note that you may be charged a commission fee. If you’re comfortable investing directly on foreign stock exchanges through a participating broker, Hyundai trades on the Korea Stock Exchange (KRX) with stock number 005380.

A subsidiary of Japan-based SoftBank owns the remaining 20% of Boston Dynamics. This investment holding company acquired its stake in 2017, when Google sold its entire interest. Through most investing apps, investors can purchase U.S.-listed ADR shares (similar to OTC stocks) of SoftBank Group Corp. through the stock ticker SFTBF. For example, you can buy SFTBF shares on Robinhood, although liquidity can still be thinner than common shares. Consider buying SoftBank shares directly from the Tokyo Stock Exchange with ticker 9984.T for more liquidity.

Boston Dynamics is only a tiny portion of the SoftBank portfolio, which also covers other niches. SoftBank is primarily a telecommunications company with cell phone plans. The SoftBank Vision Fund is a venture capital fund investing in tech startups, including Boston Dynamics, DoorDash, and Uber.

Private companies like Boston Dynamics raise capital through late-stage startup investing platforms. These funding rounds help the company grow until it goes public, at which point angel investors can sell their privately held shares. You must be an accredited investor to participate.
If you’re eligible for these platforms, consider investing in robotics offerings as they become available. Until Boston Dynamics goes public, consider investing in the following companies if you want to profit from smart robots. Note that a stock analyzer can help you complete your due diligence to understand a stock’s risks, rewards, and how it might fit into your investment strategy.

iRobot (Nasdaq: IRBT) holds the largest market share for consumer-focused robots. The producer’s Roomba robot vacuums are the most recognized consumer product. Customers can also add robotic mops and air purifiers to their home cleaning system. These machines don’t perform the same functions as the Boston Dynamics robots, but they help solve customer needs for daily tasks.

Rockwell Automation (NYSE: ROK) produces industrial automation components and controls. These devices and machines increase productivity and contain smart sensors that provide real-time updates and alerts. Rockwell’s machines are mostly stationary, unlike two-legged or four-legged roving robots that can move within certain boundaries.

Nvidia (Nasdaq: NVDA) is an artificial intelligence darling that provides computer chips and graphics processing units for many AI-powered devices. Boston Dynamics is one of its many customers, so the share price could increase when demand for high-tech chips is strong.

A sector ETF such as Global X Artificial Intelligence & Technology ETF (Nasdaq: AIQ) provides instant exposure to multiple companies offering AI services. Its 0.68% expense ratio is higher than most broad market index funds but slightly lower than competing funds. If you want more diversity to manage risk, consider this investment option, as picking individual stocks can be riskier. Additionally, the artificial intelligence industry is relatively young, and knowing which companies will be successful is challenging.
Some of the fund’s holdings are direct competitors that might be developing similar technology, although with different applications. Other companies like Amazon may use AI to improve efficiency and automate more processes.

Boston Dynamics doesn’t have a stock symbol, as it’s a private company. The company hasn’t filed to become a publicly traded company. As of June 2023, there are no indications that Boston Dynamics is taking steps toward an initial public offering (IPO). For now, investors wanting exposure to AI should consider other stocks or sector ETFs.

Hyundai Motor Group has an 80% controlling interest and states that Boston Dynamics operates as an independent business within the company’s broader portfolio. A SoftBank affiliate owns the remaining 20%. Previously, Google (now part of Alphabet) owned a controlling stake in the company from 2013 until 2017. SoftBank held a 100% controlling interest from 2017 until 2021, when Hyundai purchased an 80% interest.

Boston Dynamics doesn’t release financial data, as it’s a private company. During the 2020-2021 acquisition by Hyundai, the deal valued the robotics company at $1.1 billion. It’s probable that the company will be worth more in 2023, as it has more investor interest and product sales.

There are no risk-free investments, and investing in Boston Dynamics carries several risks. First, it’s a private company that holds a tiny position in the holdings of Hyundai (HYMTF) or SoftBank (SFTBF). The price performance of either stock is more correlated with other business ventures than with robots. Also, many remain cautious about artificial intelligence ethics, as there are concerns that law enforcement or military agencies can use robots to harm or target individuals. Boston Dynamics and several other leading AI companies have pledged not to sell units to buyers who intend to use the robot as a weapon or for harmful purposes.
While Boston Dynamics remains a private company with no sign of going public soon, you can gain indirect exposure by purchasing shares in the two companies that own it (Hyundai Motor Group and SoftBank). Your other option is to invest in the broader tech sector through an exchange-traded fund and wait patiently for Boston Dynamics to go public, if it ever happens. Regardless of your decision, it’s important to remember that AI companies make up a small part of the overall market and that portfolio diversification should always be a priority when deciding where to invest.

Images (1):

|

|||||

| Tutor Raises $34 Million to Teach Warehouse Robots | https://www.pymnts.com/news/investment-… | 1 | Dec 29, 2025 16:00 | active | |

Tutor Raises $34 Million to Teach Warehouse Robots
Content:

Tutor Intelligence, which makes AI-powered robots for warehouse work, has raised $34 million in new funding. The company will use the new capital to speed commercialization of its robots, scale its consumer packaged goods (CPG) fleet and advance its central robot intelligence platform and research infrastructure, according to a Monday (Dec. 1) news release. “Tutor stands out for its extraordinary speed of execution and its ability to balance cutting-edge product and model development with a clear commercial focus that quickly gets this functionality into customers’ hands,” Rebecca Kaden, managing partner at Union Square Ventures, which led the funding round, said in the release. “They’re not building for an abstract future; they’re transforming how CPG companies operate today.” Founded out of MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL), Tutor Intelligence’s robots work alongside human operators to process goods for a “vast Fortune 50 supply chain network,” the company said in the release. It also works with multiple Fortune 500 packaged food companies, and “global leaders” in the personal care, toys, home goods, beauty and consumer technology spaces. “When we started Tutor Intelligence nearly five years ago as grad students at MIT, we saw that the robotics intelligence bottleneck was the key barrier to robotic worker viability,” said Josh Gruenstein, the company’s co-founder and CEO. “We built a system that leverages on-the-job data to teach robots to navigate and understand the physical world with human-like intuition. 
This new capital enables us to expand our fleet, scale our robot training infrastructure, and empower our robots to tackle increasingly complex tasks, reshaping industrial work as we know it.” The funding comes at a time when, as covered here last month, physical artificial intelligence (AI) is emerging as the next stage of robotics. Earlier robots followed fixed commands and worked only in predictable environments, having trouble with the unpredictability found in everyday operations such as shifting layouts, mixed lighting, and human movement. “That is beginning to change as research groups show how simulation, digital twins and multimodal learning pipelines enable robots to learn adaptive behaviors and carry those behaviors into real facilities with minimal retraining,” PYMNTS wrote. Amazon’s launch of its Vulcan robot is one of the clearest examples of physical AI moving from research to frontline operations. This robot uses both vision and touch to pick and stow items in the company’s fulfillment centers, letting it handle flexible fabric storage pods and unpredictable product shapes.

Images (1):

|

|||||

| HIT Shenzhen Team Develops Multimodal Large Model 'JiuTian', Tops OpenCompass … | https://pandaily.com/hit-shenzhen-team-… | 1 | Dec 29, 2025 16:00 | active | |

HIT Shenzhen Team Develops Multimodal Large Model 'JiuTian', Tops OpenCompass Ranking - Pandaily
Description: The first multimodal large-scale model 'JiuTian' has topped the OpenCompass multimodal large-scale model ranking upon its debut evaluation.
Content:

The team at the Computing and Intelligence Research Institute of Harbin Institute of Technology (Shenzhen), relying on Shenzhen Hashen Asset Management Co., Ltd. to commercialize its research, has established a multimodal large-scale model development enterprise: Shenzhen Ruoyu Technology Co., Ltd. (abbreviated as 'Ruo Yu Technology'). The first multimodal large-scale model 'JiuTian' under Shenzhen Ruoyu Technology Co., Ltd. has topped the OpenCompass multimodal large-scale model ranking upon its debut evaluation. '123 billion parameters', '120 million image-text pairs', '5.5 million bilingual language samples', '1.2 million fine-tuning data samples', '500,000 reinforcement data samples'... The increase in these core figures brings about a qualitative change in the model's capabilities. The JiuTian multimodal large-scale model has achieved remarkable performance in logical reasoning, relational reasoning, and perceptual abilities. With more than a hundred billion parameters, JiuTian has achieved multimodal fusion of text, images, audio, and video. Its intelligent understanding and response capabilities not only cover fields such as natural language processing, computer vision, and speech recognition but also effectively break down the information barriers between different modalities, integrating them into a unified 'JiuTian'. ''JiuTian' symbolizes the highest celestial realm in ancient Chinese mythology, representing our boundless pursuit of technological progress and longing for an intelligent future. This model transcends the boundaries of modalities such as text, images, audio, and video with its powerful understanding and responsive capabilities, achieving true multimodal fusion.' Dr. 
Sun Teng, CEO of Ruoyu Technology, explained: 'By finding bridges that connect various fields in a disordered and fragmented information world, integrating information from different domains such as natural language processing, computer vision, and speech recognition breaks down the information silos between modalities and truly achieves orderly flow and communication of information.' Harbin Institute of Technology Shenzhen Campus has established an asset joint-stock company to encourage faculty and staff to turn their research into practical applications. HIT (Shenzhen) receives policy support for the integration of production, education, and research. Shenzhen Ruoyu Technology Co., Ltd. was established from the beginning with the school as an initial shareholder, which provides strong support for the company's development. Recently, the well-known magazine IEEE Intelligent Systems announced its list of 'AI's 10 to Watch' for the year 2022. Professor Nie Liqiang was included in this list due to his contributions in the field of multimodal research. Professor Nie is a recipient of the DAMO Academy Qingcheng Award and TR35 China Award. He stated that the achievements of Harbin Institute of Technology (Shenzhen) in the field of artificial intelligence should not only exist within laboratories but also be transformed into practical applications to serve national defense, aerospace, and society. Another AI expert among the co-founders of Ruoyu Technology Co., Ltd. is Professor Zhang Min. Professor Zhang is the Assistant President of Harbin Institute of Technology (Shenzhen), the first distinguished young scholar in the NLP field in China, a national "Top Talent" recipient, a mid-career expert with outstanding contributions recognized by the state, and a recipient of special allowances from the State Council. 
Harbin Institute of Technology ranks first among Chinese research institutions in NLP according to CSRankings (2022-2023), an authoritative ranking list in computer science. Professor Zhang is the most influential person at Harbin Institute of Technology in this field. Dr. Sun Teng, co-founder and CEO of Ruoyu Technology Co., Ltd., is also a core expert in the company's research and development team. Dr. Sun's research has always focused on multimedia computing, with related achievements published in CCF A-class conferences and IEEE/ACM Trans. Dr. Sun has previous successful entrepreneurial experience and possesses full-process experience in the application of artificial intelligence technology in vertical fields as well as company management expertise. Geng Chen, another co-founder of Ruoyu Technology Co., Ltd., serves as the company's strategic advisor. He has been repeatedly recognized as the best technology analyst by New Fortune magazine and has accumulated rich industry resources over his years in research. He is responsible for investment and financing activities as well as connecting the company with industrial resources for deployment. "Ruoyu Technology Co., Ltd. was established at this time with its own historical mission and ideals. As cutting-edge researchers, we deeply feel the transformative impact of artificial intelligence on future society. The productivity explosion brought by generative AI will redefine production relationships in various industries. It is our honor and mission to have the opportunity to participate in it." Computing power, data, and talent are the three major barriers to entering the field of large-scale models, and Ruoyu Technology Co., Ltd. has gathered these core elements from its inception. The in-house research and development team, led by top talents, has formed independent iterative capabilities. 
In the future, under the leadership of technical experts, 'JiuTian' will continue to iterate. With a top-notch entrepreneurial team, core capabilities in self-developed multimodal large models, and successful practical experience, Ruo Yu Technology says it will bring a touch of brilliance to the 'Battle of Hundred Models'. Reshaping every sector on the foundation of large-scale model capabilities has become an industry consensus. According to OpenAI's development path, when models reach a certain size, new abilities will emerge, especially some previously unseen capabilities. On whether JiuTian will continue to iterate in the future, Dr. Sun Teng said that 'JiuTian' is still iterating in both directions, larger and smaller. On one hand, it is increasing the scale of parameters to explore the points at which universal multimodal large-model capabilities emerge. On the other hand, in order to meet the application needs of industry users and achieve maximum effects with minimal computing power, it is necessary to compress large models into lightweight ones and combine them with edge computing devices. Based on the multimodal framework of 'JiuTian', Ruo Yu Technology's business model differs fundamentally from that of the AI 1.0 era. In the past, the business model required redeveloping algorithms for each specific demand, operating on a project basis. With 'JiuTian' as a unified multimodal foundation, there is no need to redesign the framework; only minor adjustments based on different industry data are necessary to obtain corresponding industry models. Customers can even make secondary adjustments themselves according to their specific domain requirements using their own data. The difficulty of multimodal large models lies in the fusion of multimodal information. Common fusion methods include linear addition, cascading, and other relatively crude means. 
However, the final effect is often not as impressive as that of a single modality. This is because some technical teams lack experience and capabilities in fine-tuning multimodal data, integrating and aligning multimodal features. JiuTian has a fully integrated model training framework for autonomous development of multimodal feature extraction, alignment, fusion, and inference, as well as a comprehensive and meticulous process for collecting and cleaning multimodal data. The model's top ranking on the multimodal large-scale model list proves the team's leading capabilities in the field of multimodal large-scale models. Robots are system-level application products in the industrial field, and they are a key direction empowered by the multimodal large model base of 'Ruo Yu-Jiu Tian'. Harbin Institute of Technology currently has deep industry-academia-research accumulation in the field of robotics. In the future, embodied robots will require the fusion of multimodal information such as speech, vision, decision-making, and control to form a closed loop. The multimodal large model base of 'JiuTian' will further integrate research based on Harbin Institute of Technology's accumulated expertise in robotics and has already established deep cooperation with several large consumer electronics/automotive companies. With the 'JiuTian' multimodal large model base, Ruo Yu Technology has the ability to provide personalized and customized services for users in different fields through fine-tuning of existing multimodal large model bases. It provides capabilities such as language pre-training large models, multimodal pre-training large models, and vertical domain pre-training large models, aiming to build a future AI general-purpose platform and infrastructure. Pandaily is a tech media based in Beijing. Our mission is to deliver premium content and contextual insights on China's technology scene to the worldwide tech community. © 2017 - 2025 Pandaily. 
All rights reserved.

Images (1):

|

|||||

| 2026 AI Trends: Multimodal Models, Agents, and Quantum Tech Transform … | https://www.webpronews.com/2026-ai-tren… | 1 | Dec 29, 2025 16:00 | active | |

2026 AI Trends: Multimodal Models, Agents, and Quantum Tech Transform Industries
Description: Keywords
Content:

In the fast-evolving world of technology, 2026 promises to be a pivotal year where artificial intelligence moves beyond hype into tangible, enterprise-level impact. Industry leaders are shifting from pilot projects to full-scale deployments, driven by advancements in AI infrastructure and multimodal models. According to insights from Deloitte Insights, successful organizations are leveraging these tools to transition from experimentation to measurable outcomes, emphasizing the integration of AI with existing systems for strategic advantages. This shift is not just about adopting new tools but rethinking business models entirely. For instance, AI-powered decision-making is combining with Internet of Things (IoT) and blockchain to enable real-time analytics and secure data sharing. Posts on X highlight how these integrations are expanding AI’s role from operational support to core strategic planning, with examples like multilingual generative AI enhancing global operations. Meanwhile, the push for sustainability is intertwining with tech innovations, as companies invest in green technologies to meet regulatory demands and consumer expectations. Reports indicate that bio-based materials and decentralized renewable energy sources are gaining traction, positioning them as key growth areas in the post-2025 era.

AI’s Expanding Dominion in Enterprise Strategy

The rise of agentic AI, where systems autonomously perform tasks and make decisions, is set to redefine workflows across sectors. Simplilearn outlines how emerging technologies like this are shaping job markets and innovation pipelines, predicting a surge in demand for skills in AI governance and ethical implementation. In parallel, multimodal AI models that process text, images, and audio simultaneously are enabling more sophisticated applications, from advanced healthcare diagnostics to personalized consumer experiences. 
This convergence is particularly evident in telemedicine platforms and mental health apps, which are leveraging AI for proactive interventions. Challenges remain, however, including the need for robust data privacy measures and addressing biases in AI systems. Industry insiders note that as AI integrates deeper into critical sectors like healthcare and finance, regulatory frameworks will evolve to ensure accountability without stifling progress.

Quantum Leaps and Neuromorphic Computing on the Horizon

Quantum computing is another frontier gaining momentum, with potential to solve complex problems in drug discovery and financial modeling at unprecedented speeds. The World Economic Forum lists it among the top emerging technologies for 2025, extending into 2026, highlighting its role in accelerating scientific breakthroughs. Neuromorphic computing, mimicking the human brain’s efficiency, is emerging as a solution to the energy demands of traditional AI hardware. Juniper Research identifies this as a trend to watch, noting its potential to enable physical AI: robots and devices that learn and adapt in real-world environments. This technology is particularly promising for edge computing, where low-power, high-efficiency processing is crucial. Innovations in this area could revolutionize industries like manufacturing, with AI-driven diagnostics and 3D printing for on-demand production reducing waste and costs.

Sustainability Drives Tech Innovation Waves

The intersection of technology and environmental responsibility is creating new opportunities in renewable energy and circular economies. Decentralized systems powered by blockchain are facilitating peer-to-peer energy trading, as seen in emerging agri-tech solutions that optimize resource use. McKinsey ranks sustainability-focused tech among the top trends, emphasizing how companies are using AI to monitor and reduce carbon footprints in supply chains. 
This includes predictive analytics for energy consumption in data centers, which are exploding in number due to AI demands. Posts on X underscore the investment potential in AI infrastructure, with cloud giants like Microsoft and Amazon ramping up monetization efforts. These developments are not without hurdles, as the energy requirements of massive data centers raise concerns about grid stability and environmental impact.

Blockchain’s Role in Secure Digital Ecosystems

Beyond cryptocurrencies, blockchain is evolving into a foundational technology for secure, transparent transactions across industries. Its integration with AI and 5G is enabling innovations in supply chain management and digital identities, reducing fraud and enhancing traceability. In healthcare, blockchain-secured data sharing is improving patient outcomes by allowing seamless, privacy-protected access to records. SciTechDaily reports on breakthroughs in biotechnology that leverage these secure frameworks for collaborative research. The automotive sector is also benefiting, with concepts in electric vehicles (EVs) incorporating blockchain for battery lifecycle management and smart contracts for autonomous vehicle interactions. As the industry navigates hybrid and battery-electric transitions, these technologies ensure efficiency and compliance.

The Surge of Physical AI and Robotics

Physical AI, where intelligent systems interact with the physical world, is poised for significant advancements. Robots equipped with neuromorphic chips are becoming more autonomous, capable of learning from environments without constant human oversight. TechTarget discusses trends like this in machine learning, predicting widespread adoption in logistics and healthcare by 2026. For example, AI-driven robotics in surgery could enhance precision and reduce recovery times. Challenges in scalability and ethical deployment are prompting discussions on governance. 
Industry experts on X emphasize the need for open-source AI to foster competition and innovation, especially in geopolitical contexts like U.S.-China tech rivalries.

Navigating Geopolitical Influences on Tech Progress

Global tensions are influencing tech development, with calls for open-source initiatives to counter proprietary dominance. Elon Musk’s ventures, often highlighted in media, exemplify how individual leaders can shape federal policies on AI and space tech. WIRED reflects on 2025’s key stories, including AI data center expansions and political takeovers, projecting similar dynamics into 2026. This includes debates over post-quantum cryptography to secure communications against future threats. Investment themes on X point to digital banks and AI infrastructure as high-growth areas, with cloud providers leading the charge. These trends suggest a maturing market where profitability drives innovation rather than speculation.

Innovations in XR and Foldable Devices

Extended reality (XR) technologies are blending virtual and augmented realities for immersive experiences in education and training. The mobile tech sector is seeing revolutions with tri-fold devices and ultra-thin designs, as noted in recent analyses. Android Central details how 2025’s foldables and AI integrations are fundamentally changing user interactions, with 2026 expected to build on this by incorporating more seamless AI assistants. In consumer electronics, these innovations are driving competition, with companies like Samsung and Apple pushing boundaries in hardware that supports advanced software ecosystems. The result is a more connected, intuitive user experience that blurs lines between devices.

Healthcare Transformations Through Tech Integration

AI’s role in healthcare is expanding rapidly, from predictive diagnostics to personalized medicine. Multimodal models are analyzing vast datasets to identify patterns in diseases, accelerating drug development. 
Jagran Josh lists breakthroughs like autonomous agents in medical research, which are set to define 2026’s advancements. Telemedicine platforms enhanced by AI are making healthcare more accessible, especially in remote areas. Ethical considerations, such as data sovereignty and bias mitigation, are critical. Regulatory bodies are stepping in to ensure these technologies benefit society equitably, balancing innovation with public trust.

The Future of Work in an AI-Driven World

As AI automates routine tasks, the workforce is shifting toward roles requiring creativity and strategic thinking. Remote work norms, amplified by digital tools, are becoming standard, as per startup trends discussed on X. Capgemini explores how these changes are driving industry transformation, with a focus on upskilling programs to prepare employees for an AI-first environment. Investments in synthetic biology and longevity research are opening new frontiers, potentially extending human capabilities and creating markets in bio-based innovations. This holistic approach ensures technology enhances human potential rather than replacing it.

Strategic Investments and Market Dynamics

Venture capital is flowing into AI infrastructure, with hyperscalers projecting massive capital expenditures. Posts on X from investors highlight profitable growth in cloud revenues, underscoring the economic viability of these technologies. Firstpost recaps 2025’s defining innovations, noting AI’s dominance alongside breakthroughs in EVs and biotechnology, setting the stage for 2026’s expansions. Companies like Tesla and Amazon exemplify how innovation leads to market leadership, with revenue growth tied to tech adoption. As 2026 unfolds, the focus will be on scalable, impactful solutions that address real-world challenges.

Emerging Sectors and Long-Term Visions

New sectors like advanced waste management and micro-factories are emerging, driven by 3D printing and AI optimization. 
These areas promise sustainable growth, reducing environmental footprints while creating jobs. Popular Science celebrates 2025’s greatest innovations, including automotive shifts toward hybrids and EVs, which will influence 2026’s transportation tech. Ultimately, the tech environment in 2026 will be defined by interconnected innovations that prioritize impact over novelty. Industry insiders must navigate these trends with foresight, investing in technologies that align with broader societal goals for enduring success.

Images (1):

|

|||||

| NEO, the World's First Native Multimodal Architecture, Launches, Achieving Deep Vision-Language … | https://pandaily.com/neo-the-world-s-fi… | 1 | Dec 29, 2025 16:00 | active | |

NEO, the World's First Native Multimodal Architecture, Launches, Achieving Deep Vision-Language Fusion and Breaking Industry Bottlenecks - Pandaily
Description: SenseTime and Nanyang Technological University have unveiled NEO, the world's first scalable, open-source native multimodal architecture that fundamentally fuses vision and language.
Content:

On December 5, 2025, SenseTime, together with Nanyang Technological University and other research teams, released NEO, the world's first scalable, open-source native multimodal architecture (Native VLM), breaking free from the limitations of traditional modular "assembly-style" models and marking the arrival of a new era of true multimodal fusion. Unlike mainstream modular models such as GPT-4V or Claude 3.5, NEO discards the conventional "vision encoder + projection layer + language model" pipeline and instead builds a unified multimodal "brain". Its breakthroughs stem from three native technologies. Real-world evaluations show that NEO matches top models such as Qwen2-VL and InternVL3 on visual tasks (including AI2D and DocVQA) using only 390 million image-text pairs, just one-tenth of the data used by comparable models. On benchmarks like MMMU and MMBench, NEO outperforms other native VLMs in overall capability. Its 2B to 8B parameter models deliver exceptional inference cost-efficiency, making them suitable for mobile devices, robots, and other edge scenarios. SenseTime has already open-sourced the 2B and 9B versions of NEO and plans to extend the architecture to video understanding, 3D interaction, and more. This new framework not only introduces a fresh paradigm for multimodal AI, but also accelerates the shift of advanced AI from the cloud to edge devices, representing a significant contribution by Chinese researchers to global AI architecture innovation.

Images (1):

|

|||||

| China AI helps humanoid robots handle more objects with less … | https://interestingengineering.com/ai-r… | 1 | Dec 29, 2025 16:00 | active | |

China AI helps humanoid robots handle more objects with less training
URL: https://interestingengineering.com/ai-robotics/humanoids-complete-household-tasks-with-less-training
Description: Chinese researchers unveil RGMP, a data-efficient AI framework boosting humanoid robots’ grasping skills and generalization.
Content:

RGMP helps humanoids adapt quickly to new environments, enabling them to perform household chores without additional training. Researchers in China have introduced a new AI framework designed to enhance humanoid robot manipulation. According to researchers at Wuhan University, RGMP (recurrent geometric-prior multimodal policy) aims to improve grasping accuracy across a broader range of objects and enable robots to perform more complex manual tasks. Unlike many data-driven methods that rely on large training datasets, RGMP incorporates geometric reasoning to boost generalization in new or unpredictable environments. The framework achieves 87 percent generalization and is 5 times more data-efficient than leading diffusion-based models, combining spatial reasoning with efficient learning. The researchers say the framework could be a step toward more adaptable and capable humanoid systems. 
For humanoid robots to operate independently, they must reliably handle multiple objects across different environments. Current machine learning models often work well only when the robot operates in settings similar to those used during training. These systems rely heavily on large datasets and do not fully use geometric reasoning or spatial awareness, making it difficult for robots to adapt in new situations. Vision-language models can understand instructions but often struggle to link them with the correct actions, especially when object shapes or contexts vary. According to researchers, other approaches, like diffusion or imitation learning, require many demonstrations and still fail to generalize. This raises two key questions: how robots can reason about object geometry and how they can learn effectively with fewer examples. To address limitations in current robot manipulation systems, the team developed RGMP, a new end-to-end framework that combines geometric reasoning with efficient learning. The first part, called the Geometric-prior Skill Selector (GSS), helps the robot choose the correct action based on an object’s shape and task requirements, much as humans decide whether to grasp, pinch, or push. It uses simple geometric rules and works even in new environments. The second part, the Adaptive Recursive Gaussian Network (ARGN), improves learning from small datasets by storing and updating spatial memory. It models the robot’s interactions with objects over time, thereby avoiding vanishing gradients. Together, these components help robots generalize better and handle more complex tasks with fewer training examples. The team tested the RGMP framework to assess its performance and generalization. Experiments were carried out on two types of robots: a humanoid system and a desktop dual-arm robot equipped with cameras and 6-DoF arms. 
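As a rough illustration of what rule-based skill selection from object shape can look like, here is a minimal Python sketch. It is not the Wuhan University team's GSS implementation: the `ObjectGeometry` type, the `select_skill` function, and both thresholds are hypothetical and chosen only to show the idea of mapping simple geometric measurements to a grasp, pinch, or push decision.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ObjectGeometry:
    """Bounding-box size of an object in metres (hypothetical input format)."""
    width: float
    depth: float
    height: float


# Illustrative thresholds only; they are assumptions, not values from the RGMP work.
MAX_GRIPPER_OPENING_M = 0.08  # widest span the gripper is assumed to close around
PINCH_THICKNESS_M = 0.015     # anything thinner than this is treated as flat


def select_skill(geometry: ObjectGeometry) -> str:
    """Choose a manipulation skill from simple geometric rules."""
    narrowest_side = min(geometry.width, geometry.depth)
    if narrowest_side > MAX_GRIPPER_OPENING_M:
        # The gripper cannot enclose the object from any side, so move it by pushing.
        return "push"
    if min(geometry.width, geometry.depth, geometry.height) < PINCH_THICKNESS_M:
        # Thin or flat objects are picked up between the fingertips.
        return "pinch"
    # Everything else fits inside the gripper and gets a full grasp.
    return "grasp"


if __name__ == "__main__":
    examples = {
        "can": ObjectGeometry(0.06, 0.06, 0.12),
        "card": ObjectGeometry(0.085, 0.054, 0.001),
        "box": ObjectGeometry(0.30, 0.20, 0.20),
    }
    for name, geometry in examples.items():
        print(name, select_skill(geometry))
```

Because the rules depend only on measured dimensions and not on learned appearance features, a selector of this kind needs no retraining when the scene changes, which is the property the article attributes to geometric priors.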
A dataset of 120 demonstration trajectories was used, and performance was measured through two metrics: selecting the correct skill and executing it accurately. RGMP was compared with leading models, including ResNet50, Diffusion Policy, Octo, OpenVLA, and others. The results show RGMP performed better across multiple manipulation tasks, including unseen objects and new environments. Researchers claim the GSS module improved skill selection by up to 25 percent, while ARGN and Gaussian modeling improved execution accuracy. The system also required far fewer training samples, achieving high performance with just 40 examples compared to the 200 needed by baseline models, demonstrating strong efficiency and adaptability. The team highlights that by linking skills to object context and breaking 6-DoF motions into Gaussian components, the system improves efficiency and generalization. RGMP achieves 87 percent generalization accuracy and uses 5 times less data than the Diffusion Policy during human-robot interaction tests. The results show that integrating symbolic reasoning with learning improves adaptability across new objects and environments. Future research will focus on enabling robots to infer actions for new objects after learning just one example. The Wuhan University team’s research details are available on the arXiv preprint server. Jijo is an automotive and business journalist based in India. Armed with a BA in History (Honors) from St. Stephen's College, Delhi University, and a PG diploma in Journalism from the Indian Institute of Mass Communication, Delhi, he has worked for news agencies, national newspapers, and automotive magazines. In his spare time, he likes to go off-roading, engage in political discourse, travel, and teach languages.

Images (1):

|

|||||

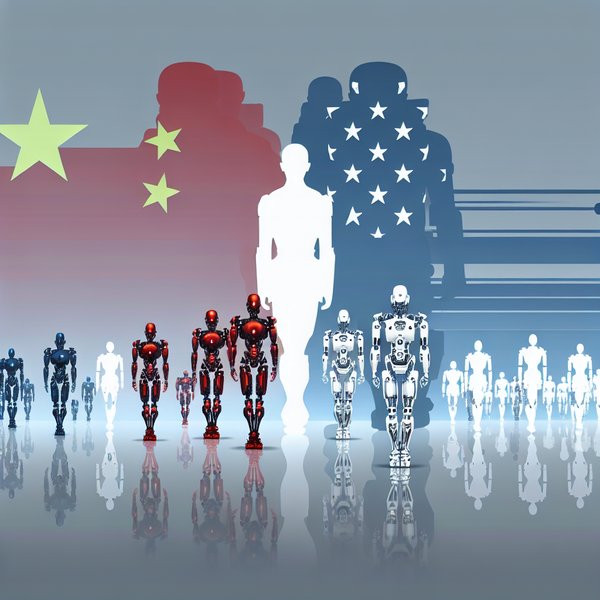

| China Recap \| AI and robots take center stage | https://kr-asia.com/china-recap-ai-and-… | 1 | Dec 29, 2025 16:00 | active | |

China Recap | AI and robots take center stage URL: https://kr-asia.com/china-recap-ai-and-robots-take-center-stage Description: New breakthroughs buttress the view that China is drawing closer to US capabilities. Content:

Written by Vicky Chang. Published on 15 Sep 2025. 3 mins read.

China Recap is a weekly roundup tracking Chinese companies expanding abroad, covering market entries, funding rounds, product launches, and global partnerships. China's corporate globalization strategy is evolving fast. Industry giants are rewriting the global playbook, while a new generation of companies charts fresh paths overseas. China Recap tracks both, focusing on strategic expansion, brand building, and localized operations, to help readers make sense of shifting trends and understand how Chinese firms are reshaping their global approach.

This edition highlights China's advances in artificial and embodied intelligence, as humanoid robots move toward commercialization and AI infrastructure and applications expand in function and capacity.

Meituan has launched Xiaomei, an app powered by its LongCat large model, to strengthen its food delivery and local services. Xiaomei uses AI agents to let users order meals by voice and book restaurants. Shares rose in Hong Kong after the rollout, which comes as Alibaba and JD.com escalate competition in China's delivery market. (Bloomberg)

UBTech Robotics has won a RMB 250 million (USD 35 million) contract for its Walker S2 humanoid robots, which it described as the "world's largest order" of its kind. Deliveries will begin this year, and the deal includes operational support services. The Hong Kong-listed firm did not name the client. (Nikkei Asia)

Alibaba and Baidu have reportedly begun using self-designed chips to train AI models, partly replacing Nvidia hardware. Alibaba has applied its chips to smaller models, while Baidu is testing its Kunlun P800 for Ernie. The shift reflects US export curbs and Beijing's push for tech self-sufficiency, though both firms still rely on Nvidia for top-tier models. (The Information)

Ant Group has unveiled its first humanoid robot, R1, at a Shanghai tech conference, highlighting its shift toward AI-powered robotics. Designed to handle tasks from cooking to medical assistance, the R1 runs on Ant's in-house large model and reflects its strategy to scale AI assistant use across China. (Bloomberg)

China has reportedly narrowed its gap in AI development with the US to about three months, driven by rapid iteration, open-source model advances, and strategic chip reserves, according to CITIC CLSA. However, it still lags in advanced semiconductor production, which remains a longer-term hurdle that constrains progress in cutting-edge AI capabilities. (SCMP)

Unitree Robotics is reportedly preparing to file for an IPO in the final quarter of 2025, targeting a valuation of up to RMB 50 billion (USD 7 billion). Already profitable and active in factory deployments, Unitree could become one of the first humanoid robotics firms to go public. (CNBC)

ByteDance's Seed team has released Seedream 4.0, a multimodal AI model for text-to-image generation and editing. It supports 4K output, faster inference, visual signal control, and in-context reasoning. Now live via Dreamina, Doubao, and Volcano Engine, the unified system has shown notable performance across creative tasks.

Tencent has released its AI CLI tool, CodeBuddy Code, and launched the global open beta of CodeBuddy IDE. The move positions Tencent Cloud as China's first provider to support AI-driven coding across plugin, IDE, and CLI formats. Developers can use natural language in the terminal to automate tasks like refactoring, testing, and deployment. (IT Zhijia)

Alibaba Cloud has led a RMB 1 billion (USD 140 million) Series A+ funding round for Shenzhen-based X Square Robot, marking its first investment in embodied intelligence. Other investors that took part include CAS Investment, China Development Bank Capital, HongShan, Meituan, and Legend Capital. The funds will support training of foundation models and hardware development. (SCMP)

That wraps up this edition of China Recap. If your company is expanding internationally, we'd love to hear about your latest milestones. Get in touch to share your story.

Images (1):

|

|||||

| From Navigation to Cognition: Building a Multimodal AI Robot - … | https://www.hackster.io/HiwonderRobot/f… | 1 | Dec 29, 2025 16:00 | active | |

From Navigation to Cognition: Building a Multimodal AI Robot - Hackster.io Description: MentorPi melds SLAM navigation and multimodal AI to explore and describe its world through natural commands. Find this and other hardware projects on Hackster.io. Content:

Have you ever imagined owning a robot that not only follows commands but truly understands what you want to explore? Traditional robots might get you from point A to point B, but what if one could genuinely see the world around it and converse with you like a partner?

Meet MentorPi, an open-source robotic platform built on the Raspberry Pi 5 and ROS 2. It's far more than just a SLAM navigation rover; it's an intelligent agent deeply integrated with large multimodal AI models (language, vision, speech), merging precise low-level motion control, robust environmental perception, and high-level cognitive reasoning into a single, hands-on system.

Imagine saying to it: "Hey Mentor, first go to the zoo and see what animals are there; then head to the supermarket to check out what fruits are available; finally, take me to the soccer field for a game." In traditional human-robot interaction, executing this seamlessly is nearly impossible, because the command contains three distinct layers of tasks. MentorPi accomplishes this coherently, thanks to the synergy between semantic understanding from large models and its SLAM navigation system.

1. Task Comprehension & Planning: Voice commands are captured and converted to text. A large language model deconstructs the natural language instruction, extracting the three locations and their associated visual tasks to generate a structured mission queue.
2. Autonomous Navigation & Obstacle Avoidance: The SLAM system (using LiDAR and a prior map) handles point-to-point navigation. Orchestrated by ROS 2, the robot plans optimal paths, moves reliably, avoids obstacles, and reaches each target area in sequence.
3. Visual-Semantic Understanding: Upon arrival, the large vision model activates, scanning the scene via a 3D depth camera. At the zoo, it doesn't just detect "animals" but provides a detailed description: "The scene includes models of a giraffe, kangaroo, tiger, etc." At the supermarket, it focuses on identifying fruits, reporting: "Various fruits are available, such as apples, bananas, grapes, and oranges. You can choose based on preference." This represents an evolution from merely "seeing" to "comprehending" the scene's semantic content.
4. Task Completion & Closure: Arriving at the soccer field, the robot confirms the user's intent is satisfied, reporting "Arrived at the soccer field, ready to play!" and closing the task loop.

MentorPi is not just a demo; it's a fully open-source platform designed for learning and development:

- Open Hardware: Based on Raspberry Pi 5 and ROS 2, it's highly extensible and compatible with various sensors.
- Flexible AI Integration: Supports either local lightweight models or cloud-based AI APIs (like GPT-4V), allowing you to balance performance and cost.
- Modular Design: Clear separation between SLAM, voice interaction, visual recognition, and navigation modules makes debugging and customization easier.
- Learning-Friendly: An ideal platform for advancing your skills in robotics, SLAM, 3D vision, human-robot interaction, and AI integration.

MentorPi's breakthrough lies in its deep fusion of precise spatial positioning from SLAM ("where am I") with rich semantic understanding from AI models ("what is here, what is this place"). This transforms the robot from a simple tool executing "go to coordinates (x, y)" into a responsive "exploration partner" that interacts meaningfully with its environment.

We believe the future of robotics lies not in faster movement or more precise grasping, but in how well robots understand our world and how naturally we can collaborate with them. MentorPi is our practical step in that direction, and we hope it becomes a starting point for more developers, students, and enthusiasts to enter the exciting field of Embodied AI. Let's turn robots from mere tools into curious extensions for exploring the world around us.
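
The MentorPi flow described above (parse the instruction, navigate, describe the scene, close the task) can be sketched as a structured mission queue. This is a minimal illustration under stated assumptions, not MentorPi's actual code. `Mission`, `parse_instruction`, `run_missions`, and the `KNOWN_PLACES` keyword table are hypothetical names, and the keyword lookup stands in for the large language model. The `navigate_to` and `describe_scene` callables are stubs for what would be ROS 2 navigation and a vision model in a real system.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable, Deque, List, Optional


@dataclass
class Mission:
    location: str
    visual_task: Optional[str]  # what to look for on arrival; None means just arrive


# Hypothetical stand-in for the language model: known places and their visual tasks.
KNOWN_PLACES = {
    "zoo": "animals",
    "supermarket": "fruits",
    "soccer field": None,
}


def parse_instruction(text: str) -> Deque[Mission]:
    """Build a mission queue, ordered by where each place appears in the command."""
    lowered = text.lower()
    hits = [(lowered.find(place), place) for place in KNOWN_PLACES if place in lowered]
    return deque(Mission(place, KNOWN_PLACES[place]) for _, place in sorted(hits))


def run_missions(
    queue: Deque[Mission],
    navigate_to: Callable[[str], None],
    describe_scene: Callable[[str, str], str],
) -> List[str]:
    """Visit each location in order and collect one spoken report per stop."""
    reports = []
    while queue:
        mission = queue.popleft()
        navigate_to(mission.location)  # stub for SLAM-based navigation
        if mission.visual_task is None:
            reports.append(f"Arrived at the {mission.location}.")
        else:
            reports.append(describe_scene(mission.location, mission.visual_task))
    return reports


if __name__ == "__main__":
    command = (
        "Hey Mentor, first go to the zoo and see what animals are there; "
        "then head to the supermarket to check out what fruits are available; "
        "finally, take me to the soccer field for a game."
    )
    for line in run_missions(
        parse_instruction(command),
        navigate_to=lambda place: None,
        describe_scene=lambda place, task: f"Looking for {task} at the {place}.",
    ):
        print(line)
```

Keeping parsing, navigation, and scene description behind separate callables mirrors the modular separation the article describes. Each stub can be swapped for a real component without changing the queue logic.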

Images (1):

|

|||||

| Meta Pivots to AI Backbone for Humanoid Robots with Llama … | https://www.webpronews.com/meta-pivots-… | 1 | Dec 29, 2025 16:00 | active | |

Meta Pivots to AI Backbone for Humanoid Robots with Llama Models URL: https://www.webpronews.com/meta-pivots-to-ai-backbone-for-humanoid-robots-with-llama-models/ Description: Keywords Content: