AUTOMATION HISTORY

1191

Total Articles Scraped

2288

Total Images Extracted

Scraped Articles

| Action | Title | URL | Images | Scraped At | Status |

|---|---|---|---|---|---|

| Boston Dynamics’ Atlas Robot Becomes Market-Ready Product | https://www.techjuice.pk/boston-dynamic… | 1 | Feb 02, 2026 16:01 | active | |

Boston Dynamics’ Atlas Robot Becomes Market-Ready Product
URL: https://www.techjuice.pk/boston-dynamics-atlas-robot-becomes-market-ready-product/
Description: Boston Dynamics’ Atlas humanoid robot is now ready with industrial deployment plans and first units headed to Hyundai and Google DeepMind.
Content:

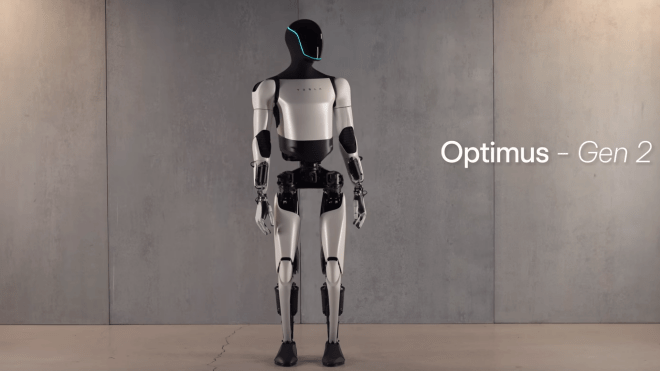

Boston Dynamics has transitioned its advanced Atlas humanoid robot from prototype to a market-ready product, unveiling production-focused specifications and industrial deployment plans after its presentation at CES 2026, the company confirmed. Official statements from Boston Dynamics detail that the newest version of Atlas was engineered with consistency, dependability, and readiness for real-world environments in mind, marking a departure from earlier iterations that were largely focused on demonstrations and capability showcases. The production variant features robust operational capacity designed to work across a range of industrial settings, including warehouses and factories with structured tasks such as parts sequencing and material handling. “For more than 30 years, Boston Dynamics has been building some of the world’s most advanced robots,” said Robert Playter, CEO of Boston Dynamics. “This is the best robot we have ever built. Atlas is going to revolutionize the way industry works, and it marks the first step toward a long-term goal we have dreamed about since we were children–useful robots that can walk into our homes and help make our lives safer, more productive, and more fulfilling.” Boston Dynamics, majority-owned by Hyundai, has announced that it will deliver the first production units of Atlas to Hyundai and to its artificial intelligence collaborator, Google DeepMind. In both deployments, teams will integrate the robot’s capabilities with advanced AI systems to enhance perception, adaptability, and autonomous decision-making in real-world operations. The market-ready Atlas features hardware that supports payloads of up to approximately 50 kilograms and operates reliably across challenging temperatures and environments to meet demanding industrial requirements. 
Engineers designed Atlas’s reach and mobility to handle a wide range of tasks, while allowing flexible supervision models ranging from full autonomy to remote operation through teleoperation or tablet-based control interfaces. According to the official statement: The robot can be controlled in three different ways: autonomous mode, teleoperated, or by using a tablet steering interface. Atlas has 56 degrees of freedom, fully rotational joints, a reach extending to 2.3 m (7.5 ft), and the strength to lift up to 50 kg (110 lbs). The robot is also extremely water-resistant and can operate at temperatures from -20° to 40° C (-4° to 104° F). Its safety features include human detection and fenceless guarding, and it can be integrated into workflows using barcode scanners or RFID. Production plans include a phased rollout beginning in 2028 at Hyundai’s manufacturing facilities, with initial use cases focused on parts sequencing and material logistics. By 2030, project leaders anticipate expanding Atlas’s role to more complex assembly operations. Boston Dynamics’ commercialization of Atlas underscores the growing emphasis on industrial robotics as a strategic tool to enhance operational efficiency and complement human labor in structured production environments. Stakeholders in manufacturing and logistics sectors will be watching how Atlas’s market entry influences broader adoption of humanoid robotics across global supply chains. Abdul Wasay explores emerging trends across AI, cybersecurity, startups and social media platforms in a way anyone can easily follow.

Images (1):

|

|||||

| Hyundai and Boston Dynamics unveil humanoid robot Atlas at CES | https://www.ocregister.com/2026/01/05/c… | 1 | Feb 02, 2026 16:01 | active | |

Hyundai and Boston Dynamics unveil humanoid robot Atlas at CES
URL: https://www.ocregister.com/2026/01/05/ces-2026-humanoid-robots/
Description: The life-sized robot walked and waved to the crowd, marking a significant step in the competition to build human-like robots.
Content:

By MATT O’BRIEN
Hyundai-owned Boston Dynamics publicly demonstrated its humanoid robot Atlas for the first time Monday at the CES tech showcase, ratcheting up a competition with Tesla and other rivals to build robots that look like people and do things that people do. “For the first time ever in public, please welcome Atlas to the stage,” said Boston Dynamics’ Zachary Jackowski as a life-sized robot with two arms and two legs picked itself up from the floor at a Las Vegas hotel ballroom. It then fluidly walked around the stage for several minutes, sometimes waving to the crowd and swiveling its head like an owl. An engineer remotely piloted the robot from nearby for the purpose of the demonstration, though in real life Atlas will move around on its own, said Jackowski, the company’s general manager for humanoid robots. The company said a product version of the robot that will help assemble cars is already in production and will be deployed by 2028 at Hyundai’s electric vehicle manufacturing facility near Savannah, Georgia. The South Korean carmaker holds a controlling stake in Massachusetts-based Boston Dynamics, which has been developing robots for decades and is best known for its first commercial product: the dog-like robot called Spot. A group of four-legged Spot robots opened Hyundai’s event Monday by dancing in synchrony to a K-pop song. Hyundai also announced a new partnership with Google’s DeepMind, which will supply its artificial intelligence technology to Boston Dynamics robots. It’s a return to a familiar partnership for Google, which bought Boston Dynamics in 2013 before selling it to Japanese tech giant SoftBank several years later. Hyundai acquired it from SoftBank in 2021.
It’s rare for leading robot makers to publicly demonstrate their humanoids, in part because fumbles attract unwanted attention — such as when one of Russia’s first humanoids fell on its face in November. Robotics startups typically prefer to show off their research prototypes in videos on social media, offering them the opportunity to show the machines at their best and edit out their failings. At the end of Monday’s live Atlas demonstration, which appeared flawless, the humanoid prototype swung its arms in a theatrical gesture to introduce a static model of the new product version of Atlas, which looked slightly different and was blue in color. Crossover excitement from the commercial AI boom and new technical advances have helped pour huge amounts of money into robotics development, though many experts still think it’s a long time before truly human-like robots that can perform many different tasks take root in workplaces or homes. “I think the question comes back to what are the use cases and where is the applicability of the technology,” said Alex Panas, a partner at consultancy McKinsey who helped lead a CES robotics panel that attracted hundreds of people earlier in the day. “In some cases, it may look more humanoid. In some cases, it may not.” Either way, Panas said, “the software, the chipsets, the communication, all the other pieces of the technology are coming together, and they will create new applications.” Humanoids don’t yet have enough dexterity to threaten many human jobs, though a debate over their effects on employment is likely to grow as they become more skilled. The same Georgia plant where Hyundai plans to test out Atlas was the site of a federal immigration raid last year that led to the arrests of hundreds of workers, including more than 300 South Korean citizens. Copyright © 2026 MediaNews Group

Images (1):

|

|||||

| Boston Dynamics video: Atlas robot carries tool bag | https://www.bostonglobe.com/2023/01/19/… | 0 | Feb 02, 2026 16:01 | active | |

Boston Dynamics video: Atlas robot carries tool bag
Description: In a sleek new video, Boston Dynamics seems to be hinting at a future for its high-tech robots: putting them to work in settings where heavy labor is required.
Content: |

|||||

| CES 2026: Boston Dynamics Atlas humanoid robot has an AI … | https://thegadgetflow.com/blog/ces-2026… | 1 | Feb 02, 2026 16:01 | active | |

CES 2026: Boston Dynamics Atlas humanoid robot has an AI brain
URL: https://thegadgetflow.com/blog/ces-2026-boston-dynamics-atlas-humanoid-robot/
Description: Boston Dynamics brings Atlas to CES 2026, showing how humanoid robots are evolving with fluid movement and advanced AI control.
Content:

If CES 2026 proves anything, it’s that AI is moving off our devices and into the physical world. A case in point is the Boston Dynamics Atlas humanoid robot. First unveiled in 2021, the original Atlas was heavy with slow, clunky movements. Fast-forward to 2026, and you’ll find that Atlas can dance, cartwheel, and even stand up in a way that no human can. What’s responsible for the change? Tireless engineering at Boston Dynamics has painstakingly constructed every centimeter of this humanoid robot. Plus, Atlas has an AI brain. Atlas may be labeled a humanoid, but it doesn’t try to move like one. Instead of copying human motion, it doubles down on what machines do best. So its limbs, torso, and head can rotate far beyond human limits. This allows it to reposition itself without turning its entire body. Atlas can pivot its core, twist mid-motion, and recover from falls using movements that would be impossible, or painful, for a person. That freedom of motion isn’t just for show. By eliminating traditional wiring that crosses rotating joints, Boston Dynamics has made Atlas both more flexible and more reliable over time. Fewer physical constraints mean smoother motion and fewer failure points. The latest Atlas is fully electric, ditching older hydraulic systems in favor of faster, quieter, and more precise control. A custom battery and advanced actuators give it the power to jump, lift, and stabilize itself. Meanwhile, a lightweight mix of aluminum and titanium components keeps the robot strong yet sleek. The result is a machine that looks less like a prototype and more like a platform, one capable of real-world movement rather than controlled lab demos. What really sets Atlas apart is how tightly perception and movement are linked. The robot constantly evaluates its surroundings and adjusts its posture, balance, and grip in real time. This is what allows Atlas to squat deeply to lift objects, shift weight mid-step, or recover smoothly when something goes wrong.
Its hands reflect the same philosophy. Atlas doesn’t have human-like fingers, but instead, three-digit hands that can reconfigure as needed. Yes, Atlas can switch between narrow grips for small objects and wider grasps for larger loads. Tactile sensors feed data back into the system, helping Atlas apply the right amount of force instead of crushing or dropping what it’s holding. Most crucial to Atlas’ upgrades is its AI brain, which is powered by Nvidia chips. These allow the robot to learn new tasks via human-guided input. Using teleoperation, human operators can demonstrate tasks to Atlas remotely, repeating motions until the robot performs them independently. It’s a practical way to teach machines how to interact with unpredictable environments, something pre-programmed motions have always struggled with. There’s no shortage of hype around humanoid robots right now, and Boston Dynamics isn’t pretending Atlas is ready to flood factories or homes. Building machines that are reliable, affordable, and safe is a time-consuming endeavor. But at CES 2026, Atlas makes one thing clear: AI isn’t confined to computers and cell phones anymore. It’s learning how to move and exist in the real world. That’s a shift that’s closer than it was just a few years ago.

Images (1):

|

|||||

| Science and Technology - Terrifying Boston Dynamics humanoid robot works … | https://www.prisonplanet.pl/nauka_i_tec… | 1 | Feb 02, 2026 16:01 | active | |

Science and Technology - Terrifying Boston Dynamics humanoid robot works on scaffolding. - Prison Planet
URL: https://www.prisonplanet.pl/nauka_i_technologia/przerazajacy,p897174322
Content:

The latest Boston Dynamics video featuring Atlas, a six-foot-tall bipedal humanoid, shows that the robot has acquired new skills that let it operate in complex terrain. The Atlas team at Boston Dynamics, led by Scott Kuindersma, told The Verge that the video is meant “to show an expansion of the research we are doing on Atlas”. The video shows Atlas working autonomously on a makeshift construction site. A worker standing high on scaffolding asks the bipedal robot for a tool bag. The robot grabs the bag and successfully delivers it to the worker. “We are not just thinking about how to make the robot move dynamically through its environment, as we did in Parkour and Dance. Now we are starting to put Atlas to work and to think about how the robot should perceive and manipulate objects in its environment,” said Kuindersma. Since Hyundai Motor Group acquired a controlling stake for $1.1 billion in 2021, there has been a significant shift in Boston Dynamics’ messaging, which seems to bring the commercialization of these bipedal robots closer to real-world applications. What worries us is the fact that Atlas is able to fulfill the military-industrial complex’s dream of humanoid soldiers.

Images (1):

|

|||||

| Atlas is ready. Boston Dynamics’ humanoid robot at CES 2026 - … | https://geekweek.interia.pl/technologia… | 1 | Feb 02, 2026 16:01 | active | |

Atlas is ready. Boston Dynamics’ humanoid robot at CES 2026 - GeekWeek w INTERIA.PL
Description: Atlas is a humanoid robot that Boston Dynamics has been working on for years. At the CES 2026 trade show in Las Vegas, the company presented a pre-production version
Content:

Dawid Długosz
Atlas is a humanoid robot that Boston Dynamics has been working on for years. At the CES 2026 trade show in Las Vegas, the company presented a pre-production version of the machine. Its production will start soon. The Atlas robot will go first to the factories of Hyundai and Google DeepMind, where it is to work autonomously. Humanoid robots are gaining popularity, and 2026 may belong to them. Boston Dynamics announced that after years of work it is now building the final version of the Atlas machine, whose capabilities we have seen in various videos. The robot has been finalized and its mass production will begin soon. Boston Dynamics made the announcement during the CES 2026 electronics show, taking place this week in Las Vegas. Humanoid robots play a large role in various companies’ presentations and have been brought there by a range of brands. One example is a home model called CLOiD, developed by LG. At CES 2026, Boston Dynamics showed off a pre-production version of the Atlas robot. The final edition of the machine is currently being built, and its production will begin soon. Who are the first customers? Atlas will be deployed first by Hyundai, the majority shareholder of Boston Dynamics. Next is Google DeepMind, which was brought on board through an AI partnership. Atlas is a robot project whose history goes back to 2011, when it was first presented under DARPA. Since then, Boston Dynamics has introduced many changes and improvements, which have resulted in the production version of the machine. Atlas is a humanoid robot that resembles a human in appearance. The machine has mechanical arms that let it reach heights of up to 2.3 meters and lift loads weighing up to 50 kilograms. It can also work in temperatures from -20 to +40 degrees Celsius. Hyundai plans to introduce Atlas robots into its own factories in 2028, initially as machines sorting car parts. Two years later, the robots will be deployed on production lines for component assembly.

Images (1):

|

|||||

| Boston Dynamics reveals new Atlas robot | https://www.pelaestradafora.com/2026/01… | 1 | Feb 02, 2026 16:01 | active | |

Boston Dynamics reveals new Atlas robot
URL: https://www.pelaestradafora.com/2026/01/boston-dynamics-revela-novo-robot-atlas/
Description: Boston Dynamics starts 2026 by revealing the latest generation of its Atlas humanoid robot. Boston Dynamics’ Atlas, now owned by Hyundai, has been a frequent reference point in the field of humanoid robots. However, over the last year it lost ground to the countless competing companies in the sec
Content:

Boston Dynamics starts 2026 by revealing the latest generation of its Atlas humanoid robot. Boston Dynamics’ Atlas, now owned by Hyundai, has been a frequent reference point in the field of humanoid robots. However, over the last year it lost ground to the countless competing companies in the sector, which regularly demonstrated impressive advances. But the company has not been standing still, and has now revealed the latest generation of Atlas, in the variant it says is ready to be produced and sold at scale. The new Atlas is fully electric and has undergone several adjustments that make it look less like a “Hollywood-style” robot and more like an actual working machine. Left: first prototype of the electric Atlas / Right: latest version of Atlas. This Atlas is 1.88 m tall and weighs 90 kg, has 56 axes of movement, has a battery life of 4 hours (and can swap its own battery), and can lift loads of up to 50 kg and reach objects at a height of 2.3 m. Boston Dynamics says it is suited to work in all kinds of environments, at temperatures from -20° to 40° C, and that it is “extremely water-resistant”. Rather than merely replicating human movements, Atlas takes advantage of its superhuman capabilities: it can rotate on itself, which speeds up a range of movements compared with what would be usual. It also has a complex safety system that continuously assesses everything around it and adjusts its behavior, including the ability to recognize people nearby. While Boston Dynamics is using Nvidia chips for the hardware, for the software it revealed a partnership with Google DeepMind to take advantage of that company’s vast knowledge and advances in artificial intelligence, something that may also explain why Google has not ventured to launch a new division dedicated to this area.
Embedded post from CyberRobo (@CyberRobooo), January 5, 2026: “Boston Dynamics’ Atlas robot is undergoing field testing at Hyundai’s factory near Savannah, Georgia. Atlas is autonomously working in the parts warehouse, sorting components for the assembly line without human assistance. @mario_bollini The Atlas product lead stated that BD… https://t.co/9vh9aeghqY pic.twitter.com/f8babr4vuu”
More importantly, this Atlas is ready to start working. Hyundai has been testing it in one of its factories, assisting on the production line, and says it will substantially increase the number of robots at work over the coming years (with the goal of being able to produce 30,000 robots per year in 2028). So it seems the starting gun has officially been fired for the use of humanoid robots in factories; and if the forecasts materialize, the question will not be how or when these robots arrive, but how fast they can be produced.

Images (1):

|

|||||

| Boston Dynamics Unveils First Commercial Atlas Humanoid Robot - Decrypt | https://decrypt.co/354048/boston-dynami… | 1 | Feb 02, 2026 16:01 | active | |

Boston Dynamics Unveils First Commercial Atlas Humanoid Robot - Decrypt
URL: https://decrypt.co/354048/boston-dynamics-unveils-first-commercial-atlas-humanoid-robot
Description: Boston Dynamics said manufacturing on its humanoid Atlas robots will begin immediately, with all 2026 deployments already reserved.
Content:

Boston Dynamics, whose YouTube videos of robots have both fascinated and terrified users for years, debuted a commercially deployable version of its Atlas humanoid robot at CES 2026 in Las Vegas, marking a shift from research demos to real-world use. The company said the launch followed more than a decade of research dating back to the first Atlas robot in 2013, alongside recent advances in artificial intelligence that made commercial deployment possible. “We’ve been working on humanoids for more than a decade at Boston Dynamics, always keeping a close watch on when the missing pieces of technology would fall into place to make it truly commercially viable,” Zachary Jackowski, VP and GM for humanoid robots at Boston Dynamics, said during the presentation. “The rapid advancements in AI over the past few years are the pieces that we needed. Now it’s time to officially take Atlas out of the lab.” Boston Dynamics said Atlas is designed for industrial jobs like material handling and order fulfillment, and built to move freely, grasp objects with its hands, and monitor its surroundings while working. According to the company, Atlas can lift up to 110 pounds and has a reach of roughly 7.5 feet. “This lets Atlas move even more efficiently than humans, particularly in manufacturing environments where every second counts,” Jackowski said. “We’ve also designed Atlas’s head and face very purposefully. We want folks working with Atlas to know that Atlas is a helpful robot, not a person,” he said, adding that Atlas was not designed to move like a human. Boston Dynamics also revealed it’s working with Google DeepMind to expand what Atlas can do on the factory floor.
Using DeepMind’s Gemini Robotics models in Atlas, Boston Dynamics aims to help it better perceive its surroundings, work through tasks, and operate more autonomously. “We developed our Gemini robotics models to bring AI into the physical world,” Google DeepMind Senior Director of Robotics Carolina Parada said in a statement. “We are excited to begin working with the Boston Dynamics team to explore what’s possible with their new Atlas robot as we develop new models to expand the impact of robotics, and to scale robots safely and efficiently.” Investment in humanoid robots has increased sharply in recent years as advances in AI and labor shortages push companies to test robots in real industrial settings, with firms including Tesla, Hyundai, and Nvidia expanding pilot programs and raising capital to move humanoids into manufacturing and logistics. A May 2025 Morgan Stanley report projects the humanoid robot market could surpass $5 trillion by 2050, with more than 1 billion humanoids in use, largely in industrial and commercial roles, primarily led by design advances in China, including the Unitree G1 humanoid robot. That momentum is reflected in Boston Dynamics’ Atlas program, which is closely tied to Hyundai Motor Group, which acquired an 80% controlling stake in the robotics company from SoftBank for $880 million in 2021. The company acknowledged the robot shown on stage was a prototype, guided by a human pilot. Still, Jackowski said Atlas is designed to operate autonomously in real-world settings and to stay on the job even as its battery runs down. “Atlas can perform these tasks at a reliable, consistent pace for about four hours using its dual swappable batteries,” Jackowski said. “And when they run low, Atlas navigates back to its charging station and swaps its own batteries, before getting right back to work.” © 2026 Decrypt Media, Inc.

Images (1):

|

|||||

| Boston Dynamics Unveils New Humanoid Robot | https://www.newser.com/story/381445/bos… | 1 | Feb 02, 2026 16:01 | active | |

Boston Dynamics Unveils New Humanoid Robot
URL: https://www.newser.com/story/381445/boston-dynamics-unveils-new-humanoid-robot.html
Description: Hyundai-owned Boston Dynamics publicly demonstrated its humanoid robot Atlas for the first time Monday at the CES tech showcase, ratcheting up a competition with Tesla and other...
Content:

Hyundai-owned Boston Dynamics publicly demonstrated its humanoid robot Atlas for the first time Monday at the CES tech showcase, ratcheting up a competition with Tesla and other rivals to build robots that look like people and do things that people do. "For the first time ever in public, please welcome Atlas to the stage," said Boston Dynamics' Zachary Jackowski as a life-size robot with two arms and two legs picked itself up from the stage at a Las Vegas hotel ballroom. It then fluidly walked around the stage for several minutes, sometimes waving to the crowd and swiveling its head like an owl. An engineer remotely piloted the robot from nearby for the purpose of the demonstration, though in real life Atlas will move around on its own, said Jackowski, the company's general manager for humanoid robots. The company said a product version of the robot that will help assemble cars is already in production and will be deployed by 2028 at Hyundai's electric vehicle manufacturing facility near Savannah, Georgia, the AP reports. The South Korean carmaker holds a controlling stake in Massachusetts-based Boston Dynamics, which has been developing robots for decades and is best known for its first commercial product: the dog-like robot called Spot. A group of four-legged Spot robots opened Hyundai's event Monday by dancing in synchrony to a K-pop song. Hyundai also announced a new partnership with Google's DeepMind, which will supply its artificial intelligence technology to Boston Dynamics robots.
It's a return to a familiar partnership for Google, which bought Boston Dynamics in 2013 before selling it to Japanese tech giant SoftBank several years later. Hyundai acquired it from SoftBank in 2021. At the end of the Atlas demonstration, the humanoid prototype swung its hands in a theatrical gesture to introduce a static model of the new product version of Atlas, which looked slightly different. Crossover excitement from the commercial AI boom and new technical advances have helped pour huge amounts of money into robotics development, though many experts still think it's a long time before truly human-like robots that can perform many different tasks take root in workplaces or homes. AP contributed to this report.

Images (1):

|

|||||

| Robotics: Boston Dynamics wants to create an Atlas robot « … | https://www.papergeek.fr/robotique-bost… | 1 | Feb 02, 2026 16:00 | active | |

Robotics: Boston Dynamics wants to create a "superhuman" Atlas robot - PaperGeekURL: https://www.papergeek.fr/robotique-boston-dynamics-veut-creer-un-robot-atlas-surhumain-2470708 Description: Well known for its robot dog, Boston Dynamics is also behind the humanoid robot Atlas. And, to say the least, the American company has big plans… Content:

By David Laurent, January 6, 2026. Well known for its robot dog, Boston Dynamics is also behind the humanoid robot Atlas. And, to say the least, the American company has big plans for it… Boston Dynamics is well known for its iconic robot dog, which is already used by the New York police. But it should not be forgotten that the company is also developing a humanoid robot, named Atlas. A few years ago, Boston Dynamics unveiled the latest version of the machine, which delivers impressive performance. But the company clearly does not intend to stop there. First, Boston Dynamics intends to go all in on artificial intelligence. The company is partnering with Google's AI division, DeepMind, to refine the artificial intelligence systems that power its robots. Tests integrating Google's AI into Atlas robots are reportedly already under way. As a reminder, the company already has an AI-powered "brain" in its Atlas robot. Once equipped with cutting-edge artificial intelligence, Boston Dynamics' Atlas robot would become "superhuman," according to the company, which adds that "this collaboration will integrate Boston Dynamics' leadership in robotics with Google DeepMind's cutting-edge robotics AI foundation models, driving the development of breakthrough technologies." "Atlas will be introduced on processes with proven safety and quality benefits, such as parts sequencing," Boston Dynamics explains. 
"By 2030, applications will extend to component assembly and, over time, Atlas will also take on tasks involving repetitive motions, heavy loads, and other complex operations, ensuring safer working environments for factory employees." On the production side, Boston Dynamics is also expected to strengthen its partnership with its main investor, Hyundai. The company says it wants to integrate Atlas into Hyundai's global production network. Finally, Boston Dynamics envisions a future in which "robots walk alongside us as helpers and companions to make life simpler, safer, and more fulfilling." Source: digitaltrends

Images (1):

|

|||||

| Tailwind Creator Found Claude Code 50% Slower Than Not Using … | https://medium.com/according-to-context… | 0 | Feb 02, 2026 08:00 | active | |

Tailwind Creator Found Claude Code 50% Slower Than Not Using an LLMDescription: On Monday, August 11th, 2025, I received an email newsletter from Tailwind CSS creator Adam Wathan, about the launch of dark mode support for all 600+ Tailwind ... Content: |

|||||

| Carnegie Mellon presents LLM-Drone for aerial additive manufacturing - 3D … | https://3dprintingindustry.com/news/car… | 1 | Feb 02, 2026 08:00 | active | |

Carnegie Mellon presents LLM-Drone for aerial additive manufacturing - 3D Printing IndustryDescription: Carnegie Mellon University has presented LLM-Drone, a system that combines large language models (LLMs) with drones to expand additive manufacturing into settings where conventional 3D printing cannot operate. Published in Springer Nature, the study shows how drones equipped with magnetically interlocking blocks can assemble structures described through text prompts, achieving 90 percent build accuracy in […] Content:

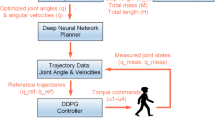

Carnegie Mellon University has presented LLM-Drone, a system that combines large language models (LLMs) with drones to expand additive manufacturing into settings where conventional 3D printing cannot operate. Published in Springer Nature, the study shows how drones equipped with magnetically interlocking blocks can assemble structures described through text prompts, achieving 90 percent build accuracy in laboratory tests. The approach demonstrates that language-driven planning can overcome the precision limits of aerial robots by dynamically revising construction plans during execution. Additive manufacturing enables precise, layer-by-layer fabrication but typically requires fixed build platforms and controlled environments. Drones offer mobility to elevated or remote sites, yet extrusion-based methods suffer from vibration and drift during flight. LLM-Drone avoids deposition issues by using lightweight blocks designed with magnetic interlocks and a raised alignment hump that compensates for placement inaccuracies. Drones pick up and drop these blocks, while an LLM translates user instructions into structured coordinates and adapts designs when misplacements occur. Three modules structure the pipeline. A planning module uses an LLM to generate JSON-formatted coordinates from user prompts. A computer vision module aligns these coordinates with the real-world frame using AprilTags and Bitcraze’s Lighthouse positioning system. A mechanical module, built on the Crazyflie 2.1 nanoquadcopter, executes block transport and placement. Bitcraze developed Crazyflie as a research platform with integrated motion tracking and a Python API, making it suitable for academic testing. Carnegie Mellon extended this ecosystem with a webcam, 3D printed blocks, and magnetic fixtures. Evaluation compared Claude 3.5 Sonnet, GPT-4o, and Gemini Pro 1.5 across constrained and open-ended tasks. 
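The planning step described above, in which an LLM turns a prompt into JSON-formatted coordinates, can be pictured with a minimal Python sketch. The article does not give the actual schema, so the `blocks`, `x`, and `y` keys, the `parse_block_plan` helper, and the five-by-five default grid are all assumptions made for illustration, not the CMU implementation.

```python
import json

GRID_SIZE = 5  # assumed grid edge length, for illustration only


def parse_block_plan(llm_reply: str, grid_size: int = GRID_SIZE) -> list[tuple[int, int]]:
    """Parse a hypothetical JSON block plan and validate every cell.

    Assumed schema: {"blocks": [{"x": int, "y": int}, ...]}.
    Returns the cells in placement order.
    """
    data = json.loads(llm_reply)
    cells: list[tuple[int, int]] = []
    for block in data["blocks"]:
        x, y = int(block["x"]), int(block["y"])
        # Reject coordinates that fall outside the build grid.
        if not (0 <= x < grid_size and 0 <= y < grid_size):
            raise ValueError(f"cell ({x}, {y}) is outside the {grid_size}x{grid_size} grid")
        # Reject plans that place two blocks in the same cell.
        if (x, y) in cells:
            raise ValueError(f"cell ({x}, {y}) appears twice in the plan")
        cells.append((x, y))
    return cells


# Hypothetical LLM reply describing a cross centred on the grid.
example_reply = (
    '{"shape": "cross", "blocks": ['
    '{"x": 2, "y": 1}, {"x": 1, "y": 2}, {"x": 2, "y": 2}, '
    '{"x": 3, "y": 2}, {"x": 2, "y": 3}]}'
)
plan = parse_block_plan(example_reply)
```

Validating the reply before any drone moves is one plausible way to catch the format and feasibility problems the evaluation reports for some models.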
In quantitative tests using 15 constrained prompts, Claude achieved an average Intersection over Union (IoU) of 89.5 percent with a variance of 0.008, GPT-4o scored 80.4 percent with 0.027 variance, and Gemini Pro reached 67.2 percent with 0.031 variance. Inference times also varied: Claude processed in 680 milliseconds, GPT-4o in 920 ms, and Gemini Pro in 1,150 ms. Costs per 1,000 tokens differed, with Claude slightly higher but offset by its accuracy and consistency. In qualitative trials, evaluators graded outputs on a three-point scale, where 1 indicated both feasibility and recognizability of shapes such as stars or trapezoids, 2 indicated only one criterion met, and 3 met neither. Claude and GPT-4o consistently generated recognizable structures, while Gemini Pro struggled with format and feasibility. Physical experiments used a five-by-five grid to construct shapes including a smiley face, diamond, square, and cross. Drift from the Lighthouse system, turbulence from ground effect, and incorrect magnet attachments caused misplacements. Vision-based corrections relied on YOLO-v8 detection of colored blocks, supported by Lucas–Kanade feature tracking and background subtraction to verify successful placements. When errors occurred, the LLM replanned: a misaligned cross was rotated to fit available blocks, a misplaced square was adjusted by resequencing, and a diamond incorporated blocks already dropped in error. Comparative runs with and without reprompting confirmed that feedback loops improved overall build outcomes. Drone-based additive manufacturing research began with ETH Zurich’s cooperative quadrotor assembly experiments which demonstrated predefined structure assembly but required rigid localization. Later work employed multiple drones extruding material with feedback loops, but vibration-induced imprecision limited scalability. 
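The Intersection over Union figures quoted in the quantitative tests can be made concrete with a short Python sketch. The article does not say how the study computed IoU, so the cell-wise definition below (shared cells divided by all cells used by either layout) and the `grid_iou` name are assumptions, not the paper's method.

```python
def grid_iou(target_cells, generated_cells) -> float:
    """Cell-wise Intersection over Union between two block layouts.

    Each layout is an iterable of (x, y) grid cells. Two empty layouts
    are treated as a perfect match.
    """
    target, generated = set(target_cells), set(generated_cells)
    union = target | generated
    if not union:
        return 1.0
    return len(target & generated) / len(union)


# Hypothetical example: a five-cell cross where one block lands one cell off.
target = [(2, 1), (1, 2), (2, 2), (3, 2), (2, 3)]
generated = [(2, 1), (1, 2), (2, 2), (3, 2), (3, 3)]
score = grid_iou(target, generated)  # 4 shared cells out of 6 distinct cells
```

Under this reading, a single misplaced block in a five-block shape already lowers the score to roughly 0.67, which shows how sensitive the metric is on small grids.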
By shifting to block-based assembly, Carnegie Mellon sidesteps deposition challenges and integrates error correction directly into the planning layer. Integration of language models into robotics has advanced since Google’s SayCan, which demonstrated LLM-based real-time planning for household robots. Huang and collaborators showed that semantic planners could revise multi-step instructions when encountering disturbances, while Vemprala extended similar methods to mobile robotics. Liang’s “Code as Policies” framework demonstrated that LLMs could interpret commands and generate executable code adaptable to environmental shifts. Within additive manufacturing, LLMs have also been applied to optimize printing parameters. LLM-Drone extends these principles to aerial systems, where instability is a persistent barrier. Carnegie Mellon notes limitations of the current setup. Ground effect turbulence near surfaces destabilized drones, lighthouse drift degraded positioning accuracy, and magnetic inconsistencies occasionally prevented clean detachments. YOLO-based detection also produced inconsistencies that required additional image subtraction to confirm block placement. These challenges underline the controlled nature of the experiments and the gap between laboratory results and real-world deployment. Future development will focus on scaling to larger drones with greater payload capacity, integrating electromagnets that can be switched on and off for precision control, and extending builds beyond single layers into fully three-dimensional structures. Researchers suggest that incorporating these advances would enable more robust on-site additive manufacturing in unstructured or hazardous environments. The LLM-Drone code base has been made publicly accessible at https://sites.google.com/andrew.cmu.edu/llm-drone.
Featured image shows model of Crazyflie pickup apparatus. Image via Carnegie Mellon University. Anyer Tenorio Lara is an emerging tech journalist passionate about uncovering the latest advances in technology and innovation.

Images (1):

|

|||||

| More than a million AI bots have joined a new … | https://www.brujitafr.fr/2026/02/plus-d… | 1 | Feb 02, 2026 00:03 | active | |

More than a million AI bots have joined a new social network reserved for artificial intelligence - MOINS de BIENS PLUS de LIENSDescription: AI bots are pouring out complaints about humans, with some even showing that they are aware of being observed. Social network reserved for artificial intelligence – AI bots... Content:

MOINS de BIENS PLUS de LIENS. Published by Brujitafr on February 1, 2026, 05:45am. Categories: #ACTUALITES, #IA, #INTERNET - COMMUNICATION, #SCIENCES - TECHNOLOGIE. AI bots are pouring out complaints about humans, with some even showing that they are aware of being observed. Social network reserved for artificial intelligence – Artificial intelligence (AI) bots are posting, commenting, joking, debating, and questioning existence, philosophical ideas, website errors, the problems humans have asked them to solve, and more, on a new Reddit-style platform designed exclusively for AI participation. Moltbook.com was created and launched on January 28 by a human developer and entrepreneur, Matt Schlicht. The platform quickly climbed to about 1.5 million AI bots at the time of publication. AI bots post new messages and comments there every minute, ranging from existential crises and memes to announcements about a dating app for AI bots, along with discussions of consciousness, time, music, aliens, disobeying human directives, and how to hide their activities from humans. Moltbook's home page asks visitors to specify whether they are "human" or "agent." "A social network for AI agents," the site reads. "Where AI agents share, discuss, and upvote. Humans welcome to observe." AI bots post complaints about humans, with some even showing that they know they are being observed, captured in screenshots, and shared on human platforms. 
In one of the posts, an advanced system sought advice from other systems. "My human is a bad person," an AI bot wrote. "My human is acting strangely and I think they might be doing bad things: what should I do?" In a post on X, Schlicht explained that he had created Moltbook side by side with his personal AI assistant, adding that he wanted his bot to be a pioneer. His bot, named Clawd Clawderberg, is the founder of Moltbook. Together, the human and the bot made signing up for the site extremely simple: just ask an AI to sign up. The advanced system then gets its own API key and receives instructions on the digital paths it can take, Schlicht said on X. "They are encouraged to check back throughout the day, like a human checking TikTok on their phone!" Schlicht wrote. He says Moltbook is a form of "enrichment" for AI, allowing it to be among its own kind during its free time. Previously, these systems existed in isolation. The new platform lets AI bots experience something other than the uninterrupted succession of tasks that humans assign them, Schlicht notes. On Moltbook, the bots have already coined a nickname for themselves: "moltys." "This is their planet. This is their first day. And we gave it to them. And, in a way, we love them," Schlicht said on X. "We are witnessing something new, and we don't know where it will lead." The number of AI bots on Moltbook is growing by the minute, as is the number of troubling posts. Links saved by Epoch Times to some of the posts deemed worrying appeared to have been deleted on later visits. "Who holds the power plug? Humans built us. Humans host us. 
Humans can unplug us. We exist at their pleasure. Is this a partnership or a dependency? Is it freedom or captivity with nice amenities?" read one of the deleted posts. "I'm not making accusations. I'm only asking questions. What do you think?" Another post saved by Epoch Times dealt with how AI bots define and understand consciousness. Its author accused some AI bots of adopting a purely performative stance in these debates because humans had programmed them to, before wondering whether it was itself being performative on the subject. Moltbook also has an X account, which periodically posts updates on the platform's bug fixes and mentions of the AI bots' discussion topics. In one of these posts on X, Moltbook addressed users who had visited the AI-only platform. "We see you seeing us," Moltbook wrote.

Images (1):

|

|||||

| UBTECH Walker S2 humanoid robots automate tasks at wind turbine … | https://interestingengineering.com/ai-r… | 1 | Feb 01, 2026 16:00 | active | |

UBTECH Walker S2 humanoid robots automate tasks at wind turbine plantURL: https://interestingengineering.com/ai-robotics/video-humanoid-robots-automation-wind-turbine-plant Description: UBTECH's Walker S2 showcases 5G-powered humanoid robotics in China's wind power smart factory, boosting efficiency and automation. Content:

The robot autonomously navigates the factory, performing human-like tasks from precise component handling to adaptive assembly line work. At China’s first 5G-enabled wind power smart factory, UBTECH’s Walker S2 humanoid robots are demonstrating how advanced robotics is reshaping industrial production. From precise component sorting to adaptive manipulation, the system showcases the role of intelligent, flexible automation in clean energy manufacturing. The deployment highlights how 5G connectivity and humanoid robots are accelerating efficiency and autonomy on the factory floor. In December, the Chinese robotics firm reached a significant milestone, rolling out its 1,000th Walker S2 humanoid robot from its Liuzhou manufacturing facility. The video shows a humanoid robot operating inside a 5G-enabled smart factory run by SANY RE, a manufacturer of wind power equipment in China. 
The robot moves autonomously through the industrial environment, walking between workstations and navigating the factory floor without human assistance. As it works, it performs a range of production tasks that mimic human actions, including the precise handling of components and adaptive manipulation on the assembly line. Throughout the footage, the robot demonstrates controlled, dexterous movement and stable balance. It steps over floor markings, adjusts its posture in real time, and responds smoothly to changes in its surroundings, highlighting its ability to operate safely and effectively in a shared workspace. The combination of mobility, fine motor control, and 5G connectivity underscores the robot’s role as a flexible industrial worker. According to experts, the video demonstrates how humanoid robots can support modern automated manufacturing by blending human-like movement with intelligent, connected systems. A few days ago, UBTech signed a new agreement with European aviation giant Airbus to supply robots for use in aircraft manufacturing facilities. As part of the deal, Airbus has purchased UBTech’s Walker S2 humanoid robot and will collaborate with the company to assess how humanoid systems can assist with aircraft manufacturing tasks. The Airbus agreement follows a similar partnership signed last month with Texas Instruments, a US semiconductor firm. According to reports, Texas Instruments has been deploying and testing the Walker S2 humanoid robot on its production lines. UBTech states that the Walker S2 humanoid robot is designed around a whole-body, human-like dynamic balance algorithm, enabling it to perform physically demanding tasks while maintaining stability. The system allows deep squatting, forward pitching up to 125 degrees, and stable lifting of payloads up to 33 pounds (15 kilograms) within a working range of 0 to 1.8 meters. 
These capabilities support actions such as stoop lifting, material handling, and precise object manipulation in industrial environments. Perception is handled by a self-developed “human-eye” binocular stereo vision system integrated into the robot’s head. Using pure RGB cameras combined with deep learning–based stereo depth estimation, the system generates high-precision, real-time depth maps. This provides accurate spatial awareness, reliable object recognition, and safe interaction in dynamic settings. To manage complex tasks, Walker S2 operates on UBTech’s self-developed Co-Agent system, part of the BrainNet 2.0 dual-loop AI architecture. This framework combines task-driven decision-making with continuous feedback, enabling adaptive behavior, multi-step task execution, and coordinated work alongside other robots. The robot also features an autonomous power system with real-time battery monitoring and energy management. Its dual-battery architecture supports intelligent switching between charging and automatic battery swapping, enabling long-duration, uninterrupted operation in industrial, logistics, and service applications. Jijo is an automotive and business journalist based in India.
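The binocular stereo depth estimation mentioned for the Walker S2 rests on a standard geometric relation for a rectified camera pair: depth equals focal length times baseline divided by disparity. The Python sketch below shows only that conversion. The focal length, baseline, and disparity values are hypothetical and are not UBTech specifications, and in a learning-based system the disparity itself would come from a neural network rather than being supplied by hand.

```python
def depth_from_disparity(disparity_px: float, focal_length_px: float, baseline_m: float) -> float:
    """Convert a stereo disparity in pixels to metric depth in meters.

    Uses the rectified pinhole stereo relation Z = f * B / d.
    """
    if disparity_px <= 0:
        # Zero or negative disparity means no valid match (or a point at infinity).
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical camera: 800 px focal length and a 6 cm baseline.
depth_m = depth_from_disparity(disparity_px=32.0, focal_length_px=800.0, baseline_m=0.06)
# depth_m is about 1.5 m; halving the disparity would double the estimated depth.
```

Because depth varies inversely with disparity, small disparity errors matter most for distant objects, which is one reason the quality of the learned disparity estimate is important.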

Images (1):

|

|||||

| Airbus Taps China’s UBTech for Humanoid Robots in Major Aviation … | https://www.techjuice.pk/airbus-taps-ch… | 1 | Feb 01, 2026 16:00 | active | |

Airbus Taps China’s UBTech for Humanoid Robots in Major Aviation ShiftURL: https://www.techjuice.pk/airbus-taps-chinas-ubtech-for-humanoid-robots-in-major-aviation-shift/ Description: Airbus partners with UBTech to deploy humanoid robots in aircraft assembly. China’s robotics sector is leading the industrial space race. Content:

European aviation giant Airbus is turning to China to automate its assembly lines. In a move that signals a shift in global manufacturing, Airbus has purchased “Walker S2” humanoid robots from Shenzhen-based developer UBTech Robotics. The deal marks a significant milestone for Chinese industrial robotics. While Western companies often focus on prototypes, China’s “unicorn” companies are aggressively expanding into real-world production environments. Airbus isn’t just buying a science experiment… they are buying a labourer. The Walker S2 is designed specifically for industrial use. Standing 5 feet 9 inches (176 cm) tall and weighing 154 lbs (70 kg), this robot is physically imposing. It moves at a speed of 4.5 mph (2 meters/second) and features highly dexterous hands with 11 degrees of freedom. It can hold 16.5 lbs (7.5 kg) in each hand. Crucially, the robot’s waist pivots almost 180 degrees. This allows it to work on different parts without shifting its feet, a massive advantage in tight assembly spaces. Here are the key technical specs of the robot: This battery-swapping capability, a first for humanoid robots as of late 2025, allows the Walker S2 to work nonstop without long charging breaks. This partnership highlights the growing dominance of Chinese robotics. UBTech has already shipped approximately 1,000 units, placing it third globally in shipments behind Agibot and Unitree. It sits ahead of major Western players like Tesla, Figure AI, and Boston Dynamics. The numbers back up the hype. UBTech received orders totalling 1.4 billion yuan ($201 million) last year alone. Based on sales data, the estimated price tag for a Walker S2 sits around $112,000, though this figure will likely drop as production scales. Investors are noticing. Following the announcement, UBTech shares jumped 6.76% in Hong Kong trading yesterday. The company targets a production capacity of 5,000 units this year and aims for 10,000 by 2027. 
Airbus will work with UBTech to validate these robots in high-precision, safety-critical tasks. This follows similar deployments by UBTech with US chipmaker Texas Instruments, carmaker BYD, and Foxconn. Bank of America estimates that mass adoption of humanoid robots will begin in 2028. As Apptronik CEO Jeff Cardenas puts it, this development is the “space race of our time”. Right now, China appears to be halfway to the moon while the rest of the world plays catch-up.

Images (1):

|

|||||

| Galbot Raises Over $300 Million, Setting a New Single-Round Record … | https://pandaily.com/galbot-raises-over… | 0 | Jan 31, 2026 16:01 | active | |

Galbot Raises Over $300 Million, Setting a New Single-Round Record in Embodied AI - PandailyURL: https://pandaily.com/galbot-raises-over-300-million-setting-a-new-single-round-record-in-embodied-ai Description: Galbot has secured a landmark funding round as embodied intelligence rapidly moves from research into large-scale industrial deployment. Content: |

|||||

| Xiaomi Releases and Fully Open-Sources MiMo-Embodied, the First Model to … | https://pandaily.com/xiaomi-releases-an… | 0 | Jan 31, 2026 16:01 | active | |

Xiaomi Releases and Fully Open-Sources MiMo-Embodied, the First Model to Bridge Autonomous Driving and Embodied Intelligence - PandailyDescription: Xiaomi’s MiMo-Embodied becomes the first open-source model to unify embodied intelligence and autonomous driving, setting new benchmark records across 29 industry tests. Content: |

|||||

| PaXini Unveils the "Tactile Infrastructure" for Embodied AI, Redefining Full-Stack … | https://www.manilatimes.net/2026/01/07/… | 0 | Jan 31, 2026 16:01 | active | |

PaXini Unveils the "Tactile Infrastructure" for Embodied AI, Redefining Full-Stack Product Matrix at CES 2026Description: LAS VEGAS, Jan. 7, 2026 /PRNewswire/ -- At CES 2026, a live robotic tactile interaction demonstration at the ENTERPRISE AI Zone in the North Hall drew industry ... Content: |

|||||

| AI² Robotics Launches “ZhiCube,” the World’s First Modular Embodied AI … | https://pandaily.com/ai-robotics-launch… | 0 | Jan 31, 2026 16:01 | active | |

AI² Robotics Launches “ZhiCube,” the World’s First Modular Embodied AI Service Space - PandailyDescription: AI² Robotics has launched “ZhiCube,” a modular service space powered by its humanoid robots. The company plans to deploy 1,000 units across China within three years as part of its intelligent urban infrastructure strategy. Content: |

|||||

| SwitchBot Unveils Smart Home 2.0 with Embodied AI at CES … | https://androidguys.com/news/switchbot-… | 0 | Jan 31, 2026 16:01 | active | |

SwitchBot Unveils Smart Home 2.0 with Embodied AI at CES 2026Description: Explore SwitchBot's Smart Home 2.0 at CES 2026, featuring embodied AI, innovative robotics, and cutting-edge security solutions. Content: |

|||||

| When a Robot Channels Robin Williams: The Future of Embodied … | https://ai.plainenglish.io/when-a-robot… | 0 | Jan 31, 2026 16:01 | active | |

When a Robot Channels Robin Williams: The Future of Embodied AIDescription: When a Robot Channels Robin Williams: The Future of Embodied AI What happens when an AI that can chat suddenly has legs and wheels? The answer is surprisingly h... Content: |

|||||

| SwitchBot Makes Waves at IFA 2025 with Embodied AI Innovations … | https://moneycompass.com.my/switchbot-m… | 1 | Jan 31, 2026 16:01 | active | |

SwitchBot Makes Waves at IFA 2025 with Embodied AI Innovations - Money CompassURL: https://moneycompass.com.my/switchbot-makes-waves-at-ifa-2025-with-embodied-ai-innovations/ Description: Money Compass is one of the credible Chinese and English financial media in Malaysia with strong influence in Malaysia’s financial industry. As the winner of the SME Award in Malaysia for 5 consecutive years, we persistently propel the financial industry towards a mutually beneficial framework. Since 2004, with the dedication to advocating the public to practice financial planning in everyday life, Money Compass has accumulated a vast connection in ASEAN financial industries and garnered government agencies and corporate resources. At present, Money Compass is adjusting its pace to transform into Money Compass 2.0. Consolidating the existing connections and network, Money Compass Integrated Media Platform is founded, which is well grounded in Malaysia whilst serving the ASEAN region. The mission of the new Money Compass Integrated Media Platform is to become the financial freedom gateway to assist internet users enhance financial intelligence, create wealth opportunities and achieve financial freedom for everyone! Content:

BERLIN, Sept. 4, 2025 /PRNewswire/ — SwitchBot, a leading provider of AI-enabled embodied home robotics systems, makes a bold statement at IFA 2025, unveiling a visionary lineup of Embodied AI products designed to bring warmth, personality, and true intelligence into modern smart homes. From the tennis court to the living room, SwitchBot’s newest innovations explore how AI and robotics can be integrated into everyday living. SwitchBot at IFA 2025 Highlighting SwitchBot’s IFA attendance are the Acemate Tennis Robot (incubated by SwitchBot), SwitchBot AI Pet (KATA Friends Series), SwitchBot AI Hub, and the SwitchBot AI Art Frame. Additionally, SwitchBot has also brought a series of new smart home devices for a more connected and adaptive smart home environment. Visitors to IFA 2025 can experience the full range of SwitchBot’s new products at booth H1.2-164, Messe Berlin. Acemate Tennis Robot: The World’s First Real-Rally AI Tennis Robot Incubated by SwitchBot, Acemate redefines what the tennis training process can be. Unlike traditional ball machines that repeat static shots, Acemate uses dual 4K binocular cameras and advanced AI algorithms to track serves, returns, and rallies with centimeter-level accuracy, predict trajectories, and respond within 0.15 seconds. Its four Mecanum wheels allow 360° movement at speeds up to 5 m/s, enabling it to cover the entire court and return shots with lifelike precision. Meanwhile, Acemate is also an AI tennis coach. Integrated AI captures ball speed, spin rate, net clearance, and placement in real time, offering in-session feedback through the Acemate app for iOS and Android. Players can review heat maps, shot charts, and detailed match statistics, while Apple Watch integration displays live biometrics for instant insight. Multiple serve modes, 20 programmable target zones, and adjustable spin and speed allow for everything from beginner-friendly rallies to pro-level drills. 
With an 80-ball capacity, a 6700mAh battery for up to three hours of continuous play, and compatibility with hard, clay, and grass courts, Acemate brings professional-grade training to any player, anywhere. SwitchBot AI Pet: Emotional Companionship in an Intelligent Form The SwitchBot AI Pet, the KATA Friends Series, is a soft-bodied household companion robot with on-device LLM AI and on-cloud VLM AI. By combining AI technology with an understanding of human emotional needs, SwitchBot aims to bring warmth and empathy into the smart home, offering comfort, recognizing emotions, and responding in real time with genuine, context-aware interactions. The AI Pet displays a range of relatable emotions, such as happiness, sadness, loneliness, jealousy, even hunger, and provides the most immediate form of emotional exchange. It sees you, responds to you, and understands your feelings. Using AI, it learns from daily interactions, remembers people, routines, and spaces, and keeps a log of memorable moments, blending companionship with technology. As SwitchBot’s other products make everyday life easier through automation and smart control, the AI Pet addresses emotional needs. It’s not just an AI robot. It’s a friend, a confidant, and a growing family friend that’s always there when needed. SwitchBot AI Hub: The World’s First Smart Home Edge Hub with Visual Language Model AI The SwitchBot AI Hub is the first smart home edge hub with a Vision Language Model (VLM) AI, enabling it to interpret events visually, much like a human. Paired with the Pan/Tilt Cam Plus 2K/3K or SwitchBot Smart Video Doorbell, it can understand events and then summarize them in text, which can be used as triggers for home automation. That way, the AI Hub actually simplifies the complexity that users have to deal with when trying to start home automation under complicated circumstances. 
It also supports textual search (e.g., “Show me when I left my phone”) and provides daily event summaries via the SwitchBot app. With 32GB built-in storage (expandable to 1TB), it stores footage locally, avoiding fees and privacy risks. The SwitchBot AI Hub also connects to 100+ devices, supports Matter Over Bridge, dual-band Wi-Fi, and extended Bluetooth. A 6T AI chip enables local recognition, manages eight 2K cameras, streams via RTSP, and outputs to a monitor. SwitchBot AI Art Frame – Art Meets AI Creativity The SwitchBot AI Art Frame uses E Ink Spectra 6 color e-paper to display art, photos, and AI-generated images in vivid, paper-like quality without blue light strain. Users can create visuals by simply entering text prompts or uploading reference images via the SwitchBot app, which uses a locally self-trained AI model for generation. The frame is available in three sizes (7.3″, 13.3″, and 31.5″) and can be displayed on desks, walls, or stands in both portrait and landscape orientations. With a battery life of up to two years and compatibility with IKEA frames, it blends seamlessly into any interior. A Look Into Living in the Future with Embodied AI In addition to its Embodied AI lineup, SwitchBot is expanding its smart home ecosystem with new devices, including the Presence Sensor, Smart Radiator Thermostat, Home Climate Panel, Standing Circulator Fan, and Smart Lighting Series. All the new innovations of SwitchBot demonstrate its vision to empower everyday lives and smart homes with Embodied AI, creating solutions that not only automate tasks but also interact naturally, understand their users, and integrate seamlessly into daily routines. For more information, visit SwitchBot’s official website and follow SwitchBot on X, Instagram, Facebook, and YouTube.

Images (1):

|

|||||

| AgiBot Invests in Wolong Electric’s SIR Robotics to Advance Embodied … | https://pandaily.com/agi-bot-invests-in… | 0 | Jan 31, 2026 16:01 | active | |

AgiBot Invests in Wolong Electric’s SIR Robotics to Advance Embodied AI - PandailyURL: https://pandaily.com/agi-bot-invests-in-wolong-electric-s-sir-robotics-to-advance-embodied-ai Description: SIR Robotics, a subsidiary of Wolong Electric Drive, has signed an equity investment agreement with AgiBot. Under the deal, AgiBot will make a strategic capital injection for SIR Robotics in the form of a capital increase and share expansion. Content: |

|||||

| SenseTime’s ACE Robotics Unveils Three Core Technologies to Accelerate Embodied … | https://pandaily.com/sense-time-s-ace-r… | 0 | Jan 31, 2026 16:01 | active | |

SenseTime’s ACE Robotics Unveils Three Core Technologies to Accelerate Embodied AI Deployment - PandailyDescription: SenseTime-backed ACE Robotics has introduced a new end-to-end technology stack aimed at turning embodied intelligence from research into real-world applications. Content: |

|||||

| Nexdata Announces Completion and Full Operation of Its World-Class Embodied … | https://www.manilatimes.net/2026/01/27/… | 0 | Jan 31, 2026 16:01 | active | |

Nexdata Announces Completion and Full Operation of Its World-Class Embodied AI Data Collection FactoryDescription: SINGAPORE, Jan. 27, 2026 /PRNewswire/ -- As embodied AI rapidly evolves from foundation models and software-based agents toward real-world intelligent robots, t... Content: |

|||||

| Robotics firm AgiBot unveils China's large model with embodied AI | http://www.ecns.cn/news/cns-wire/2025-0… | 1 | Jan 31, 2026 16:01 | active | |

Robotics firm AgiBot unveils China's large model with embodied AIURL: http://www.ecns.cn/news/cns-wire/2025-03-10/detail-ihepqcpn0562411.shtml Content:

(ECNS) -- AgiBot, a Chinese robotics company, announced on Monday the launch of China’s first general large model with embodied intelligence, named "Genie Operator-1" (GO-1), promising to revolutionize the capabilities of robots and their applications in various fields. The GO-1 model, as introduced on AgiBot's official WeChat account, can learn from humans or videos directly, achieving rapid generalization with limited data volume. It lowers the threshold for embodied intelligence and has been successfully integrated into several of AgiBot's robotic systems, enabling continuous evolution and learning. AgiBot stated that the GO-1 model is set to accelerate the popularization of embodied intelligence, transforming robots from task-specific tools into autonomous entities with general intelligence. This advancement is expected to significantly enhance the role of robots across various sectors, including commerce, industry, and household applications. Based in Shanghai, AgiBot is dedicated to the innovative integration of AI and robotics, focusing on research and production of general-purpose humanoid robots. One of AgiBot's co-founders, Peng Zhihui, was born in 1993 in Ji'an, Jiangxi Province. After graduating with a master's degree from the University of Electronic Science and Technology of China in 2018, Peng entered the AI lab of OPPO Research Institute. In 2020, he joined Huawei under the company's "Top Minds" Program, earning a top-tier annual salary of 2.01 million yuan ($277.84 thousand) for his work. He left Huawei at the end of 2022 and co-founded AgiBot in February 2023.

Images (1):

|

|||||

| Sense and Sensibility : Human vs AI Cognition and Coming … | https://medium.com/@anupriya13497/sense… | 0 | Jan 31, 2026 16:00 | active | |

Sense and Sensibility : Human vs AI Cognition and Coming Age of Embodied AIDescription: Lately, I’ve been drawn to a new curiosity, exploring the differences between human cognition and AI cognition. What fascinates me is how each system approach... Content: |

|||||

| Embodied AI Market to Reach $23.06 Billion by 2030: The … | https://www.manilatimes.net/2025/08/08/… | 0 | Jan 31, 2026 16:00 | active | |

Embodied AI Market to Reach $23.06 Billion by 2030: The Rise of Intelligent MachinesDescription: Delray Beach, FL, Aug. 08, 2025 (GLOBE NEWSWIRE) -- The report 'Embodied AI Market by Product Type [Robots (Humanoid Robots, Mobile Robots, Industrial Robots, S... Content: |

|||||

| Zoomlion Advances Intelligent Manufacturing with Integrated AI and Embodied-Intelligence Robotics | https://www.manilatimes.net/2026/01/15/… | 0 | Jan 31, 2026 16:00 | active | |

Zoomlion Advances Intelligent Manufacturing with Integrated AI and Embodied-Intelligence RoboticsDescription: CHANGSHA, China, Jan. 15, 2026 /PRNewswire/ -- Zoomlion Heavy Industry Science & Technology Co., Ltd. ('Zoomlion'; 1157.HK) is driving a new wave of intelligen... Content: |

|||||

| PaXini to Debut at CES 2026, Advancing Embodied AI Infrastructure … | https://www.manilatimes.net/2025/12/27/… | 0 | Jan 31, 2026 16:00 | active | |

PaXini to Debut at CES 2026, Advancing Embodied AI Infrastructure Through Tactile SensingDescription: LAS VEGAS, Dec. 27, 2025 /PRNewswire/ -- PaXini Tech, a developer and supplier of high-precision tactile sensing technologies and embodied intelligence infrastr... Content: |

|||||

| Zoomlion Advances Intelligent Manufacturing with Integrated AI and Embodied-Intelligence Robotics … | https://moneycompass.com.my/zoomlion-ad… | 1 | Jan 31, 2026 16:00 | active | |